Automated README file generator, powered by large language model APIs


Objective

Readme-ai is a developer tool that auto-generates README.md files using a combination of data extraction and generative AI. Simply provide a repository URL or local path to your codebase, and a well-structured, detailed README file will be generated for you.

Motivation

Readme-ai streamlines documentation creation and maintenance, enhancing developer productivity. This project aims to enable developers of all skill levels, across all domains, to better understand, use, and contribute to open-source software.

Important

Readme-ai is currently under development with an opinionated configuration and setup. It is vital to review all generated text from the LLM API to ensure it accurately represents your project.

Standard CLI usage, providing a repository URL to generate a README file.

readmeai-cli-demo.mov

Generate a README file without making API calls using the --api offline CLI option.

readmeai-streamlit-demo.mov

Tip

Offline mode is useful for generating a boilerplate README at no cost. View the offline README.md example here!

Built with flexibility in mind, readme-ai allows users to customize various aspects of the README using CLI options. Content is generated using a combination of data extraction and making a few calls to LLM APIs.

Currently, four sections of the README file are generated using LLMs:

i. Header: Project slogan that describes the repository in an engaging way.

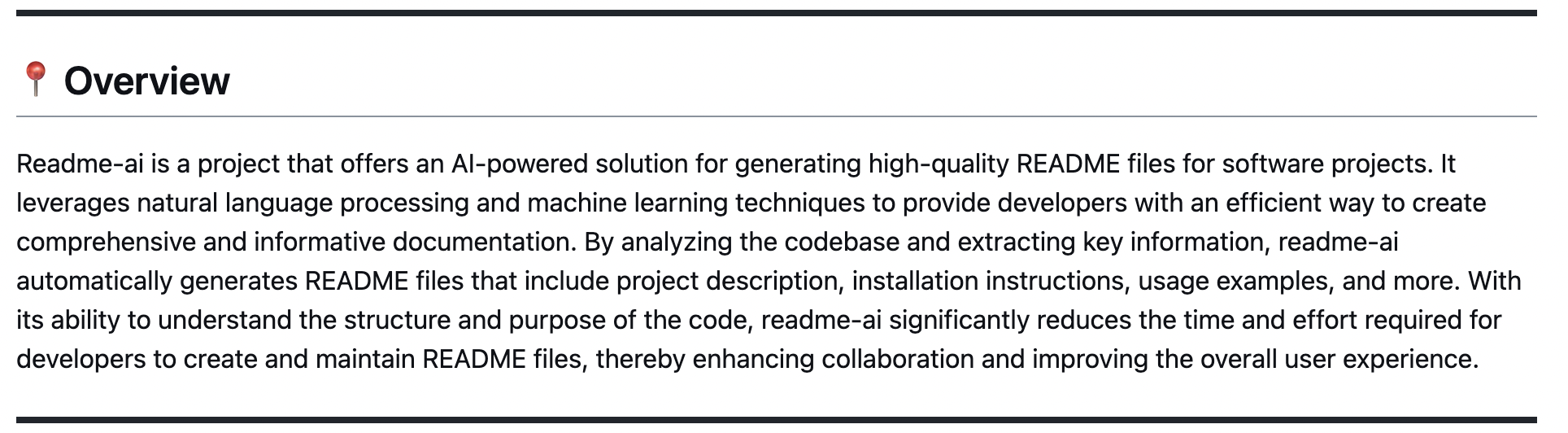

ii. Overview: Provides an intro to the project's core use-case and value proposition.

iii. Features: Markdown table containing details about the project's technical components.

iv. Modules: Codebase file summaries are generated and formatted into markdown tables.

All other content is extracted from processing and analyzing repository metadata and files.

The header section is built using repository metadata and CLI options. Key features include:

- Badges: SVG icons that represent codebase metadata, provided by shields.io and skill-icons.

- Project Logo: Select a project logo image from the base set or provide your image.

- Project Slogan: Catchphrase that describes the project, generated by generative AI.

- Table of Contents/Quick Links: Links to the different sections of the README file.

See a few example headers generated by readme-ai below.

See the Configuration section below for the complete list of CLI options and settings.

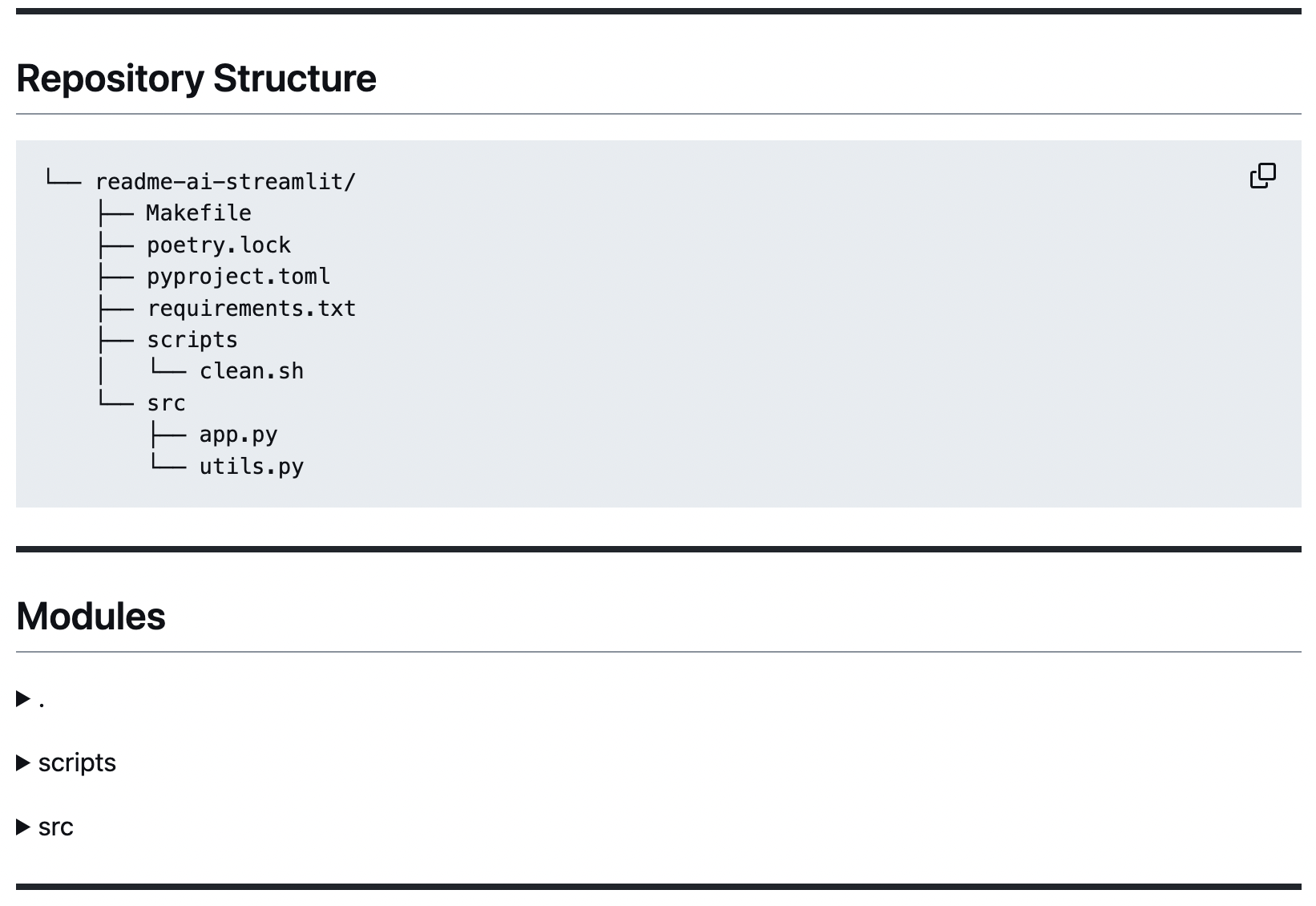

📃 Codebase Documentation

Repository Structure

A directory tree structure is generated using pure Python (tree.py) and embedded in the README.
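A directory tree like the one described above can be produced with the standard library alone. The following is a minimal sketch, not the project's tree.py; the function name `build_tree` and the `max_depth` parameter are assumptions made for illustration.

```python
from pathlib import Path


def build_tree(root: Path, max_depth: int = 2) -> list[str]:
    """Return the lines of a text tree for `root`, descending at most `max_depth` levels."""
    lines = [f"{root.name}/"]

    def walk(directory: Path, prefix: str, depth: int) -> None:
        if depth >= max_depth:
            return
        # Directories first, then files, each group sorted case-insensitively.
        entries = sorted(directory.iterdir(), key=lambda p: (p.is_file(), p.name.lower()))
        for index, entry in enumerate(entries):
            is_last = index == len(entries) - 1
            connector = "└── " if is_last else "├── "
            suffix = "/" if entry.is_dir() else ""
            lines.append(f"{prefix}{connector}{entry.name}{suffix}")
            if entry.is_dir():
                # Keep the vertical guide only while siblings remain below.
                walk(entry, prefix + ("    " if is_last else "│   "), depth + 1)

    walk(root, "", 0)
    return lines
```

Calling `"\n".join(build_tree(Path("path/to/repo")))` yields a block of text that can be embedded in a README.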

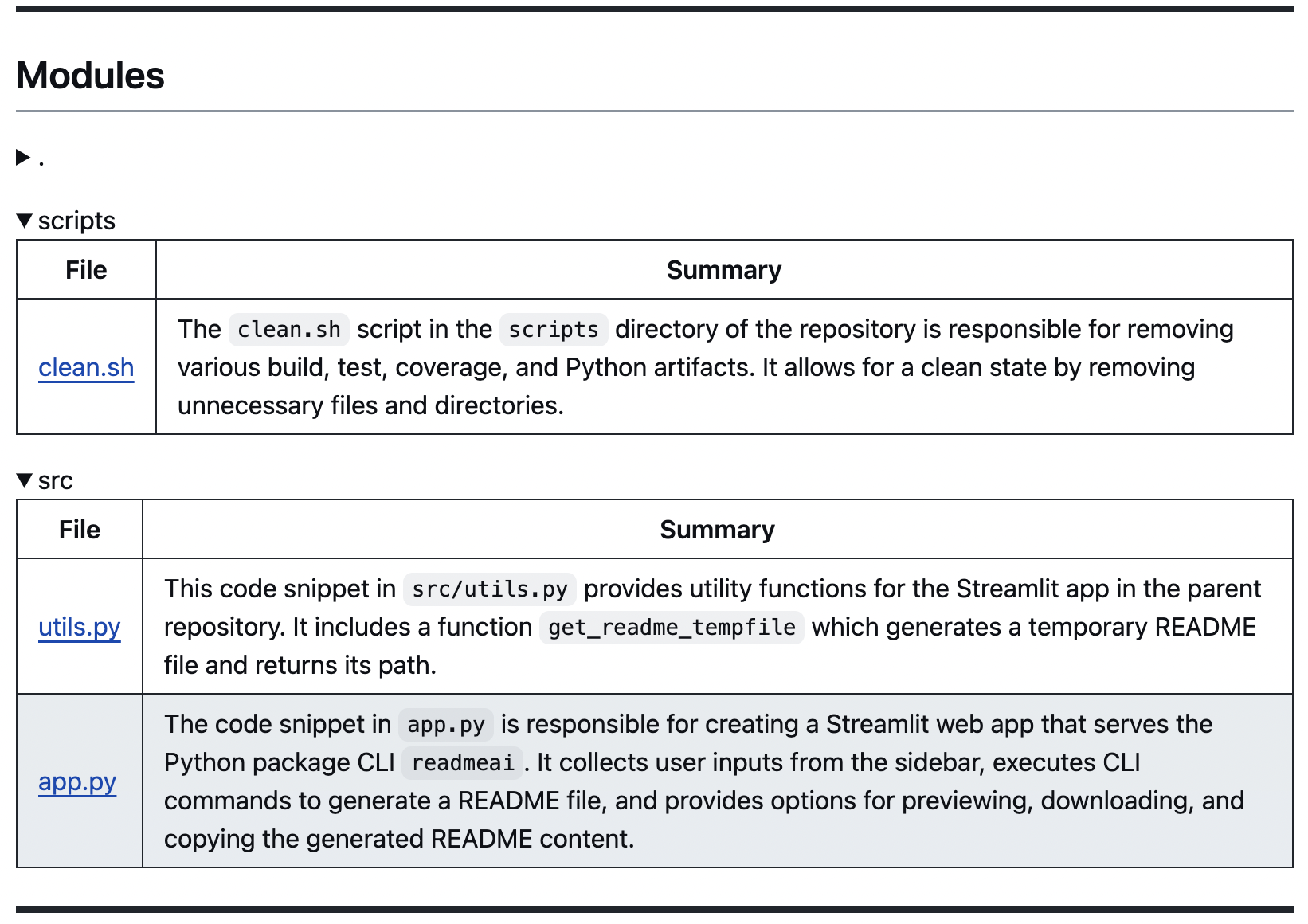

Codebase Summaries

Code summaries are generated using LLMs, grouped by directory, and displayed in markdown tables.
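Grouping file summaries by directory and rendering one markdown table per group might look roughly like the sketch below. It assumes the summaries are already available as a mapping from file path to summary text; the function name `summaries_to_markdown` and the sample data are hypothetical and do not reflect readme-ai's internals.

```python
from collections import defaultdict
from pathlib import PurePosixPath


def summaries_to_markdown(summaries: dict[str, str]) -> str:
    """Render one markdown table per directory from a {file path: summary} mapping."""
    grouped = defaultdict(list)
    for file_path, summary in sorted(summaries.items()):
        path = PurePosixPath(file_path)
        grouped[str(path.parent)].append((path.name, summary))

    sections = []
    for directory, rows in grouped.items():
        table = [f"**{directory}**", "", "| File | Summary |", "| --- | --- |"]
        for name, summary in rows:
            # Escape pipes so a summary cannot break the table layout.
            table.append(f"| {name} | {summary.replace('|', chr(92) + '|')} |")
        sections.append("\n".join(table))
    return "\n\n".join(sections)
```

Files in the repository root are grouped under `.` because that is what `PurePosixPath.parent` returns for a bare file name.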

📌 Overview & Features Table

The overview and features sections are generated using LLM APIs. Structured prompt templates are injected with repository metadata to help produce more accurate and relevant content.
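To illustrate what injecting repository metadata into a structured prompt template can mean, here is a minimal sketch using `string.Template` from the standard library. The template wording, the metadata keys, and the function name `build_prompt` are all assumptions for illustration; the prompts readme-ai actually sends may differ.

```python
from string import Template

# Hypothetical prompt template; the placeholders are filled with repository metadata.
OVERVIEW_PROMPT = Template(
    "Write a short overview for the project '$name'.\n"
    "Primary languages: $languages.\n"
    "Key dependencies: $dependencies.\n"
    "Focus on the core use case and value proposition."
)


def build_prompt(metadata: dict) -> str:
    """Fill the overview template with values extracted from the repository."""
    return OVERVIEW_PROMPT.substitute(
        name=metadata["name"],
        languages=", ".join(metadata["languages"]),
        dependencies=", ".join(metadata["dependencies"]),
    )
```

Grounding the prompt in extracted facts (names, languages, dependencies) gives the model less room to invent details about the project.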

🔗 Dynamic Quickstart Guides

Getting Started or Quick Start

Generates structured guides for installing, running, and testing your project. This content is created by identifying the dependencies and languages used in the codebase and mapping this data to commands using static config files such as the commands.toml file.
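The mapping from detected languages to quickstart commands can be sketched as follows. The `COMMANDS` and `EXTENSIONS` dictionaries are hypothetical stand-ins for the kind of data a static config file might hold; they are not the contents of commands.toml, and `quickstart_commands` is an illustrative name.

```python
from collections import Counter
from pathlib import PurePosixPath

# Hypothetical lookup tables standing in for a static config file.
EXTENSIONS = {".py": "python", ".go": "go"}
COMMANDS = {
    "python": {"install": "pip install -r requirements.txt", "run": "python main.py", "test": "pytest"},
    "go": {"install": "go build -o myapp", "run": "./myapp", "test": "go test"},
}


def quickstart_commands(file_paths):
    """Pick the most common known language and return its install/run/test commands."""
    counts = Counter(
        EXTENSIONS[PurePosixPath(path).suffix]
        for path in file_paths
        if PurePosixPath(path).suffix in EXTENSIONS
    )
    if not counts:
        return None  # No recognized language; a generic guide would be needed.
    language, _ = counts.most_common(1)[0]
    return {"language": language, **COMMANDS[language]}
```

Keeping the commands in data rather than code means support for a new language only requires a new config entry.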

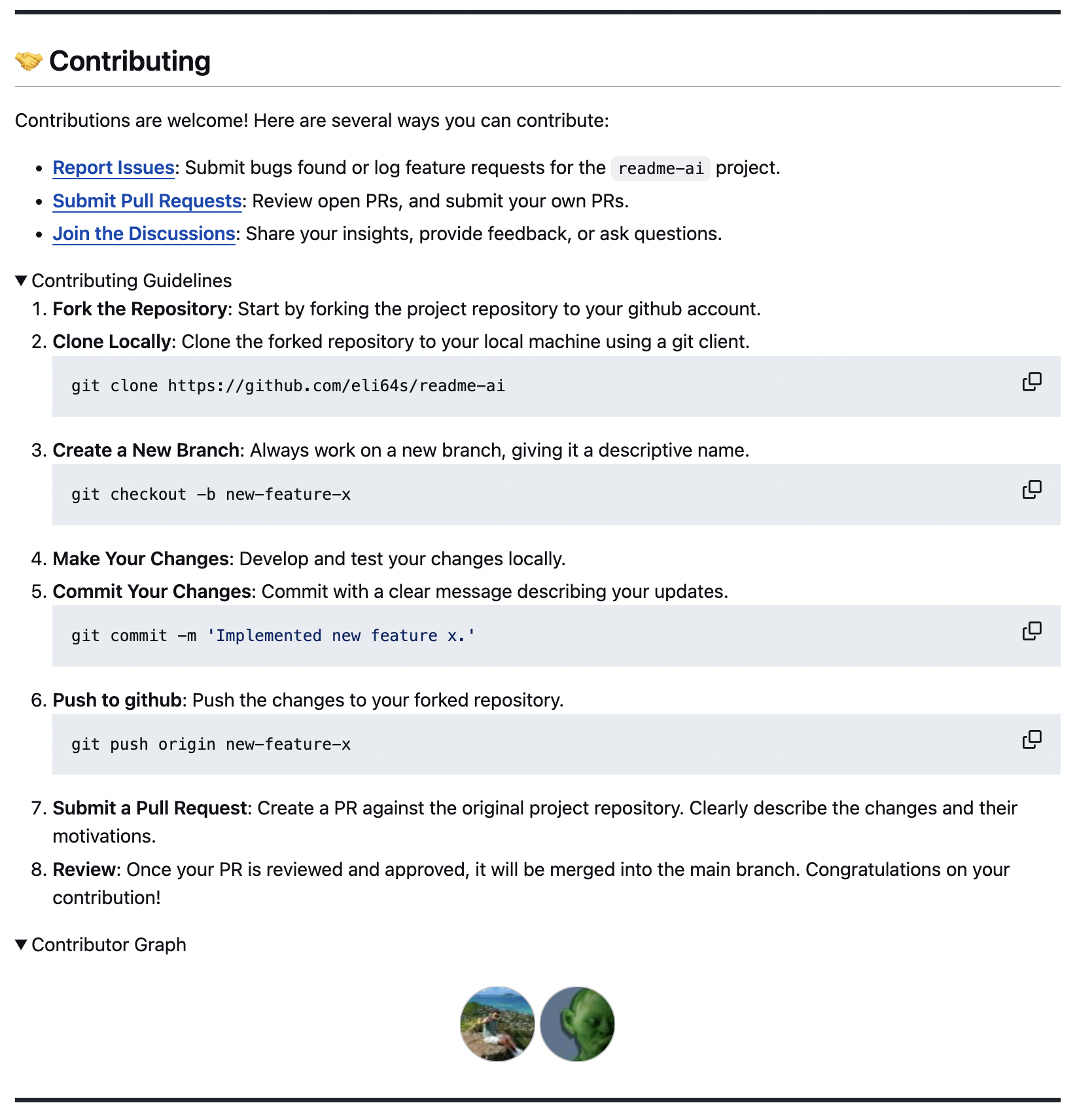

👋 Contributing & More

🧩 Template READMEs

This feature is currently under development. The template system will allow users to generate README files in different flavors, such as ai, data, web development, etc.


| | Output File | Input Repo | Repo Contents |
|---|---|---|---|
| ▹ | readme-python.md | readme-ai | Python |
| ▹ | readme-google-gemini.md | readme-ai | Python |
| ▹ | readme-typescript.md | chatgpt-app-react-ts | TypeScript, React |
| ▹ | readme-postgres.md | postgres-proxy-server | Postgres, Duckdb |
| ▹ | readme-kotlin.md | file.io-android-client | Kotlin, Android |
| ▹ | readme-streamlit.md | readme-ai-streamlit | Python, Streamlit |
| ▹ | readme-rust-c.md | rust-c-app | C, Rust |
| ▹ | readme-go.md | go-docker-app | Go |
| ▹ | readme-java.md | java-minimal-todo | Java |
| ▹ | readme-fastapi-redis.md | async-ml-inference | FastAPI, Redis |
| ▹ | readme-mlops.md | mlops-course | Python, Jupyter |
| ▹ | readme-local.md | Local Directory | Flink, Python |

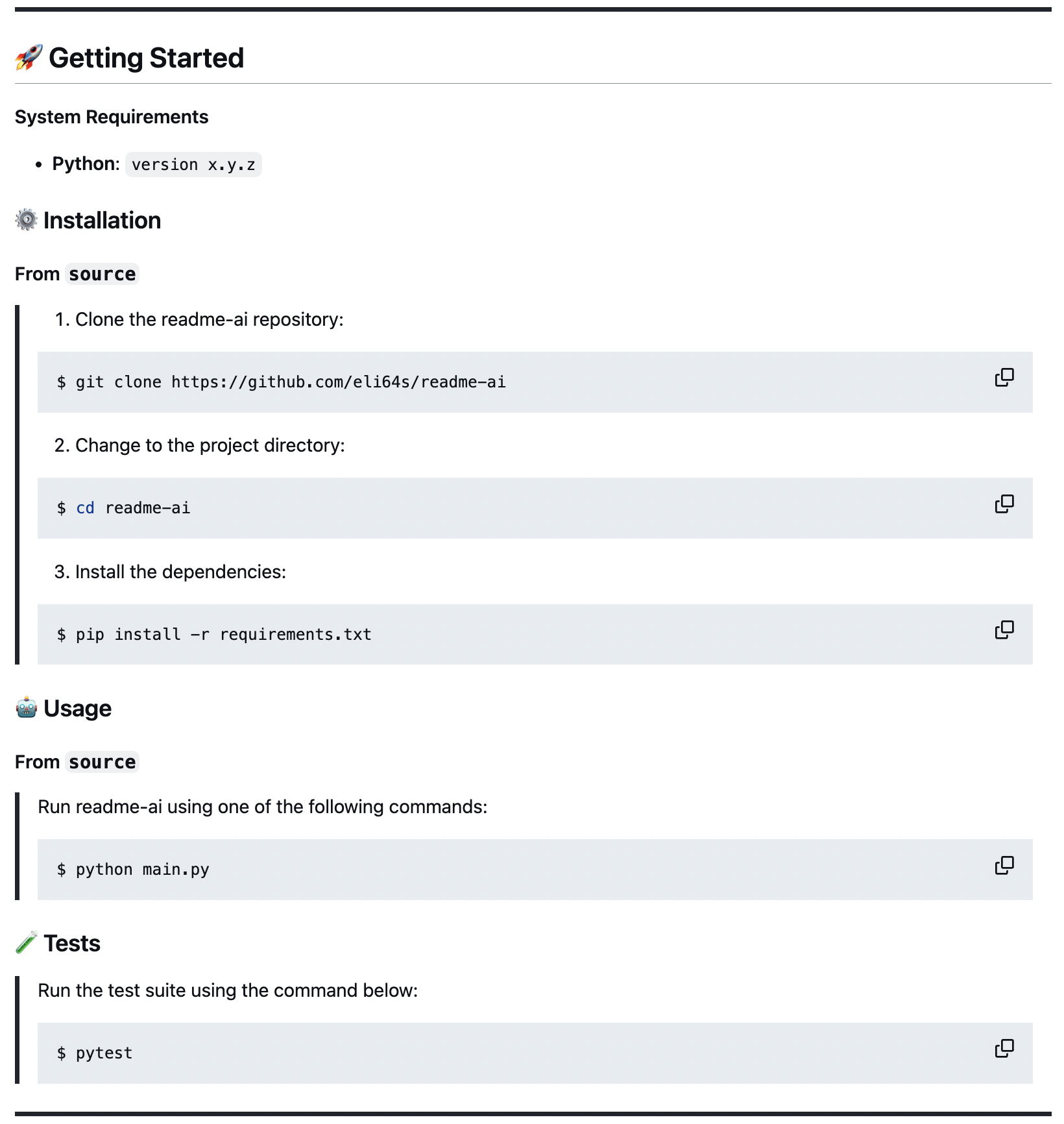

System Requirements:

- Python 3.9+

- Package manager/Container: pip, pipx, docker

- LLM service: OpenAI, Ollama, Google Vertex AI, Offline

Choosing an LLM Service:

- OpenAI: Recommended, requires an API key and account setup.

- Ollama: Free and open-source, potentially slower and more resource-intensive.

- Google Vertex AI: Requires a Google Cloud project and service account.

- Offline Mode: Free, generates a boilerplate README without making API calls.

Repository URL or Local Path:

pip install readmeai

docker pull zeroxeli/readme-ai:latest

conda install -c conda-forge readmeai

From source

Clone repository and change directory.

$ git clone https://github.com/eli64s/readme-ai

$ cd readme-ai

$ bash setup/setup.sh

$ poetry install

- Similarly, you can use pipenv or pip to install the dependencies listed in requirements.txt.

Tip

Use pipx to install and run Python command-line applications without causing dependency conflicts with other packages installed on the system.

1. Set Environment Variables

Using OpenAI API Key

Set your OpenAI API key as an environment variable.

$ export OPENAI_API_KEY=<your_api_key>  # Using Linux or macOS

$ set OPENAI_API_KEY=<your_api_key>  # Using Windows

Using Ollama

Set Ollama local host as an environment variable.

$ export OLLAMA_HOST=127.0.0.1

$ ollama pull mistral:latest  # llama2, etc.

$ ollama serve  # run if not using the Ollama desktop app

For more details, check out the Ollama repository.

Using Google Vertex AI

Set your Google Cloud project ID and location as environment variables.

$ export VERTEXAI_LOCATION=<your_location>

$ export VERTEXAI_PROJECT=<your_project>
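Before running the CLI, it can help to confirm that the variables for the chosen service are actually set. The sketch below uses the variable names from the steps above; the service labels used as dictionary keys (`openai`, `ollama`, `vertex`, `offline`) are assumptions for this example and are not necessarily the values the `--api` option accepts.

```python
import os

# Environment variables named in the setup steps, keyed by an illustrative service label.
REQUIRED_ENV_VARS = {
    "openai": ["OPENAI_API_KEY"],
    "ollama": ["OLLAMA_HOST"],
    "vertex": ["VERTEXAI_LOCATION", "VERTEXAI_PROJECT"],
    "offline": [],  # Offline mode makes no API calls, so nothing is required.
}


def missing_env_vars(service: str, environ=None) -> list[str]:
    """Return the required variables that are unset or empty for `service`."""
    environ = os.environ if environ is None else environ
    return [name for name in REQUIRED_ENV_VARS[service] if not environ.get(name)]
```

For example, `missing_env_vars("openai")` returns an empty list once `OPENAI_API_KEY` has been exported in the current shell.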

2. Run CLI

readmeai --repository https://github.com/eli64s/readme-ai --api openai

docker run -it \
  -e OPENAI_API_KEY=$OPENAI_API_KEY \
  -v "$(pwd)":/app zeroxeli/readme-ai:latest \
  -r https://github.com/eli64s/readme-ai

Try directly in your browser on Streamlit, no installation required! For more details, check out the readme-ai-streamlit repository.

From source

$ conda activate readmeai

$ python3 -m readmeai.cli.main -r https://github.com/eli64s/readme-ai

$ poetry shell

$ poetry run python3 -m readmeai.cli.main -r https://github.com/eli64s/readme-ai

$ make pytest

$ nox -f noxfile.py

Tip

Use nox to test the application against multiple Python environments and dependencies!

Customize the README file using the CLI options below.

| Option | Type | Description | Default Value |
|---|---|---|---|
| --alignment, -a | String | Align the text in the README.md file's header. | center |
| --api | String | LLM API service to use for text generation. | offline |
| --badge-color | String | Badge color name or hex code. | 0080ff |
| --badge-style | String | Badge icon style type. | see below |
| --base-url | String | Base URL for the repository. | v1/chat/completions |
| --context-window | Integer | Maximum context window of the LLM API. | 3999 |
| --emojis, -e | Boolean | Adds emojis to the README.md file's header sections. | False |
| --image, -i | String | Project logo image displayed in the README file header. | blue |
| 🚧 --language | String | Language for generating the README.md file. | en |
| --model, -m | String | LLM API to use for text generation. | gpt-3.5-turbo |
| --output, -o | String | Output file name for the README file. | readme-ai.md |
| --rate-limit | Integer | Maximum number of API requests per minute. | 5 |
| --repository, -r | String | Repository URL or local directory path. | None |
| --temperature, -t | Float | Sets the creativity level for content generation. | 0.9 |
| 🚧 --template | String | README template style. | default |
| --top-p | Float | Sets the probability of the top-p sampling method. | 0.9 |
| --tree-depth | Integer | Maximum depth of the directory tree structure. | 2 |
| --help | | Displays help information about the command and its options. | |

🚧 feature under development
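When readme-ai is driven from a script, the options in the table can be assembled into a command programmatically. The helper below is a small sketch under stated assumptions: `build_command` is a hypothetical name, it only handles options that take a value (how Boolean options such as `--emojis` are passed is not covered here), and it only builds the argument list without running anything.

```python
def build_command(repository: str, **options) -> list[str]:
    """Build a readmeai argument list from keyword options, e.g. tree_depth=3."""
    command = ["readmeai", "--repository", repository]
    for name, value in options.items():
        if value is None:
            continue  # Skip unset options so the CLI default applies.
        # Map Python-style names to CLI flags: tree_depth -> --tree-depth.
        command += ["--" + name.replace("_", "-"), str(value)]
    return command
```

The resulting list can be passed to `subprocess.run(command, check=True)` once readmeai is installed and on the PATH.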

The --badge-style option lets you select the style of the default badge set.

Supported styles: default, flat, flat-square, for-the-badge, plastic, skills, skills-light, social.

When providing the --badge-style option, readme-ai does two things:

- Formats the default badge set to match the selection (e.g., flat, flat-square, etc.).

- Generates an additional badge set representing your project's dependencies and tech stack (e.g., Python, Docker, etc.).

$ readmeai --badge-style flat-square --repository https://github.com/eli64s/readme-ai

{... project logo ...}

{... project name ...}

{...project slogan...}

Developed with the software and tools below.

{... end of header ...}
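For the styles served by shields.io (flat, flat-square, plastic, for-the-badge, social), the style and color end up as parts of a static badge URL. The sketch below shows one way to build such a URL following the shields.io static badge format; the function name `shields_badge_url` is hypothetical, and this is not necessarily how readme-ai constructs its badges. The skills and skills-light styles come from skill-icons and are not covered.

```python
from urllib.parse import quote


def shields_badge_url(label: str, message: str, color: str = "0080ff", style: str = "flat-square") -> str:
    """Build a shields.io static badge URL of the form /badge/<label>-<message>-<color>."""

    def escape(text: str) -> str:
        # In static badge paths a literal dash is written "--" and a literal underscore "__".
        return quote(text.replace("-", "--").replace("_", "__"), safe="")

    return f"https://img.shields.io/badge/{escape(label)}-{escape(message)}-{color}?style={style}"


def badge_markdown(label: str, message: str, **kwargs) -> str:
    """Wrap the badge URL in markdown image syntax."""
    return f"![{label}]({shields_badge_url(label, message, **kwargs)})"
```

The default color here mirrors the `--badge-color` default listed in the options table.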

Select a project logo using the --image option.

Available logo images: blue, gradient, black, cloud, purple, grey.

For custom images, see the following options:

- Use --image custom to invoke a prompt to upload a local image file path or URL.

- Use --image llm to generate a project logo using an LLM API (in development).

- Add new CLI options to enhance README file customization.

  - --api to integrate a single interface for all LLM APIs (OpenAI, Gemini, Ollama, etc.)

  - --audit to review existing README files and suggest improvements.

  - --template to select a README template style (e.g., ai, data, web, etc.)

  - --language to generate README files in any language (e.g., zh-CN, ES, FR, JA, KO, RU)

- Develop a robust documentation generator to build full project docs (e.g., Sphinx, MkDocs)

- Create community-driven templates for README files and gallery of readme-ai examples.

- GitHub Actions script to automatically update README file content on repository push.

To grow the project, we need your help! See the links below to get started.