
SETraining is extremely slow #21

Comments

I ran cProfile on the SE script, and it shows that the PyKaldi ASR decode function is the bottleneck.
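For readers who want to reproduce this kind of measurement, here is a minimal sketch of profiling one training step with the standard-library `cProfile` and `pstats` modules. The functions `decode_stub` and `train_step_stub` are hypothetical stand-ins, not the actual SE script or any PyKaldi call; swap in the real training step to see where the time goes.

```python
import cProfile
import io
import pstats


def decode_stub(n):
    # Hypothetical stand-in for the expensive decoding call.
    return sum(i * i for i in range(n))


def train_step_stub():
    # Hypothetical stand-in for one SE training update:
    # a costly "decode" followed by a cheap "update".
    decode_stub(200_000)
    return sum(range(1_000))


def profile_top(func, limit=10):
    """Run func under cProfile and return a text report sorted by cumulative time."""
    profiler = cProfile.Profile()
    profiler.enable()
    func()
    profiler.disable()
    buf = io.StringIO()
    stats = pstats.Stats(profiler, stream=buf)
    stats.sort_stats("cumulative").print_stats(limit)
    return buf.getvalue()


report = profile_top(train_step_stub)
print(report)
```

Sorting by cumulative time puts the call that dominates the step, including everything it calls, near the top of the report, which is how a decoding bottleneck would show up.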

Hi, this is not normal. It should not be this slow. Can you let me know your configs, such as the batch size, decoding graph, etc.?

I use a batch size of 8, with the decoding graph and transition model from a tri4b system in the Kaldi LibriSpeech example. Other configurations shouldn't influence the decoding speed... hmm.


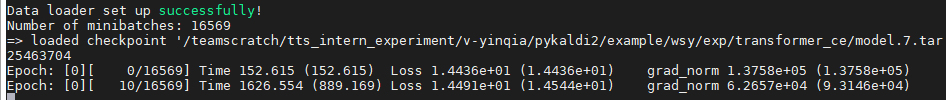

The normal training speed should look like this:

:18:19.000Z /container_e09_1568924618046_16257_01_000002: [1,0]:=> loaded checkpoint '/datablob/users/lial/pykaldi2/seed_model/model.6.tar'

The batch size here is 4, and I used one V100 GPU. It took less than 30 seconds for 10 updates. I suspect that your model diverged early in training, which means it takes much longer to decode the utterances because the model is poor. I would suggest paying attention to the learning rate, the decoding configs, and CE and/or L2 regularization. Note that using a larger momentum is very helpful for SE training in my experiments.
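To compare a run against the "less than 30 seconds for 10 updates" figure above, one option is to time a fixed number of updates directly. The following is a minimal sketch using only the standard library; `time_updates` and `dummy_step` are hypothetical names, and `dummy_step` merely sleeps in place of a real SE update.

```python
import time


def time_updates(step_fn, num_updates=10):
    """Return the wall-clock seconds taken by num_updates calls to step_fn."""
    start = time.perf_counter()
    for _ in range(num_updates):
        step_fn()
    return time.perf_counter() - start


def dummy_step():
    # Hypothetical stand-in for one training update.
    time.sleep(0.01)


elapsed = time_updates(dummy_step, num_updates=10)
print(f"{elapsed:.2f} s for 10 updates ({elapsed / 10:.3f} s per update)")
```

Timing a small, fixed number of updates early in training makes it easier to tell whether slowness is present from the first step or grows as the model degrades.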

Hi, I have completed the GMM stage and run the CE training stage of the transformer based on a tri4b system. But when I try SE training on a Tesla P100, it is extremely slow, as the following picture shows. Is this normal?
