By Jianzhu Guo, Xiangyu Zhu, Yang Yang, Fan Yang, Zhen Lei and Stan Z. Li. The code repo is maintained by Jianzhu Guo.

[Updates]

- 2020.10.2: Add onnxruntime support to greatly reduce the inference latency. Just append the `--onnx` action when running `demo.py`; see `TDDFA_ONNX.py` for details.
- 2020.9.20: Add features including pose estimation and serialization to .ply and .obj; see the `pose`, `ply`, `obj` options in `demo.py`.
- 2020.9.19: Add PNCC (Projected Normalized Coordinate Code) and uv texture mapping features; see the `pncc`, `uv_tex` options in `demo.py`.

This work, named 3DDFA_V2, extends 3DDFA. The paper, titled Towards Fast, Accurate and Stable 3D Dense Face Alignment, was accepted by ECCV 2020. The supplementary material is here. The gif above shows a webcam demo of the tracking result, recorded in my lab. This repo is the official implementation of 3DDFA_V2.

Compared to 3DDFA, 3DDFA_V2 achieves better performance and stability. In addition, 3DDFA_V2 uses the fast face detector FaceBoxes instead of Dlib. A simple 3D renderer written in C++ and Cython is also included. This repo supports onnxruntime, and the inference latency of the default backbone is about 1.35ms/image with a single image as input. If you are interested in this repo, just try it on this google colab! Valuable issues and PRs are welcome 😄

See requirements.txt. The code is tested on macOS and Linux. Note that this repo uses Python 3. The major dependencies are PyTorch, numpy, opencv-python and onnxruntime.
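Before building, it can help to confirm that the major dependencies are importable. The snippet below is a minimal sketch using only the standard library. The table maps each package to its usual import name (`torch` for PyTorch, `cv2` for opencv-python), and `missing_dependencies` is a hypothetical helper, not part of this repo.

```python
import importlib.util
import sys

# Package name (as installed) -> module name (as imported).
# This table and the helper below are illustrative, not part of the repo.
MAJOR_DEPENDENCIES = {
    "PyTorch": "torch",
    "numpy": "numpy",
    "opencv-python": "cv2",
    "onnxruntime": "onnxruntime",
}


def missing_dependencies(modules=MAJOR_DEPENDENCIES):
    """Return the package names whose module cannot be found."""
    return [
        package
        for package, module in modules.items()
        if importlib.util.find_spec(module) is None
    ]


if sys.version_info < (3,):
    raise SystemExit("This repo uses Python 3.")

missing = missing_dependencies()
if missing:
    print("Missing packages:", ", ".join(missing))
else:
    print("All major dependencies are importable.")
```

Anything reported as missing can then be installed from requirements.txt.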

- Clone this repo

      git clone https://github.com/cleardusk/3DDFA_V2.git
      cd 3DDFA_V2

- Build the Cython version of NMS, and Sim3DR

      sh ./build.sh

- Run demos

      # 1. running on still image, the options include: 2d_sparse, 2d_dense, 3d, depth, pncc, pose, uv_tex, ply, obj
      python3 demo.py -f examples/inputs/emma.jpg --onnx # -o [2d_sparse, 2d_dense, 3d, depth, pncc, pose, uv_tex, ply, obj]

      # 2. running on videos
      python3 demo_video.py -f examples/inputs/videos/214.avi --onnx

      # 3. running on videos smoothly by looking ahead by `n_next` frames
      python3 demo_video_smooth.py -f examples/inputs/videos/214.avi --onnx

      # 4. running on webcam
      python3 demo_webcam_smooth.py --onnx

Tracking is implemented simply by alignment. If the head pose exceeds 90° or the motion is too fast, the alignment may fail. A threshold is used as a simple heuristic to check the tracking state, but this check is unstable.
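The two video behaviours above, look-ahead smoothing over `n_next` frames and the threshold check on the tracking state, can be pictured with the sketch below. It is an illustration under stated assumptions, not the code in `demo_video_smooth.py`. The smoothing is assumed to be a plain mean over a window of neighbouring frames, and the tracking check is assumed to compare the area of the box enclosing the previous landmarks against a threshold. `n_pre`, `min_area` and all function names are hypothetical. Plain Python lists are used so the sketch runs without dependencies.

```python
def smooth_with_lookahead(frames, n_pre=1, n_next=1):
    """Average each frame's landmarks with its neighbours.

    frames: list of frames, each a list of (x, y) landmarks of equal length.
    Assumption: a plain mean over up to n_pre earlier and n_next later frames.
    """
    smoothed = []
    for i in range(len(frames)):
        window = frames[max(0, i - n_pre): i + n_next + 1]
        smoothed.append([
            # zip(*window) groups the same landmark across the window;
            # zip(*same_point) then groups its x values and its y values.
            tuple(sum(axis) / len(window) for axis in zip(*same_point))
            for same_point in zip(*window)
        ])
    return smoothed


def box_from_landmarks(landmarks):
    """Axis-aligned box (x0, y0, x1, y1) enclosing 2D landmarks."""
    xs = [x for x, _ in landmarks]
    ys = [y for _, y in landmarks]
    return min(xs), min(ys), max(xs), max(ys)


def tracking_looks_valid(box, min_area=2000.0):
    """Hypothetical state check: a very small box suggests a lost track.

    min_area is an illustrative value, not the repo's threshold.
    """
    x0, y0, x1, y1 = box
    return (x1 - x0) * (y1 - y0) >= min_area


# Three frames with one landmark each, drifting steadily.
frames = [[(0.0, 0.0)], [(3.0, 6.0)], [(6.0, 12.0)]]
print(smooth_with_lookahead(frames))

# Tracking by alignment: reuse the box around the previous landmarks for the
# next frame, and fall back to face detection when the check fails.
previous_landmarks = [(10.0, 20.0), (50.0, 80.0)]
box = box_from_landmarks(previous_landmarks)
print(box, "track" if tracking_looks_valid(box) else "re-detect")
```

A larger `n_next` gives smoother output, at the cost of delaying each frame until its successors are available.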

Refer to demo.ipynb or google colab for a step-by-step tutorial on running on a still image.

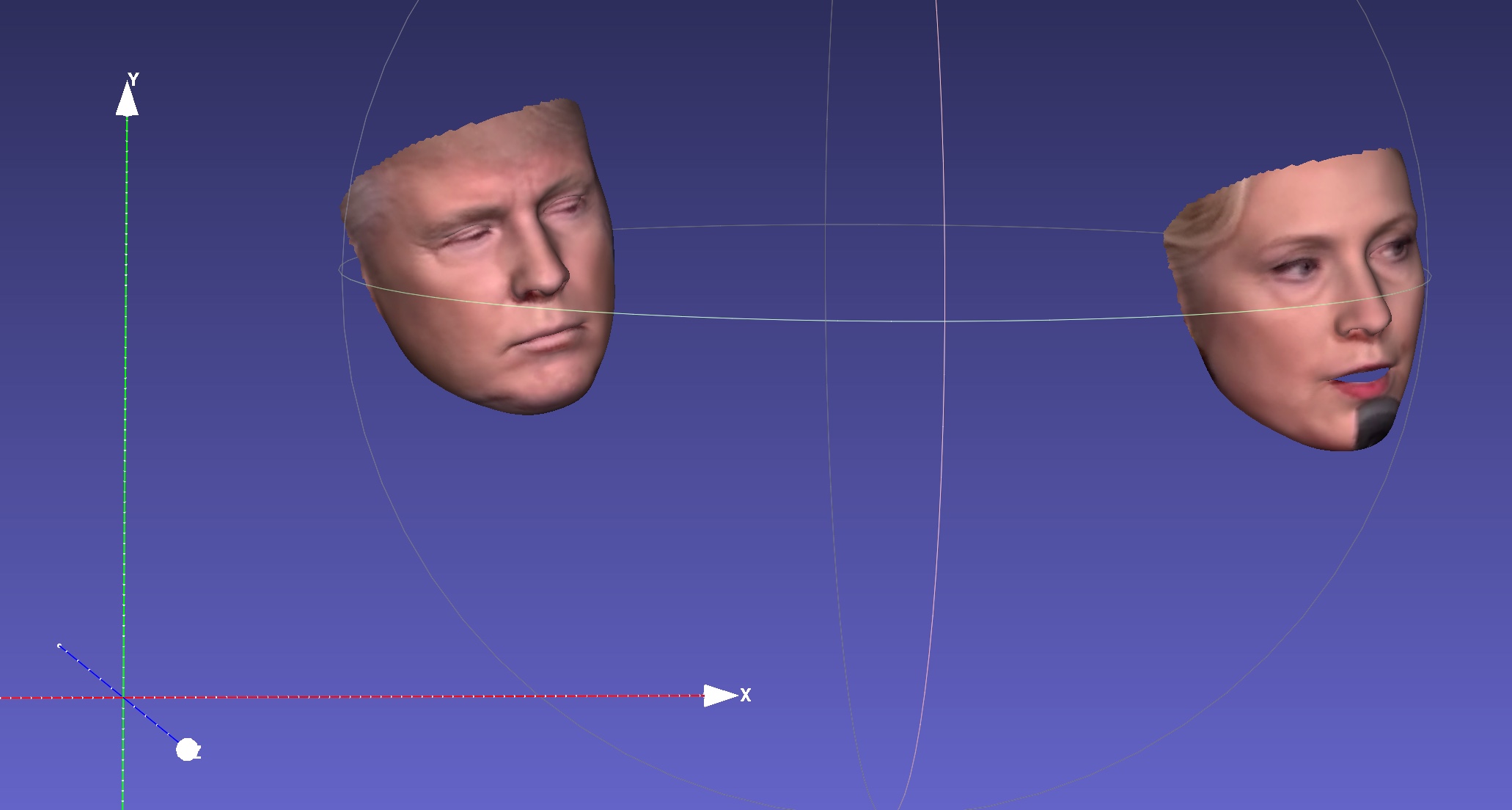

For example, running python3 demo.py -f examples/inputs/emma.jpg -o 3d will give the result below:

Another example:

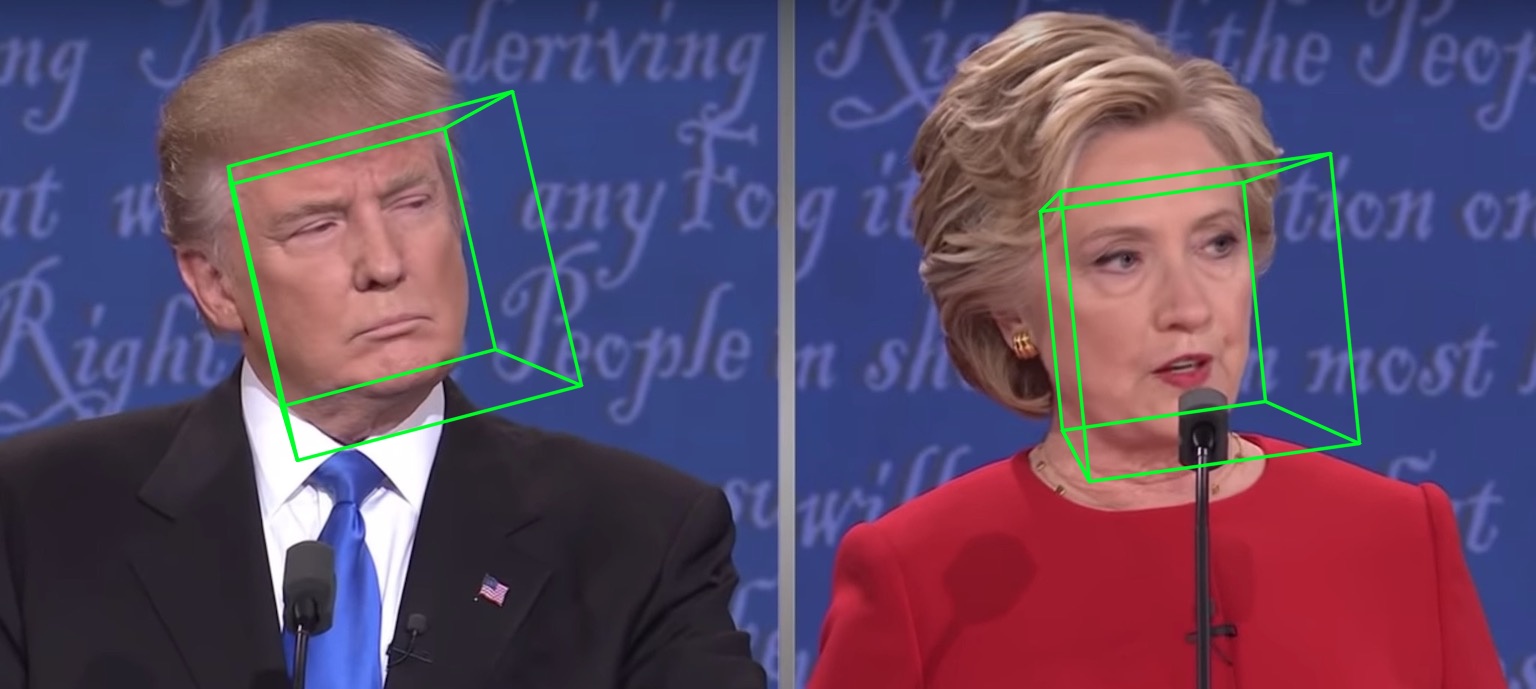

Running on a video will give:

| 2D sparse | 2D dense | 3D |

|---|---|---|

|

|

|

| Depth | PNCC | UV texture |

|

|

|

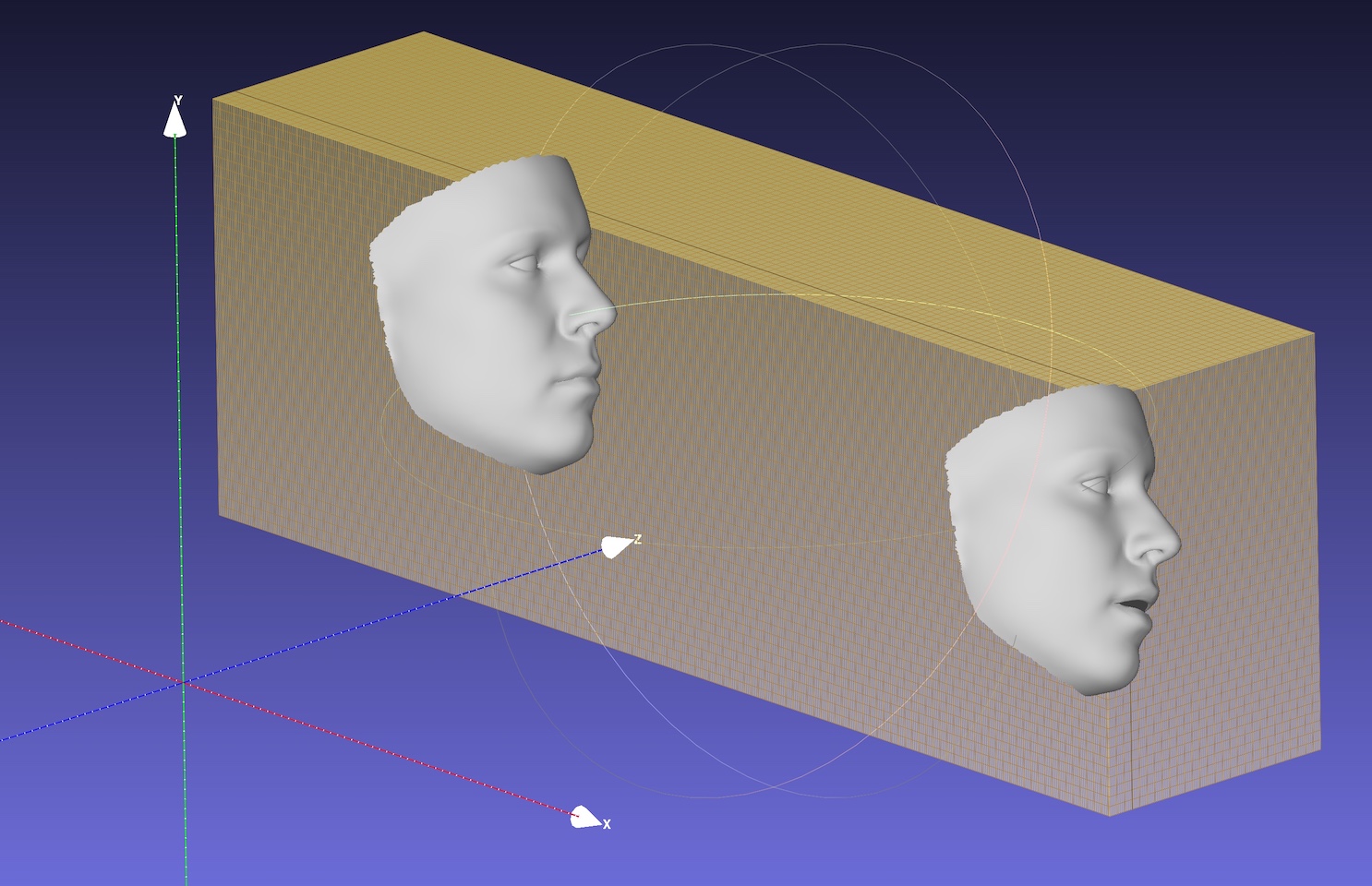

| Pose | Serialization to .ply | Serialization to .obj |

|

|

|
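For the `obj` option, serialization amounts to writing the mesh vertices and triangles as text. The sketch below shows the general Wavefront OBJ layout: `v x y z` lines followed by `f i j k` lines with 1-based indices. `write_obj` is a hypothetical helper, and the repo's own serializer may differ in its details.

```python
import io


def write_obj(stream, vertices, triangles):
    """Write a triangle mesh in Wavefront OBJ text form.

    vertices: iterable of (x, y, z) floats.
    triangles: iterable of (i, j, k) zero-based vertex indices.
    """
    for x, y, z in vertices:
        stream.write(f"v {x:.6f} {y:.6f} {z:.6f}\n")
    for i, j, k in triangles:
        # OBJ face indices start at 1, so shift the zero-based indices.
        stream.write(f"f {i + 1} {j + 1} {k + 1}\n")


# A single triangle, written to an in-memory buffer instead of a file.
buffer = io.StringIO()
write_obj(
    buffer,
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)],
    [(0, 1, 2)],
)
print(buffer.getvalue())
```

Passing an open file object instead of the buffer writes the same text to disk.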

The default backbone is MobileNet_V1 with input size 120x120, and the default pre-trained weight is `weights/mb1_120x120.pth`, as specified in `configs/mb1_120x120.yml`. This repo provides another config, `configs/mb05_120x120.yml`, with a widen factor of 0.5, which is smaller and faster. You can specify the config with the `-c` or `--config` option. The released models are shown in the table below. Note that the inference time in the paper is evaluated using TensorFlow.

| Model | Input | #Params | #Macs | Inference (TF) |
| :-: | :-: | :-: | :-: | :-: |
| MobileNet | 120x120 | 3.27M | 183.5M | ~6.2ms |
| MobileNet x0.5 | 120x120 | 0.85M | 49.5M | ~2.9ms |
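Choosing between the two released configs comes down to the value passed to `-c`/`--config`. A minimal sketch of such an option with `argparse` follows. The parser is illustrative, and its default path is an assumption based on the default backbone described above, so check `demo.py` for the actual definition.

```python
import argparse


def build_parser():
    """Illustrative parser exposing only the config option."""
    parser = argparse.ArgumentParser(description="config selection sketch")
    parser.add_argument(
        "-c", "--config",
        type=str,
        default="configs/mb1_120x120.yml",  # assumed default (MobileNet_V1)
        help="path to the model config",
    )
    return parser


# Select the smaller, faster model (widen factor 0.5).
args = build_parser().parse_args(["-c", "configs/mb05_120x120.yml"])
print(args.config)
```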

Surprisingly, the latency of onnxruntime is much lower, as shown below. The results were measured on my MBP (13-inch MacBook Pro, i5-8259U CPU @ 2.30GHz) with onnxruntime 1.5.1. The number of threads is set via `os.environ["OMP_NUM_THREADS"]`; see `speed_cpu.py` for more details.

| Model \ OMP_NUM_THREADS = | 1 | 2 | 4 |
|---|---|---|---|
| MobileNet | 4.4ms | 2.25ms | 1.35ms |
| MobileNet x0.5 | 1.37ms | 0.7ms | 0.5ms |
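A rough way to take this kind of measurement is sketched below. It is not `speed_cpu.py`: `benchmark` and `dummy_inference` are hypothetical names, and the dummy workload merely stands in for a real single-image model call. The environment variable is set before anything else is imported, on the assumption that the backend reads it once when it is first loaded.

```python
import os

# Assumption: the thread count must be set before the numerical backend
# is imported, because such libraries usually read it once at load time.
os.environ["OMP_NUM_THREADS"] = "4"

import time


def benchmark(fn, n_warmup=10, n_runs=100):
    """Return the mean latency of fn() in milliseconds."""
    for _ in range(n_warmup):  # warm-up runs are excluded from the timing
        fn()
    start = time.perf_counter()
    for _ in range(n_runs):
        fn()
    return (time.perf_counter() - start) * 1000.0 / n_runs


def dummy_inference():
    """Stand-in workload; replace with a real single-image inference call."""
    return sum(i * i for i in range(10_000))


print(f"{benchmark(dummy_inference):.3f} ms per run")
```

Averaging over many runs after a warm-up reduces the influence of one-off costs such as lazy initialization.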

- What is the training data?

  We use 300W-LP for training. Refer to our paper for more details about the training. Since few images in 300W-LP show closed eyes, the eye landmarks are not accurate when the eyes are closed. The eye regions in the webcam demo are also not good.

- Running on Windows.

  You can refer to this comment for building NMS on Windows.

- The FaceBoxes module is modified from FaceBoxes.PyTorch.

- A list of previous works on 3D dense face alignment or reconstruction: 3DDFA, face3d, PRNet.

If your work or research benefits from this repo, please cite the two BibTeX entries below :)

    @inproceedings{guo2020towards,
        title = {Towards Fast, Accurate and Stable 3D Dense Face Alignment},
        author = {Guo, Jianzhu and Zhu, Xiangyu and Yang, Yang and Yang, Fan and Lei, Zhen and Li, Stan Z},
        booktitle = {Proceedings of the European Conference on Computer Vision (ECCV)},
        year = {2020}
    }

    @misc{3ddfa_cleardusk,
        author = {Guo, Jianzhu and Zhu, Xiangyu and Lei, Zhen},
        title = {3DDFA},
        howpublished = {\url{https://github.com/cleardusk/3DDFA}},
        year = {2018}
    }

Jianzhu Guo (郭建珠) [Homepage, Google Scholar]: [email protected] or [email protected].