InstantID: Zero-shot Identity-Preserving Generation in Seconds

InstantID is a new state-of-the-art tuning-free method that achieves ID-preserving generation from only a single image and supports various downstream tasks.

- [2024/1/22] 🔥 We release the pre-trained checkpoints, inference code, and Gradio demo!

- [2024/1/15] 🔥 We release the technical report.

- [2023/12/11] 🔥 We launch the project page.

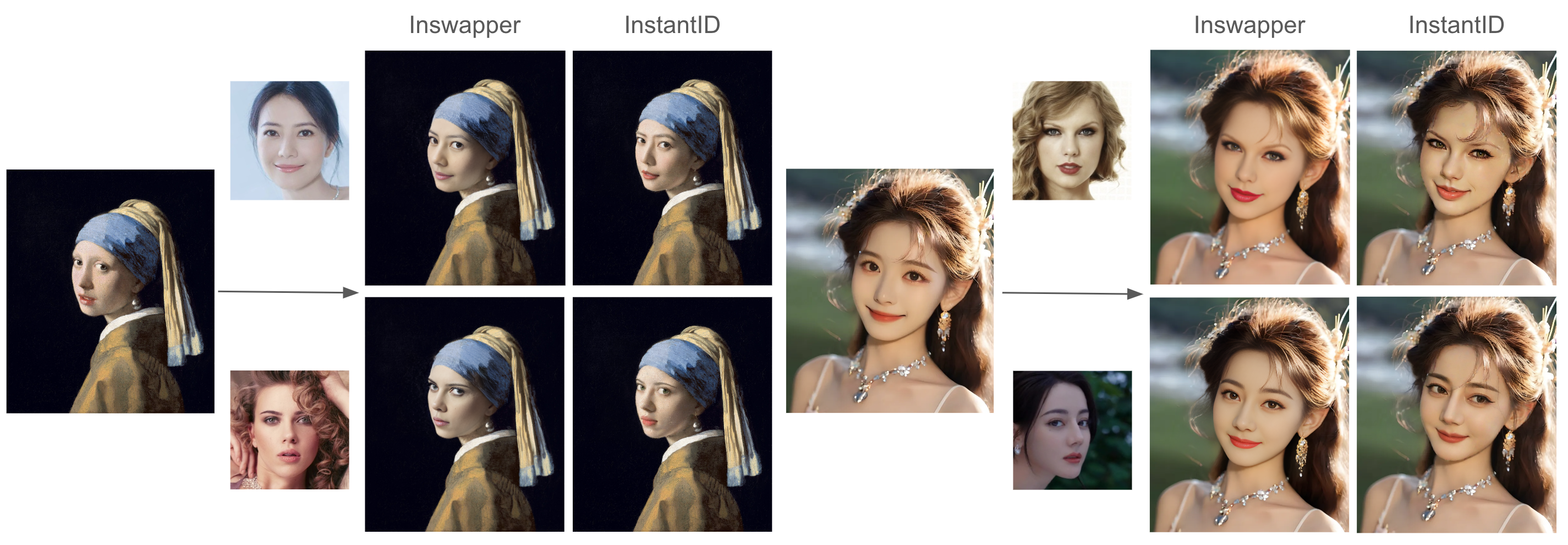

Comparison with existing tuning-free state-of-the-art techniques. InstantID achieves better fidelity and retains good text editability (faces and styles blend better).

Comparison with pre-trained character LoRAs. We don't need multiple images and can still achieve results competitive with LoRAs without any training.

Comparison with InsightFace Swapper (also known as ROOP or Refactor). In non-realistic styles, however, our work is more flexible in integrating the face with the background.

You can download the model directly from Hugging Face, or from a Python script:

```python
from huggingface_hub import hf_hub_download

# Fetch the IdentityNet (ControlNet) config and weights, plus the adapter weights.
hf_hub_download(repo_id="InstantX/InstantID", filename="ControlNetModel/config.json", local_dir="./checkpoints")
hf_hub_download(repo_id="InstantX/InstantID", filename="ControlNetModel/diffusion_pytorch_model.safetensors", local_dir="./checkpoints")
hf_hub_download(repo_id="InstantX/InstantID", filename="ip-adapter.bin", local_dir="./checkpoints")
```

If you cannot access Hugging Face, you can use hf-mirror to download the models:

```bash
export HF_ENDPOINT=https://hf-mirror.com
huggingface-cli download --resume-download InstantX/InstantID --local-dir checkpoints
```

For the face encoder, you need to manually download it via this URL to models/antelopev2, as the default link is invalid. Once you have prepared all the models, the folder tree should look like this:

```
.
├── models
├── checkpoints
├── ip_adapter
├── pipeline_stable_diffusion_xl_instantid.py
└── README.md
```
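Before running inference, it can help to confirm that the downloads landed where the folder tree above expects them. The following is a minimal sketch, not part of the InstantID code: `EXPECTED_PATHS` and `find_missing` are hypothetical names, and the paths are simply the ones used in the download steps above.

```python
from pathlib import Path

# Paths taken from the download steps above (illustrative list, not exhaustive).
EXPECTED_PATHS = [
    "checkpoints/ControlNetModel/config.json",
    "checkpoints/ControlNetModel/diffusion_pytorch_model.safetensors",
    "checkpoints/ip-adapter.bin",
    "models/antelopev2",
]


def find_missing(root="."):
    """Return the expected paths that do not exist under `root`."""
    base = Path(root)
    return [p for p in EXPECTED_PATHS if not (base / p).exists()]


if __name__ == "__main__":
    missing = find_missing()
    if missing:
        print("Missing:", *missing, sep="\n  ")
    else:
        print("All expected model files were found.")
```

Running the script from the repository root lists anything that still needs to be downloaded.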

```python
# !pip install opencv-python transformers accelerate insightface
import diffusers
from diffusers.utils import load_image
from diffusers.models import ControlNetModel

import cv2
import torch
import numpy as np
from PIL import Image

from insightface.app import FaceAnalysis
from pipeline_stable_diffusion_xl_instantid import StableDiffusionXLInstantIDPipeline, draw_kps

# prepare 'antelopev2' under ./models
app = FaceAnalysis(name='antelopev2', root='./', providers=['CUDAExecutionProvider', 'CPUExecutionProvider'])
app.prepare(ctx_id=0, det_size=(640, 640))

# prepare models under ./checkpoints
face_adapter = './checkpoints/ip-adapter.bin'
controlnet_path = './checkpoints/ControlNetModel'

# load IdentityNet
controlnet = ControlNetModel.from_pretrained(controlnet_path, torch_dtype=torch.float16)

pipe = StableDiffusionXLInstantIDPipeline.from_pretrained(
    "stabilityai/stable-diffusion-xl-base-1.0", controlnet=controlnet, torch_dtype=torch.float16
)
pipe.cuda()

# load adapter
pipe.load_ip_adapter_instantid(face_adapter)
```

Then, you can customize your own face images:

```python
# load an image
face_image = load_image("your-example.jpg")

# prepare face emb
face_info = app.get(cv2.cvtColor(np.array(face_image), cv2.COLOR_RGB2BGR))
# only use the largest face (bounding-box width * height)
face_info = sorted(face_info, key=lambda x: (x['bbox'][2] - x['bbox'][0]) * (x['bbox'][3] - x['bbox'][1]))[-1]
face_emb = face_info['embedding']
face_kps = draw_kps(face_image, face_info['kps'])

pipe.set_ip_adapter_scale(0.8)

prompt = "Watercolor painting a man . Vibrant, beautiful, painterly, detailed, textural, artistic"
negative_prompt = "anime, photorealistic, 35mm film, deformed, glitch, low contrast, noisy"

# generate image
image = pipe(
    prompt,
    negative_prompt=negative_prompt,
    image_embeds=face_emb,
    image=face_kps,
    controlnet_conditioning_scale=0.8,
).images[0]
```

- If you're unsatisfied with the similarity, increase the weights of controlnet_conditioning_scale (IdentityNet) and ip_adapter_scale (Adapter).

- If the generated image is over-saturated, decrease ip_adapter_scale. If that does not work, decrease controlnet_conditioning_scale.

- If text control is not as expected, decrease ip_adapter_scale.

- A good base model always makes a difference.
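The tuning tips above can be read as a small decision rule. The sketch below encodes them in a hypothetical helper, `adjust_scales`, which is not part of InstantID; the symptom labels, the 0.1 step, and the clamping at zero are assumptions made purely for illustration.

```python
def adjust_scales(controlnet_scale, adapter_scale, symptom, step=0.1):
    """Suggest new (controlnet_conditioning_scale, ip_adapter_scale) values.

    Hypothetical helper: the symptom labels and step size are illustrative
    assumptions, not values prescribed by InstantID.
    """
    if symptom == "low_similarity":
        # Raise both the IdentityNet and the Adapter weights.
        controlnet_scale += step
        adapter_scale += step
    elif symptom in ("over_saturated", "weak_text_control"):
        # Lower the Adapter weight first.
        adapter_scale -= step
    elif symptom == "still_over_saturated":
        # Lowering the Adapter weight did not help, so lower IdentityNet too.
        controlnet_scale -= step
    else:
        raise ValueError(f"unknown symptom: {symptom!r}")
    # Keep both scales non-negative and round away floating-point noise.
    return (round(max(controlnet_scale, 0.0), 2), round(max(adapter_scale, 0.0), 2))


if __name__ == "__main__":
    print(adjust_scales(0.8, 0.8, "over_saturated"))
```

The returned pair would then be passed to `pipe.set_ip_adapter_scale(...)` and to the `controlnet_conditioning_scale` argument of the pipeline call before generating again.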

- Our work is highly inspired by IP-Adapter and ControlNet. Thanks for their great work!

- Thanks to the HuggingFace team for their generous GPU support!

This project is released under the Apache License and aims to positively impact the field of AI-driven image generation. Users are free to create images with this tool, but they are obligated to comply with local laws and use it responsibly. The developers will not assume any responsibility for potential misuse by users.

If you find InstantID useful for your research and applications, please cite us using this BibTeX:

```bibtex
@article{wang2024instantid,
  title={InstantID: Zero-shot Identity-Preserving Generation in Seconds},
  author={Wang, Qixun and Bai, Xu and Wang, Haofan and Qin, Zekui and Chen, Anthony},
  journal={arXiv preprint arXiv:2401.07519},
  year={2024}
}
```