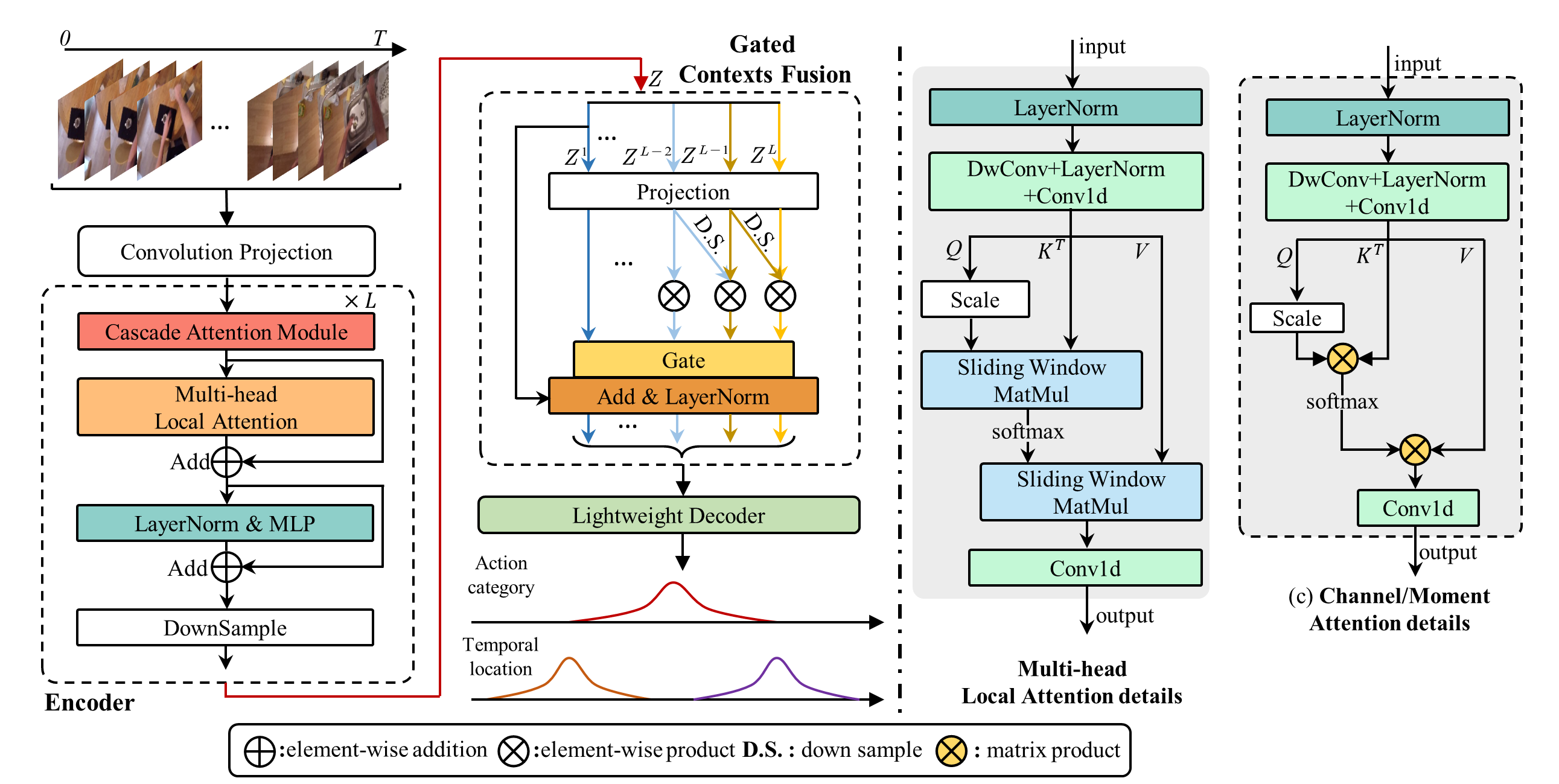

[TMM 2023] TransGMC: Gated Multi-Scale Transformer for Temporal Action Localization

This repository implements TransGMC, published in IEEE Transactions on Multimedia (TMM) 2023. TransGMC achieves an average mAP of 67.5% on THUMOS14, 36.1% on ActivityNet v1.3, 24.9% on EPIC-Kitchens 100, and 23.2% on Ego4D.
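"Average mAP" in temporal action localization is usually the mean of the mAP values computed at several temporal IoU (tIoU) thresholds. The sketch below illustrates that definition. The threshold sets are an assumption based on the common ActionFormer-style evaluation protocol, not values read from this repository's config files, and `temporal_iou` and `average_map` are hypothetical helpers rather than functions from this codebase.

```python
from statistics import mean

# Assumed tIoU thresholds per dataset (common ActionFormer-style protocol, not read from this repo).
TIOU_THRESHOLDS = {
    "thumos14": [0.3, 0.4, 0.5, 0.6, 0.7],
    "activitynet": [round(0.5 + 0.05 * i, 2) for i in range(10)],  # 0.50, 0.55, ..., 0.95
    "epic_kitchens": [0.1, 0.2, 0.3, 0.4, 0.5],
    "ego4d": [0.1, 0.2, 0.3, 0.4, 0.5],
}


def temporal_iou(a: tuple, b: tuple) -> float:
    """Hypothetical helper: IoU of two (start, end) segments on the time axis."""
    inter = max(0.0, min(a[1], b[1]) - max(a[0], b[0]))
    union = (a[1] - a[0]) + (b[1] - b[0]) - inter
    return inter / union if union > 0 else 0.0


def average_map(map_at_tiou: dict) -> float:
    """Hypothetical helper: average mAP is the mean of the per-threshold mAP values."""
    return mean(map_at_tiou.values())


# A prediction counts as a true positive at threshold t only if its tIoU with a ground-truth segment is >= t.
print(temporal_iou((0.0, 10.0), (5.0, 15.0)))  # overlap 5 s, union 15 s
print(average_map({0.3: 0.8, 0.5: 0.6, 0.7: 0.4}))
```

Because each dataset uses a different threshold set, average mAP values are not directly comparable across datasets.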

The code has been tested with the following environment:

| Component | Version |
|---|---|
| Python | 3.10.9 |
| PyTorch | 1.11.0 |
| CUDA | 11.3 |
| GPU | NVIDIA GeForce RTX 3090 |
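A quick way to compare a local setup against the tested versions is sketched below. It is a hypothetical helper, not part of this repository: it reports the Python version, and the PyTorch and CUDA versions only if `torch` is installed. Differences are informational; other versions may work, but they have not been verified here.

```python
import importlib.util
import platform
from typing import Dict, List, Optional

# Tested versions from the environment table (the GPU model is not checked here).
TESTED = {"python": "3.10.9", "torch": "1.11.0", "cuda": "11.3"}


def collect_environment() -> Dict[str, Optional[str]]:
    """Hypothetical helper: gather the local Python / PyTorch / CUDA versions."""
    env: Dict[str, Optional[str]] = {"python": platform.python_version(), "torch": None, "cuda": None}
    if importlib.util.find_spec("torch") is not None:
        import torch

        env["torch"] = str(torch.__version__).split("+")[0]  # drop local tags such as "+cu113"
        env["cuda"] = torch.version.cuda  # None for CPU-only builds
    return env


def mismatches(found: Dict[str, Optional[str]], expected: Dict[str, str] = TESTED) -> List[str]:
    """List every component whose version differs from the tested one."""
    return [f"{k}: found {found.get(k)}, tested {v}" for k, v in expected.items() if found.get(k) != v]


if __name__ == "__main__":
    for line in mismatches(collect_environment()):
        print(line)
```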

Please refer to ActionFormer for more details.

- Training (taking `epic_slowfast_verb` as an example):

```shell
python ./train.py --config ./configs/epic_slowfast_verb.yaml --output model
```

- Evaluation (taking `epic_slowfast_verb` as an example):

```shell
python ./eval.py ./configs/epic_slowfast_verb.yaml ./ckpt/epic_slowfast_verb_model/
```

Pretrained models:

| Dataset | Model |
|---|---|
| Epic Kitchens (verb) | google drive download link |
| Epic Kitchens (noun) | google drive download link |
| Ego4D (S+O+E) | google drive download link |
| Thumos14 | google drive download link |
| ActivityNet v1.3 (I3D) | google drive download link |
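In the training and evaluation commands, the checkpoint folder `./ckpt/epic_slowfast_verb_model/` matches the config name `epic_slowfast_verb` joined with the `--output model` value. The sketch below assumes that naming convention, which is inherited from ActionFormer, with an output root of `./ckpt`. It builds matching command lines for any config. `checkpoint_dir` and `train_and_eval_commands` are hypothetical helpers; if this repository's configs set a different output folder, adjust `output_folder` accordingly.

```python
from pathlib import Path
from typing import List, Tuple


def checkpoint_dir(config_path: str, output: str, output_folder: str = "./ckpt") -> Path:
    """Assumed convention: <output_folder>/<config file stem>_<--output value>."""
    return Path(output_folder) / f"{Path(config_path).stem}_{output}"


def train_and_eval_commands(config_path: str, output: str) -> Tuple[List[str], List[str]]:
    """Build argument lists (e.g. for subprocess.run) mirroring the README commands."""
    ckpt = checkpoint_dir(config_path, output)
    train = ["python", "./train.py", "--config", config_path, "--output", output]
    evaluate = ["python", "./eval.py", config_path, f"./{ckpt.as_posix()}/"]
    return train, evaluate


train_cmd, eval_cmd = train_and_eval_commands("./configs/epic_slowfast_verb.yaml", "model")
print(" ".join(train_cmd))
print(" ".join(eval_cmd))
```

Keeping the two commands derived from the same `(config, output)` pair avoids evaluating a checkpoint folder that a different training run produced.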

If you use our code, please consider citing the following papers.

```bibtex
@article{yang2023gated,
  title={Gated Multi-Scale Transformer for Temporal Action Localization},
  author={Yang, Jin and Wei, Ping and Ren, Ziyang and Zheng, Nanning},
  journal={IEEE Transactions on Multimedia},
  year={2023},
  publisher={IEEE}
}

@inproceedings{zhang2022actionformer,
  title={ActionFormer: Localizing Moments of Actions with Transformers},
  author={Zhang, Chen-Lin and Wu, Jianxin and Li, Yin},
  booktitle={European Conference on Computer Vision},
  series={LNCS},
  volume={13664},
  pages={492-510},
  year={2022}
}
```