FLGo is a strong and reusable experimental platform for research on federated learning (FL) algorithms. It provides easy-to-use modules for anyone who wants to run various federated learning experiments.

- Install flgo

```
pip install flgo
```

If the package is not found, please use the command below to update pip:

```
pip install --upgrade pip
```

- Create Your First Federated Task

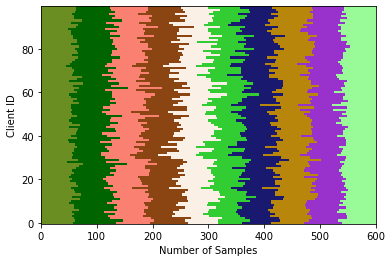

Here we take the classical federated benchmark, Federated MNIST, as an example: the MNIST dataset is split into 100 parts in an IID (independent and identically distributed) manner.

```python
import flgo
import os

# the target path of the task
task_path = './my_first_task'

# create the task configuration
task_config = {
    'benchmark': {'name': 'flgo.benchmark.mnist_classification'},
    'partitioner': {'name': 'IIDPartitioner', 'para': {'num_clients': 100}},
}

# generate the task if it doesn't exist yet
if not os.path.exists(task_path):
    flgo.gen_task(task_config, task_path)
```

After running the code above, a federated dataset is created in task_path. A visualization of the task is stored in task_path/res.png.
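Conceptually, an IID partition shuffles the sample indices and deals them out evenly among the clients. The sketch below illustrates only that idea in plain Python. `iid_partition` is a hypothetical helper, not FLGo's `IIDPartitioner`, whose exact behaviour (for example, how leftover samples are handled) may differ.

```python
import random
from typing import List


def iid_partition(num_samples: int, num_clients: int, seed: int = 0) -> List[List[int]]:
    """Hypothetical IID split: shuffle all indices, then deal them out round-robin."""
    rng = random.Random(seed)
    indices = list(range(num_samples))
    rng.shuffle(indices)
    # client k takes every num_clients-th shuffled index starting at position k,
    # so client sizes differ by at most one sample
    return [indices[k::num_clients] for k in range(num_clients)]
```

For 60,000 samples (the size of the MNIST training set), `iid_partition(60000, 100)` gives each of the 100 clients 600 indices.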

- Run FedAvg to Train Your Model

```python
import flgo
import flgo.algorithm.fedavg as fedavg

# create a fedavg runner on the task generated above (task_path)
runner = flgo.init(task_path, fedavg, {'gpu': [0,], 'log_file': True, 'num_steps': 5})
runner.run()
```

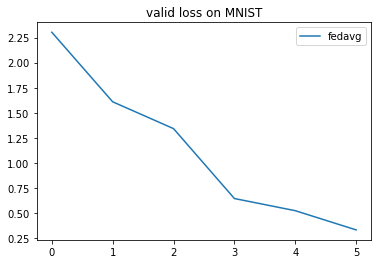

- Show Training Result

The training result is saved as a record under the directory of the task, task_path/record. We use the built-in analyzer to read and show it.

```python
import flgo.experiment.analyzer

# create the analysis plan
analysis_plan = {
    'Selector': {'task': task_path, 'header': ['fedavg',]},
    'Painter': {'Curve': [{'args': {'x': 'communication_round', 'y': 'val_loss'}}]},
    'Table': {'min_value': [{'x': 'val_loss'}]},
}

flgo.experiment.analyzer.show(analysis_plan)
```

This plots the validation loss against the communication round and prints a table with the minimal validation loss.
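As a rough illustration of what the `min_value` table entry reports, the sketch below takes per-round metrics and returns the smallest value and its position. The record layout used here (a dict of per-round lists keyed by metric name) and the `min_value` helper are assumptions for illustration, not FLGo's documented record format or analyzer code.

```python
from typing import Dict, List, Tuple


def min_value(record: Dict[str, List[float]], key: str) -> Tuple[float, int]:
    """Return (smallest value, its position) for metric `key` in a hypothetical record."""
    values = record[key]
    best = min(range(len(values)), key=lambda i: values[i])
    return values[best], best


# toy numbers for illustration only, not real training results
record = {
    "communication_round": [0, 1, 2, 3],
    "val_loss": [2.3, 0.9, 0.5, 0.6],
}
loss, pos = min_value(record, "val_loss")
print(f"min val_loss = {loss} at round {record['communication_round'][pos]}")
```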

| Method | Reference | Publication | Tag |
|---|---|---|---|
| FedAvg | [McMahan et al., 2017] | AISTATS 2017 | |
| FedAsync | [Cong Xie et al., 2019] | arXiv | Asynchronous |
| FedBuff | [John Nguyen et al., 2022] | AISTATS 2022 | Asynchronous |
| TiFL | [Zheng Chai et al., 2020] | HPDC 2020 | Communication efficiency, responsiveness |
| AFL | [Mehryar Mohri et al., 2019] | ICML 2019 | Fairness |
| FedFv | [Zheng Wang et al., 2021] | IJCAI 2021 | Fairness |
| FedMgda+ | [Zeou Hu et al., 2022] | IEEE TNSE 2022 | Fairness, robustness |
| FedProx | [Tian Li et al., 2020] | MLSys 2020 | Non-I.I.D., incomplete training |
| Mifa | [Xinran Gu et al., 2021] | NeurIPS 2021 | Client availability |
| PowerofChoice | [Yae Jee Cho et al., 2020] | arXiv | Biased sampling, fast convergence |
| QFedAvg | [Tian Li et al., 2020] | ICLR 2020 | Communication efficiency, fairness |
| Scaffold | [Sai Praneeth Karimireddy et al., 2020] | ICML 2020 | Non-I.I.D., communication capacity |

Basic options:

- `task` selects the task (i.e. the split dataset). Options: the name of a fedtask (e.g. `mnist_classification_client100_dist0_beta0_noise0`).
- `algorithm` selects the FL algorithm. Options: `fedfv`, `fedavg`, `fedprox`, …
- `model` selects the model, which should correspond to the dataset. Options: `mlp`, `cnn`, `resnet18`.

Server-side options:

- `sample` decides how clients are sampled in each round. Options: `uniform` samples clients uniformly, `md` chooses clients according to a probability distribution.
- `aggregate` decides how the clients' models are aggregated. Options: `uniform`, `weighted_scale`, `weighted_com`.
- `num_rounds` is the number of communication rounds.
- `proportion` is the proportion of clients selected in each round.
- `lr_scheduler` is the global learning rate scheduler.
- `learning_rate_decay` is the decay rate of the learning rate.
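To make the `sample` and `aggregate` options more concrete, here is a minimal sketch of uniform vs. probability-based client sampling and of uniform vs. weighted averaging, with each model represented as a flat list of floats. The assumptions are ours: `md` is taken to mean sampling with replacement with probability proportional to local data size, the number of selected clients is `round(proportion * num_clients)` (at least 1), and `"weighted"` is a hypothetical mode name standing in for data-size weighting. The exact formulas behind `weighted_scale` and `weighted_com` are not described here and are not reproduced.

```python
import random
from typing import List, Sequence


def sample_clients(num_clients: int, proportion: float, data_sizes: Sequence[int],
                   mode: str = "uniform", seed: int = 0) -> List[int]:
    """Select max(1, round(proportion * num_clients)) client ids (assumed rule)."""
    rng = random.Random(seed)
    k = max(1, int(round(proportion * num_clients)))
    if mode == "uniform":
        # every client is equally likely and no client is picked twice
        return sorted(rng.sample(range(num_clients), k))
    if mode == "md":
        # assumption: probability proportional to local data size, with replacement
        return sorted(rng.choices(range(num_clients), weights=data_sizes, k=k))
    raise ValueError(f"unknown sampling mode: {mode}")


def aggregate(models: List[List[float]], weights: Sequence[float],
              mode: str = "uniform") -> List[float]:
    """Average flat parameter vectors, either uniformly or by normalized weights."""
    if mode == "uniform":
        coeffs = [1.0 / len(models)] * len(models)
    elif mode == "weighted":  # hypothetical name for data-size weighting
        total = float(sum(weights))
        coeffs = [w / total for w in weights]
    else:
        raise ValueError(f"unknown aggregation mode: {mode}")
    return [sum(c * m[i] for c, m in zip(coeffs, models)) for i in range(len(models[0]))]
```

With `proportion=0.2` and 100 clients, `sample_clients` returns 20 distinct ids in `uniform` mode, while `md` mode may return repeated ids for clients that hold more data.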

Client-side options:

- `num_epochs` is the number of local training epochs.
- `num_steps` is the number of local updating steps; the default value is -1. If this term is set larger than 0, `num_epochs` is ignored.
- `learning_rate` is the step size for local training.
- `batch_size` is the size of one batch during local training: `batch_size = full_batch` if `batch_size == -1`, and `batch_size = |Di| * batch_size` if `0 < batch_size < 1`, where `|Di|` is the size of client i's local dataset.
- `optimizer` selects the optimizer. Options: `SGD`, `Adam`.
- `weight_decay` sets the weight decay ratio during local training.
- `momentum` is the momentum ratio used by the SGD optimizer at each step.
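The `batch_size` and `num_steps` rules above can be written out as follows. These are hypothetical helpers, not FLGo code. Two details are our assumptions: fractional batch sizes are rounded down (with a minimum of 1), and when `num_steps` is not positive the number of local steps is derived as `num_epochs * ceil(|Di| / batch_size)`.

```python
import math


def resolve_batch_size(batch_size: float, local_data_size: int) -> int:
    """Map the batch_size option to a concrete batch size for a client with |Di| samples."""
    if batch_size == -1:
        return local_data_size                            # full batch
    if 0 < batch_size < 1:
        return max(1, int(local_data_size * batch_size))  # fraction of |Di| (rounding assumed)
    return int(batch_size)                                # absolute batch size


def resolve_local_steps(num_steps: int, num_epochs: int, batch_size: int,
                        local_data_size: int) -> int:
    """num_steps > 0 overrides num_epochs; otherwise convert epochs to steps (assumed formula)."""
    if num_steps > 0:
        return num_steps
    return num_epochs * math.ceil(local_data_size / batch_size)
```

For a client with 600 samples, `batch_size=0.1` resolves to 60, and two epochs with a batch size of 64 give `2 * ceil(600 / 64) = 20` steps.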

Machine-dependent options:

- `seed` is the initial random seed.
- `gpu` is the id of the GPU device. The CPU is used if this term is not specified; `--gpu 0` uses GPU 0, and `--gpu 0 1 2 3` uses the four specified GPUs when `num_threads>0`.
- `server_with_cpu` is set to `False` by default, ..
- `test_batch_size` is the batch size used when evaluating models on validation datasets; it is limited by the free memory of the device in use.
- `eval_interval` controls the interval between two consecutive evaluations.
- `num_threads` is the number of threads used for client-side computation, which aims to accelerate the training process.
- `num_workers` is the number of workers of the `torch.utils.data.DataLoader`.

Additional hyper-parameters for particular federated algorithms:

`algo_para` is used to receive algorithm-dependent hyper-parameters from the command line. Usage: 1) if this term is not specified, the hyper-parameters are set to the default values defined in `Server.__init__()`; 2) for algorithms with one or more parameters, use `--algo_para v1 v2 ...` to specify their values. The input order follows the dict `Server.algo_para` defined in `Server.__init__()`.
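The positional `--algo_para` convention can be mimicked with `argparse`, as in the sketch below. `parse_algo_para` and the example defaults (a single `mu` entry) are hypothetical, and casting every value to `float` is a simplification. The point is only that values are matched to hyper-parameter names in the insertion order of the dict and that the defaults are kept when the flag is omitted.

```python
import argparse
from typing import Dict, List, Optional


def parse_algo_para(defaults: Dict[str, float],
                    argv: Optional[List[str]] = None) -> Dict[str, float]:
    """Fill an ordered dict of algorithm hyper-parameters from --algo_para v1 v2 ..."""
    parser = argparse.ArgumentParser()
    parser.add_argument("--algo_para", nargs="*", type=float, default=None)
    args, _ = parser.parse_known_args(argv)
    params = dict(defaults)  # keep the defaults when --algo_para is absent
    if args.algo_para is None:
        return params
    if len(args.algo_para) != len(defaults):
        raise ValueError(f"expected {len(defaults)} values, got {len(args.algo_para)}")
    # dicts preserve insertion order, so the i-th value goes to the i-th key
    for name, value in zip(defaults, args.algo_para):
        params[name] = value
    return params
```

For example, `parse_algo_para({'mu': 0.1}, ['--algo_para', '0.01'])` returns `{'mu': 0.01}`, while an empty argument list keeps `{'mu': 0.1}`.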

Logger settings:

- `logger` selects the logger with the same name as this term.
- `log_level` has the same meaning as LEVEL in Python's native `logging` module.
- `log_file` controls whether to store the running-time information in a `.log` file under `fedtask/taskname/log/`; the default value is false.
- `no_log_console` controls whether to turn off printing the running-time information to the console; the default value is false.
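A rough equivalent of these logger options using Python's standard `logging` module is sketched below. `build_logger` is a hypothetical helper, and the `<name>.log` file name and the `log_dir` argument are assumptions rather than FLGo's exact file layout.

```python
import logging
import os


def build_logger(name: str, log_level: str = "INFO", log_file: bool = False,
                 no_log_console: bool = False, log_dir: str = "log") -> logging.Logger:
    """Configure a logger in the spirit of log_level / log_file / no_log_console."""
    logger = logging.getLogger(name)
    logger.setLevel(getattr(logging, log_level.upper()))  # e.g. "INFO" -> logging.INFO
    logger.handlers.clear()                                # avoid duplicate handlers on re-use
    fmt = logging.Formatter("%(asctime)s %(levelname)s %(message)s")
    if log_file:
        # write running-time information to a .log file
        os.makedirs(log_dir, exist_ok=True)
        fh = logging.FileHandler(os.path.join(log_dir, f"{name}.log"))
        fh.setFormatter(fmt)
        logger.addHandler(fh)
    if not no_log_console:
        # print running-time information to the console (stderr)
        sh = logging.StreamHandler()
        sh.setFormatter(fmt)
        logger.addHandler(sh)
    return logger
```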

To get more information and a full understanding of FLGo, please refer to our website.

On the website, we offer:

- API docs: a detailed introduction of packages, classes and methods.

- Tutorial: materials that help users master FLGo.

We separate the FL system into the following parts: algorithm, benchmark, experiment, fedtask, system_simulator and utils.

```
├─ algorithm
│  ├─ fedavg.py                          //fedavg algorithm
│  ├─ ...
│  ├─ fedasync.py                        //the base class for asynchronous federated algorithms
│  └─ fedbase.py                         //the base class for federated algorithms
├─ benchmark
│  ├─ mnist_classification               //classification on the mnist dataset
│  │  ├─ model                           //the corresponding model
│  │  └─ core.py                         //the core support for the dataset; each contains three necessary classes (e.g. TaskGen, TaskReader, TaskCalculator)
│  ├─ ...
│  ├─ RAW_DATA                           //storing the downloaded raw dataset
│  └─ toolkits                           //the basic tools for generating federated datasets
│     ├─ cv                              //common federated partitioning for CV
│     │  ├─ horizontal                   //horizontal fedtask
│     │  │  └─ image_classification.py   //the base class for image classification
│     │  └─ ...
│     ├─ ...
│     ├─ base.py                         //the base class for all fedtasks
│     ├─ partition.py                    //the partition classes for federated partitioning
│     └─ visualization.py                //visualization after the dataset is partitioned
├─ experiment
│  ├─ logger                             //the classes that record the experimental process
│  │  ├─ basic_logger.py                 //the base logger class
│  │  └─ simple_logger.py                //a simple logger class
│  ├─ analyzer.py                        //the class for analyzing and printing experimental results
│  ├─ res_config.yml                     //hyperparameter file of analyzer.py
│  ├─ run_config.yml                     //hyperparameter file of runner.py
│  └─ runner.py                          //the class for generating experimental commands based on hyperparameter combinations and for process scheduling of all experiments
├─ system_simulator                      //system heterogeneity simulation module
│  ├─ base.py                            //the base class for simulating system heterogeneity
│  ├─ default_simulator.py               //the default class for simulating system heterogeneity
│  └─ ...
├─ utils
│  ├─ fflow.py                           //options to read, initialize,...
│  └─ fmodule.py                         //model-level operators
└─ requirements.txt
```

We have added many benchmarks covering several different areas, such as CV, NLP, graph, recommendation, time series, tabular and synthetic data:

| Task | Scenario | Setting | Datasets |
|---|---|---|---|
| CV | Classification | Horizontal & Vertical | CIFAR10/100, MNIST, FashionMNIST, FEMNIST, EMNIST, SVHN |
| | Detection | Horizontal | Coco, VOC |
| | Segmentation | Horizontal | Coco, SBDataset |
| NLP | Classification | Horizontal | Sentiment140, AG_NEWS, sst2 |
| | Text Prediction | Horizontal | Shakespeare, Reddit |
| | Translation | Horizontal | Multi30k |
| Graph | Node Classification | Horizontal | Cora, Citeseer, Pubmed |
| | Link Prediction | Horizontal | Cora, Citeseer, Pubmed |
| | Graph Classification | Horizontal | Enzymes, Mutag |
| Recommendation | Rating Prediction | Horizontal & Vertical | Ciao, Movielens, Epinions, Filmtrust, Douban |
| Series | Time series forecasting | Horizontal | Electricity, Exchange Rate |
| Tabular | Classification | Horizontal | Adult, Bank Marketing |
| Synthetic | Regression | Horizontal | Synthetic, DistributedQP, CUBE |

We define each task as a combination of a federated dataset with a particular distribution and the experimental results on it. The raw dataset is processed into a .json file, following LEAF (https://github.com/TalwalkarLab/leaf). The structure of the data.json file is described below; it either stores the raw data ('XY') or stores the indices of the data in the original dataset ('IDX'):

```
# store the raw data
{
    'store': 'XY',
    'client_names': ['user0', ..., 'user99'],
    'user0': {
        'dtrain': {'x': [...], 'y': [...]},
        'dvalid': {'x': [...], 'y': [...]},
    },
    ...,
    'user99': {
        'dtrain': {'x': [...], 'y': [...]},
        'dvalid': {'x': [...], 'y': [...]},
    },
    'dtest': {'x': [...], 'y': [...]}
}
```
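For the `'XY'` layout, a loader only has to walk `client_names` and pull out each client's splits. The sketch below builds a tiny two-client task in memory, serializes it as JSON and reads it back. `load_xy_task` is a hypothetical helper, not FLGo's TaskReader, and the sample values are placeholders.

```python
import json
from typing import Dict, List, Tuple

Split = Dict[str, List]


def load_xy_task(text: str) -> Tuple[Dict[str, Tuple[Split, Split]], Split]:
    """Parse a data.json string stored in 'XY' mode into per-client (train, valid) splits."""
    data = json.loads(text)
    if data["store"] != "XY":
        raise ValueError("expected the 'XY' storage mode")
    clients = {name: (data[name]["dtrain"], data[name]["dvalid"])
               for name in data["client_names"]}
    return clients, data["dtest"]


# a toy task with two clients (placeholder values)
example = {
    "store": "XY",
    "client_names": ["user0", "user1"],
    "user0": {"dtrain": {"x": [[0.1], [0.2]], "y": [0, 1]}, "dvalid": {"x": [[0.3]], "y": [1]}},
    "user1": {"dtrain": {"x": [[0.4]], "y": [0]}, "dvalid": {"x": [[0.5]], "y": [0]}},
    "dtest": {"x": [[0.6]], "y": [1]},
}
clients, test = load_xy_task(json.dumps(example))
```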

```
# store the index of data in the original dataset
{
    'store': 'IDX',
    'datasrc': {
        'class_path': 'torchvision.datasets',
        'class_name': dataset_class_name,
        'train_args': {
            'root': "str(raw_data_path)",
            ...
        },
        'test_args': {
            'root': "str(raw_data_path)",
            ...
        }
    },
    'client_names': ['user0', ..., 'user99'],
    'user0': {
        'dtrain': [...],
        'dvalid': [...],
    },
    ...,
    'dtest': [...]
}
```
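In the `'IDX'` layout only indices are stored, so the samples have to be looked up in the original dataset, which `datasrc` presumably identifies by importing `class_name` from `class_path` and instantiating it with `train_args`/`test_args`. The sketch below shows the dynamic import with `importlib`, but uses plain Python lists as stand-ins for the raw datasets. `load_class` and `resolve_idx_task` are hypothetical helpers; treating `dvalid` as indices into the training set and `dtest` as indices into the test set is our assumption.

```python
import importlib
from typing import Any, Dict, List, Sequence, Tuple


def load_class(class_path: str, class_name: str) -> Any:
    """Look up a class from the 'datasrc' strings, e.g. ('torchvision.datasets', 'MNIST')."""
    return getattr(importlib.import_module(class_path), class_name)


def resolve_idx_task(task: Dict[str, Any], train_set: Sequence[Any], test_set: Sequence[Any]
                     ) -> Tuple[Dict[str, Tuple[List[Any], List[Any]]], List[Any]]:
    """Turn index lists into concrete samples (assumes dtrain/dvalid index the training set)."""
    if task["store"] != "IDX":
        raise ValueError("expected the 'IDX' storage mode")
    clients = {
        name: ([train_set[i] for i in task[name]["dtrain"]],
               [train_set[i] for i in task[name]["dvalid"]])
        for name in task["client_names"]
    }
    test = [test_set[i] for i in task["dtest"]]
    return clients, test


# toy stand-ins for the raw training and test sets
train_set = ["a", "b", "c", "d", "e"]
test_set = ["t0", "t1"]
task = {
    "store": "IDX",
    "client_names": ["user0", "user1"],
    "user0": {"dtrain": [0, 2], "dvalid": [4]},
    "user1": {"dtrain": [1], "dvalid": [3]},
    "dtest": [0, 1],
}
clients, test = resolve_idx_task(task, train_set, test_set)
```

Storing indices instead of raw samples keeps data.json small for large image datasets, at the cost of needing the raw dataset at load time.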

Run the file ./generate_fedtask.py to get the split dataset (.json file).

Since task-specific models are usually orthogonal to the FL algorithms, we don't consider them a core part of this system. The model and the basic loss function are defined in ./task/dataset_name/model_name.py. Further details are described in fedtask/README.md.


The algorithm module contains the implementations of specific federated learning algorithms. Each method contains two classes: the Server and the Client.

The whole FL system starts with main.py, which runs server.run() after initialization. The server then repeats the method iterate() for num_rounds times, which simulates the communication process in FL. In iterate(), the BaseServer starts by sampling clients with select(), then exchanges model parameters with them through communicate(), and finally aggregates the different models into a new one with aggregate(). Therefore, anyone who wants to customize their own method with specific server-side operations should rewrite iterate() and the particular methods mentioned above.
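The control flow just described can be sketched with toy classes. Everything below is hypothetical and heavily simplified (a "model" is a single float, and `communicate()` simply calls each selected client's `reply()`); it mirrors the select, communicate, aggregate order inside `iterate()`, not FLGo's actual server code.

```python
import random
from typing import Dict, List


class ToyClient:
    """Stand-in client whose local optimum is `target`."""

    def __init__(self, target: float):
        self.target = target

    def reply(self, package: Dict[str, float]) -> Dict[str, float]:
        # one crude "local training" step: move halfway toward the local optimum
        model = package["model"]
        return {"model": model + 0.5 * (self.target - model)}


class ToyServer:
    def __init__(self, clients: List[ToyClient], num_rounds: int, proportion: float, seed: int = 0):
        self.clients = clients
        self.num_rounds = num_rounds
        self.proportion = proportion
        self.model = 0.0
        self.rng = random.Random(seed)

    def run(self) -> float:
        for _ in range(self.num_rounds):  # repeat iterate() num_rounds times
            self.iterate()
        return self.model

    def iterate(self) -> None:
        selected = self.select()
        models = self.communicate(selected)
        self.model = self.aggregate(models)

    def select(self) -> List[int]:
        # sample a proportion of the clients uniformly without replacement
        k = max(1, int(self.proportion * len(self.clients)))
        return self.rng.sample(range(len(self.clients)), k)

    def communicate(self, selected: List[int]) -> List[float]:
        return [self.clients[cid].reply({"model": self.model})["model"] for cid in selected]

    def aggregate(self, models: List[float]) -> float:
        return sum(models) / len(models)  # uniform averaging
```

With clients targeting 1.0, 2.0 and 3.0 and full participation, the global model converges toward their mean, 2.0. Customizing a method then amounts to subclassing and overriding `iterate()` or any of the three steps.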

Clients respond to the server after the server calls communicate_with() on them: each client first unpack()s the received package and then trains the model on its local dataset with train(). After training, the client pack()s the package to be sent (e.g. parameters, loss, gradient, ...) and sends it to the server through reply().
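Seen from the client side, the same exchange is unpack, train, pack, reply. The sketch below uses a hypothetical client that fits a one-parameter model to its local data by gradient descent on the mean squared error; the class and the package keys (`"model"`, `"loss"`) are illustrative assumptions, not FLGo's client API.

```python
from typing import Any, Dict, List


class LeastSquaresClient:
    def __init__(self, data: List[float], learning_rate: float = 0.1, num_steps: int = 5):
        self.data = data
        self.learning_rate = learning_rate
        self.num_steps = num_steps

    def unpack(self, package: Dict[str, Any]) -> float:
        # extract the global model sent by the server
        return package["model"]

    def train(self, model: float) -> float:
        # gradient descent on 0.5 * mean((model - x)^2) over the local data
        for _ in range(self.num_steps):
            grad = sum(model - x for x in self.data) / len(self.data)
            model -= self.learning_rate * grad
        return model

    def pack(self, model: float) -> Dict[str, Any]:
        # bundle the updated model with its local loss
        loss = 0.5 * sum((model - x) ** 2 for x in self.data) / len(self.data)
        return {"model": model, "loss": loss}

    def reply(self, package: Dict[str, Any]) -> Dict[str, Any]:
        return self.pack(self.train(self.unpack(package)))
```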

The experiment module handles experiment command generation and scheduling, which helps FL researchers conduct experiments more conveniently.

The system_simulator module simulates heterogeneous systems. It sets multiple states, such as network speed and availability, to better simulate the system heterogeneity of federated learning participants.
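One simple way to model the two states mentioned, availability and network speed, is sketched below. The per-client availability probability, the bandwidth values and the latency formula (model size divided by upload bandwidth, with a synchronous round waiting for its slowest client) are illustrative assumptions, not the behaviour of `default_simulator.py`.

```python
import random
from dataclasses import dataclass
from typing import List


@dataclass
class ClientState:
    availability: float    # probability of being online in a given round (assumed)
    bandwidth_mbps: float  # upload speed in megabits per second (assumed)


def available_clients(states: List[ClientState], rng: random.Random) -> List[int]:
    """Each client is independently online with its own probability."""
    return [cid for cid, s in enumerate(states) if rng.random() < s.availability]


def upload_time(model_size_mb: float, state: ClientState) -> float:
    """Seconds to upload a model of model_size_mb megabytes (8 bits per byte)."""
    return model_size_mb * 8 / state.bandwidth_mbps


def round_latency(selected: List[int], states: List[ClientState], model_size_mb: float) -> float:
    """A synchronous round waits for the slowest selected client."""
    return max(upload_time(model_size_mb, states[cid]) for cid in selected)
```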

The utils module is composed of commonly used operations, such as model-level operations (we convert model layers and parameters into dictionaries and apply them throughout the FL system).
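To illustrate what model-level operations on dictionaries look like, the sketch below treats a model as a mapping from parameter names to flat lists of floats and defines addition, scaling and averaging on it. The helper names and the list-based representation are hypothetical; `fmodule.py` works with real model objects, and its functions are not reproduced here.

```python
from typing import Dict, List

Params = Dict[str, List[float]]


def model_add(a: Params, b: Params) -> Params:
    """Element-wise sum of two models with identical layer names and shapes."""
    return {name: [x + y for x, y in zip(a[name], b[name])] for name in a}


def model_scale(a: Params, c: float) -> Params:
    """Multiply every parameter by the scalar c."""
    return {name: [c * x for x in values] for name, values in a.items()}


def model_average(models: List[Params]) -> Params:
    """Uniform average, built only from model_add and model_scale."""
    total = models[0]
    for m in models[1:]:
        total = model_add(total, m)
    return model_scale(total, 1.0 / len(models))
```

Because aggregation rules reduce to these few operators, the same helpers can be reused by different algorithms without touching layer-specific code.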

Please cite our paper in your publications if this code helps your research.

```bibtex
@article{2023flgo,
  title={FLGo: A Lightning Framework for Federated Learning},
  author={},
  journal={},
  year={2023},
  publisher={}
}
```

Zheng Wang

Xiaoliang Fan, https://fanxlxmu.github.io

[McMahan et al., 2017] H. Brendan McMahan, Eider Moore, Daniel Ramage, Seth Hampson, Blaise Agüera y Arcas. Communication-Efficient Learning of Deep Networks from Decentralized Data. In International Conference on Artificial Intelligence and Statistics (AISTATS), 2017.

[Cong Xie et al., 2019] Cong Xie, Sanmi Koyejo, Indranil Gupta. Asynchronous Federated Optimization. arXiv preprint, 2019.

[John Nguyen et al., 2022] John Nguyen, Kshitiz Malik, Hongyuan Zhan, Ashkan Yousefpour, Michael Rabbat, Mani Malek, Dzmitry Huba. Federated Learning with Buffered Asynchronous Aggregation. In International Conference on Artificial Intelligence and Statistics (AISTATS), 2022.

[Mehryar Mohri et al., 2019] Mehryar Mohri, Gary Sivek, Ananda Theertha Suresh. Agnostic Federated Learning. In International Conference on Machine Learning (ICML), 2019.

[Zheng Wang et al., 2021] Zheng Wang, Xiaoliang Fan, Jianzhong Qi, Chenglu Wen, Cheng Wang, Rongshan Yu. Federated Learning with Fair Averaging. In International Joint Conference on Artificial Intelligence (IJCAI), 2021.

[Zeou Hu et al., 2022] Zeou Hu, Kiarash Shaloudegi, Guojun Zhang, Yaoliang Yu. Federated Learning Meets Multi-objective Optimization. In IEEE Transactions on Network Science and Engineering (TNSE), 2022.

[Tian Li et al., 2020] Tian Li, Anit Kumar Sahu, Manzil Zaheer, Maziar Sanjabi, Ameet Talwalkar, Virginia Smith. Federated Optimization in Heterogeneous Networks. In Conference on Machine Learning and Systems (MLSys), 2020.

[Xinran Gu et al., 2021] Xinran Gu, Kaixuan Huang, Jingzhao Zhang, Longbo Huang. Fast Federated Learning in the Presence of Arbitrary Device Unavailability. In Neural Information Processing Systems (NeurIPS), 2021.

[Yae Jee Cho et al., 2020] Yae Jee Cho, Jianyu Wang, Gauri Joshi. Client Selection in Federated Learning: Convergence Analysis and Power-of-Choice Selection Strategies. arXiv preprint, 2020.

[Tian Li et al., 2020] Tian Li, Maziar Sanjabi, Ahmad Beirami, Virginia Smith. Fair Resource Allocation in Federated Learning. In International Conference on Learning Representations (ICLR), 2020.

[Sai Praneeth Karimireddy et al., 2020] Sai Praneeth Karimireddy, Satyen Kale, Mehryar Mohri, Sashank J. Reddi, Sebastian U. Stich, Ananda Theertha Suresh. SCAFFOLD: Stochastic Controlled Averaging for Federated Learning. In International Conference on Machine Learning (ICML), 2020.