FightingCV Codebase for Attention, Backbone, MLP, Re-parameter, Convolution

If you find this project helpful, please give it a star.

Don't forget to follow me to keep up with project updates.

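Hello everyone, I'm Xiaoma 🚀🚀🚀

For beginners (like me): While reading papers recently, I kept running into the same problem. The core idea of a paper is often very simple, and the core code may be only a dozen lines or so. But when I open the source code released by the authors, the proposed module is embedded in a classification, detection, or segmentation framework, which makes the code rather redundant. Because I am not familiar with those task-specific frameworks, I struggle to find the core code, and that makes the paper and the network design harder to understand.

For intermediate users (like you): If basic units such as Conv, FC, and RNN are small Lego bricks, and structures such as Transformer and ResNet are Lego castles that have already been built, then the modules in this project are Lego components, each with complete semantic meaning. They save researchers from reinventing the wheel: you only need to think about how to use these "Lego components" to build more colorful creations.

For experts (maybe like you): My ability is limited, so if you don't like it, please go easy on me!!!

For all: This project aims to be a codebase that deep learning beginners can understand and that also serves the research and industrial communities. As a supplement to the [paper analysis project], its goal, from the code perspective, is 🚀to make sure there are no hard-to-read papers in the world🚀.

(Researchers are also very welcome to contribute the core code of their own work to this project to help advance the research community. The author of the code will be credited in the README.)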
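The Lego analogy above can be sketched in a few lines. This is a minimal illustration, not code from this repository: `ChannelGate` and `ConvBlockWithAttention` are hypothetical names, and the gate is a simple SE-style stand-in for any shape-preserving attention module.

```python
import torch
from torch import nn


class ChannelGate(nn.Module):
    """Hypothetical stand-in for a plug-in attention module (SE-style gate)."""

    def __init__(self, channel: int, reduction: int = 8):
        super().__init__()
        self.pool = nn.AdaptiveAvgPool2d(1)  # (B, C, H, W) -> (B, C, 1, 1)
        self.fc = nn.Sequential(             # per-channel weights in (0, 1)
            nn.Linear(channel, channel // reduction),
            nn.ReLU(inplace=True),
            nn.Linear(channel // reduction, channel),
            nn.Sigmoid(),
        )

    def forward(self, x):
        b, c, _, _ = x.shape
        w = self.fc(self.pool(x).view(b, c)).view(b, c, 1, 1)
        return x * w  # rescale channels; the shape is unchanged


class ConvBlockWithAttention(nn.Module):
    """A basic conv 'brick' followed by any shape-preserving 'component'."""

    def __init__(self, in_ch: int, out_ch: int, attention: nn.Module):
        super().__init__()
        self.conv = nn.Sequential(
            nn.Conv2d(in_ch, out_ch, kernel_size=3, padding=1, bias=False),
            nn.BatchNorm2d(out_ch),
            nn.ReLU(inplace=True),
        )
        self.attention = attention

    def forward(self, x):
        return self.attention(self.conv(x))


block = ConvBlockWithAttention(3, 64, ChannelGate(64))
out = block(torch.randn(2, 3, 32, 32))
print(out.shape)  # torch.Size([2, 64, 32, 32])
```

Because the gate keeps the input shape, it can be swapped for another shape-preserving module without touching the rest of the block.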

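You are welcome to follow the WeChat official account: FightingCV

The official account shares practical content on papers, algorithms, and code every day.

Recent papers and analyses are shared in the group chat every day. Everyone is welcome to join, learn, and discuss.

I strongly recommend following the Zhihu account and the FightingCV official account to quickly find the latest high-quality resources.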

-

Pytorch implementation of "Beyond Self-attention: External Attention using Two Linear Layers for Visual Tasks---arXiv 2021.05.05"

-

Pytorch implementation of "Attention Is All You Need---NIPS2017"

-

Pytorch implementation of "Squeeze-and-Excitation Networks---CVPR2018"

-

Pytorch implementation of "Selective Kernel Networks---CVPR2019"

-

Pytorch implementation of "CBAM: Convolutional Block Attention Module---ECCV2018"

-

Pytorch implementation of "BAM: Bottleneck Attention Module---BMCV2018"

-

Pytorch implementation of "ECA-Net: Efficient Channel Attention for Deep Convolutional Neural Networks---CVPR2020"

-

Pytorch implementation of "Dual Attention Network for Scene Segmentation---CVPR2019"

-

Pytorch implementation of "EPSANet: An Efficient Pyramid Split Attention Block on Convolutional Neural Network---arXiv 2021.05.30"

-

Pytorch implementation of "ResT: An Efficient Transformer for Visual Recognition---arXiv 2021.05.28"

-

Pytorch implementation of "SA-NET: SHUFFLE ATTENTION FOR DEEP CONVOLUTIONAL NEURAL NETWORKS---ICASSP 2021"

-

Pytorch implementation of "MUSE: Parallel Multi-Scale Attention for Sequence to Sequence Learning---arXiv 2019.11.17"

-

Pytorch implementation of "Spatial Group-wise Enhance: Improving Semantic Feature Learning in Convolutional Networks---arXiv 2019.05.23"

-

Pytorch implementation of "A2-Nets: Double Attention Networks---NIPS2018"

-

Pytorch implementation of "An Attention Free Transformer---ICLR2021 (Apple New Work)"

-

Pytorch implementation of VOLO: Vision Outlooker for Visual Recognition---arXiv 2021.06.24" 【论文解析】

-

Pytorch implementation of Vision Permutator: A Permutable MLP-Like Architecture for Visual Recognition---arXiv 2021.06.23 【论文解析】

-

Pytorch implementation of CoAtNet: Marrying Convolution and Attention for All Data Sizes---arXiv 2021.06.09 【论文解析】

-

Pytorch implementation of Scaling Local Self-Attention for Parameter Efficient Visual Backbones---CVPR2021 Oral 【论文解析】

-

Pytorch implementation of Polarized Self-Attention: Towards High-quality Pixel-wise Regression---arXiv 2021.07.02 【论文解析】

-

Pytorch implementation of Contextual Transformer Networks for Visual Recognition---arXiv 2021.07.26 【论文解析】

-

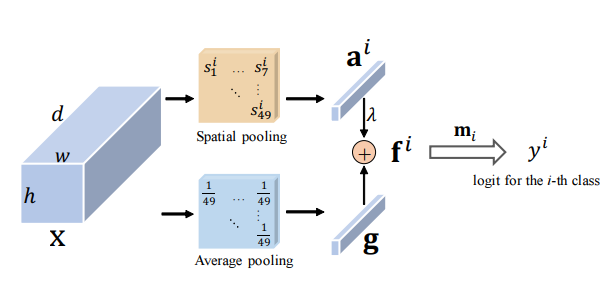

Pytorch implementation of Residual Attention: A Simple but Effective Method for Multi-Label Recognition---ICCV2021

-

Pytorch implementation of S²-MLPv2: Improved Spatial-Shift MLP Architecture for Vision---arXiv 2021.08.02 【论文解析】

-

Pytorch implementation of Global Filter Networks for Image Classification---arXiv 2021.07.01

-

Pytorch implementation of Rotate to Attend: Convolutional Triplet Attention Module---WACV 2021

-

Pytorch implementation of Coordinate Attention for Efficient Mobile Network Design ---CVPR 2021

-

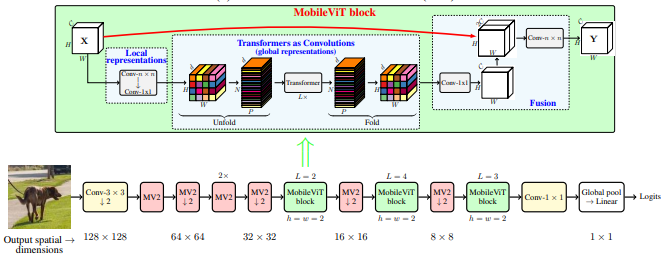

Pytorch implementation of MobileViT: Light-weight, General-purpose, and Mobile-friendly Vision Transformer---ArXiv 2021.10.05

-

Pytorch implementation of Non-deep Networks---ArXiv 2021.10.20

-

Pytorch implementation of UFO-ViT: High Performance Linear Vision Transformer without Softmax---ArXiv 2021.09.29

"Beyond Self-attention: External Attention using Two Linear Layers for Visual Tasks"

from model.attention.ExternalAttention import ExternalAttention

import torch

input=torch.randn(50,49,512)

ea = ExternalAttention(d_model=512,S=8)

output=ea(input)

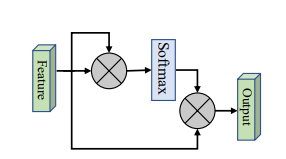

print(output.shape)

"Attention Is All You Need"

from model.attention.SelfAttention import ScaledDotProductAttention

import torch

input=torch.randn(50,49,512)

sa = ScaledDotProductAttention(d_model=512, d_k=512, d_v=512, h=8)

output=sa(input,input,input)

print(output.shape)

from model.attention.SimplifiedSelfAttention import SimplifiedScaledDotProductAttention

import torch

input=torch.randn(50,49,512)

ssa = SimplifiedScaledDotProductAttention(d_model=512, h=8)

output=ssa(input,input,input)

print(output.shape)

"Squeeze-and-Excitation Networks"

from model.attention.SEAttention import SEAttention

import torch

input=torch.randn(50,512,7,7)

se = SEAttention(channel=512,reduction=8)

output=se(input)

print(output.shape)

"Selective Kernel Networks"

from model.attention.SKAttention import SKAttention

import torch

input=torch.randn(50,512,7,7)

se = SKAttention(channel=512,reduction=8)

output=se(input)

print(output.shape)

"CBAM: Convolutional Block Attention Module"

from model.attention.CBAM import CBAMBlock

import torch

input=torch.randn(50,512,7,7)

kernel_size=input.shape[2]

cbam = CBAMBlock(channel=512,reduction=16,kernel_size=kernel_size)

output=cbam(input)

print(output.shape)

"BAM: Bottleneck Attention Module"

from model.attention.BAM import BAMBlock

import torch

input=torch.randn(50,512,7,7)

bam = BAMBlock(channel=512,reduction=16,dia_val=2)

output=bam(input)

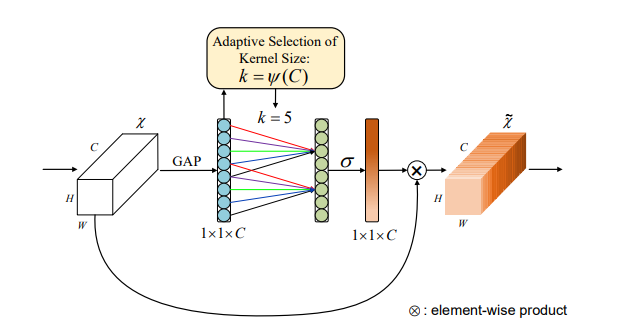

print(output.shape)

"ECA-Net: Efficient Channel Attention for Deep Convolutional Neural Networks"

from model.attention.ECAAttention import ECAAttention

import torch

input=torch.randn(50,512,7,7)

eca = ECAAttention(kernel_size=3)

output=eca(input)

print(output.shape)

"Dual Attention Network for Scene Segmentation"

from model.attention.DANet import DAModule

import torch

input=torch.randn(50,512,7,7)

danet=DAModule(d_model=512,kernel_size=3,H=7,W=7)

print(danet(input).shape)

"EPSANet: An Efficient Pyramid Split Attention Block on Convolutional Neural Network"

from model.attention.PSA import PSA

import torch

input=torch.randn(50,512,7,7)

psa = PSA(channel=512,reduction=8)

output=psa(input)

print(output.shape)

"ResT: An Efficient Transformer for Visual Recognition"

from model.attention.EMSA import EMSA

import torch

from torch import nn

from torch.nn import functional as F

input=torch.randn(50,64,512)

emsa = EMSA(d_model=512, d_k=512, d_v=512, h=8,H=8,W=8,ratio=2,apply_transform=True)

output=emsa(input,input,input)

print(output.shape)

"SA-NET: SHUFFLE ATTENTION FOR DEEP CONVOLUTIONAL NEURAL NETWORKS"

from model.attention.ShuffleAttention import ShuffleAttention

import torch

from torch import nn

from torch.nn import functional as F

input=torch.randn(50,512,7,7)

se = ShuffleAttention(channel=512,G=8)

output=se(input)

print(output.shape)
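Shuffle attention processes channel groups separately and then mixes them with a channel shuffle. The step can be sketched on its own as below. This is a minimal sketch under the assumption that the shuffle is the usual reshape, transpose, reshape pattern; `channel_shuffle` is an illustrative helper, not the repository's method.

```python
import torch


def channel_shuffle(x: torch.Tensor, groups: int) -> torch.Tensor:
    """Interleave channels across groups so information can flow between them."""
    b, c, h, w = x.shape
    assert c % groups == 0, "channels must be divisible by groups"
    x = x.view(b, groups, c // groups, h, w)  # split channels into groups
    x = x.transpose(1, 2).contiguous()        # swap group and per-group axes
    return x.view(b, c, h, w)                 # flatten back to (B, C, H, W)


# Six channels labelled 0..5, split into two groups: [0, 1, 2] and [3, 4, 5].
x = torch.arange(6, dtype=torch.float32).view(1, 6, 1, 1)
shuffled = channel_shuffle(x, groups=2)
print(shuffled.flatten().tolist())  # [0.0, 3.0, 1.0, 4.0, 2.0, 5.0]
```

After the shuffle, neighbouring channels come from different groups, which is what lets a later group-wise operation see features from every group.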

"MUSE: Parallel Multi-Scale Attention for Sequence to Sequence Learning"

from model.attention.MUSEAttention import MUSEAttention

import torch

from torch import nn

from torch.nn import functional as F

input=torch.randn(50,49,512)

sa = MUSEAttention(d_model=512, d_k=512, d_v=512, h=8)

output=sa(input,input,input)

print(output.shape)

"Spatial Group-wise Enhance: Improving Semantic Feature Learning in Convolutional Networks"

from model.attention.SGE import SpatialGroupEnhance

import torch

from torch import nn

from torch.nn import functional as F

input=torch.randn(50,512,7,7)

sge = SpatialGroupEnhance(groups=8)

output=sge(input)

print(output.shape)

"A2-Nets: Double Attention Networks"

from model.attention.A2Atttention import DoubleAttention

import torch

from torch import nn

from torch.nn import functional as F

input=torch.randn(50,512,7,7)

a2 = DoubleAttention(512,128,128,True)

output=a2(input)

print(output.shape)

"An Attention Free Transformer"

from model.attention.AFT import AFT_FULL

import torch

from torch import nn

from torch.nn import functional as F

input=torch.randn(50,49,512)

aft_full = AFT_FULL(d_model=512, n=49)

output=aft_full(input)

print(output.shape)

"VOLO: Vision Outlooker for Visual Recognition"

from model.attention.OutlookAttention import OutlookAttention

import torch

from torch import nn

from torch.nn import functional as F

input=torch.randn(50,28,28,512)

outlook = OutlookAttention(dim=512)

output=outlook(input)

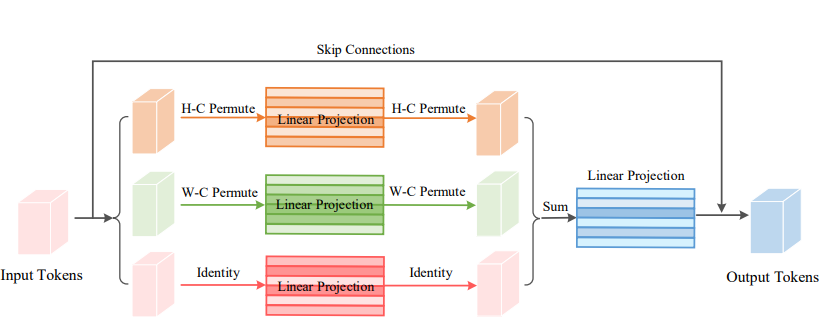

print(output.shape)

"Vision Permutator: A Permutable MLP-Like Architecture for Visual Recognition"

from model.attention.ViP import WeightedPermuteMLP

import torch

from torch import nn

from torch.nn import functional as F

input=torch.randn(64,8,8,512)

seg_dim=8

vip=WeightedPermuteMLP(512,seg_dim)

out=vip(input)

print(out.shape)

"CoAtNet: Marrying Convolution and Attention for All Data Sizes"


from model.attention.CoAtNet import CoAtNet

import torch

from torch import nn

from torch.nn import functional as F

input=torch.randn(1,3,224,224)

mbconv=CoAtNet(in_ch=3,image_size=224)

out=mbconv(input)

print(out.shape)

"Scaling Local Self-Attention for Parameter Efficient Visual Backbones"

from model.attention.HaloAttention import HaloAttention

import torch

from torch import nn

from torch.nn import functional as F

input=torch.randn(1,512,8,8)

halo = HaloAttention(dim=512, block_size=2, halo_size=1)

output=halo(input)

print(output.shape)

"Polarized Self-Attention: Towards High-quality Pixel-wise Regression"

from model.attention.PolarizedSelfAttention import ParallelPolarizedSelfAttention,SequentialPolarizedSelfAttention

import torch

from torch import nn

from torch.nn import functional as F

input=torch.randn(1,512,7,7)

psa = SequentialPolarizedSelfAttention(channel=512)

output=psa(input)

print(output.shape)

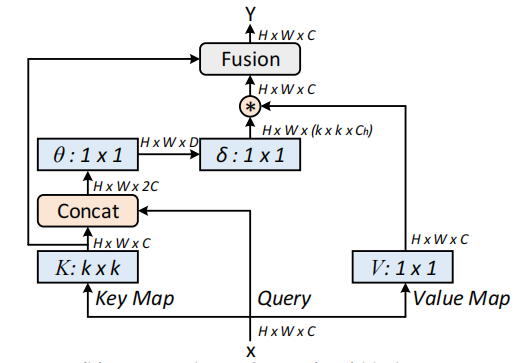

Contextual Transformer Networks for Visual Recognition---arXiv 2021.07.26

from model.attention.CoTAttention import CoTAttention

import torch

from torch import nn

from torch.nn import functional as F

input=torch.randn(50,512,7,7)

cot = CoTAttention(dim=512,kernel_size=3)

output=cot(input)

print(output.shape)

Residual Attention: A Simple but Effective Method for Multi-Label Recognition---ICCV2021

from model.attention.ResidualAttention import ResidualAttention

import torch

from torch import nn

from torch.nn import functional as F

input=torch.randn(50,512,7,7)

resatt = ResidualAttention(channel=512,num_class=1000,la=0.2)

output=resatt(input)

print(output.shape)
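The idea behind residual attention for multi-label recognition can be sketched without the repository code. The block below assumes the simplified form in which each class score is the spatial average of per-location scores plus a weighted spatial maximum; `ResidualAttentionSketch` is a hypothetical name, and the weight `la` mirrors the parameter in the usage above.

```python
import torch
from torch import nn


class ResidualAttentionSketch(nn.Module):
    """Illustrative only: average-pooled score plus `la` times max-pooled score."""

    def __init__(self, channel: int, num_class: int, la: float = 0.2):
        super().__init__()
        self.la = la
        # A 1x1 convolution acts as a per-location linear classifier.
        self.fc = nn.Conv2d(channel, num_class, kernel_size=1, bias=False)

    def forward(self, x):
        scores = self.fc(x).flatten(2)        # (B, num_class, H*W)
        avg_score = scores.mean(dim=2)        # global, class-agnostic pooling
        max_score = scores.max(dim=2).values  # strongest location per class
        return avg_score + self.la * max_score


x = torch.randn(4, 32, 7, 7)
logits = ResidualAttentionSketch(channel=32, num_class=10)(x)
print(logits.shape)  # torch.Size([4, 10])
```

With `la=0` the module reduces to plain global average pooling followed by a linear classifier, so the max term can be read as a residual added on top of the usual head.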

S²-MLPv2: Improved Spatial-Shift MLP Architecture for Vision---arXiv 2021.08.02

from model.attention.S2Attention import S2Attention

import torch

from torch import nn

from torch.nn import functional as F

input=torch.randn(50,512,7,7)

s2att = S2Attention(channels=512)

output=s2att(input)

print(output.shape)

Global Filter Networks for Image Classification---arXiv 2021.07.01

25.3. Usage Code - Implemented by Wenliang Zhao (Author)

from model.attention.gfnet import GFNet

import torch

from torch import nn

from torch.nn import functional as F

x = torch.randn(1, 3, 224, 224)

gfnet = GFNet(embed_dim=384, img_size=224, patch_size=16, num_classes=1000)

out = gfnet(x)

print(out.shape)

Rotate to Attend: Convolutional Triplet Attention Module---WACV 2021

26.3. Usage Code - Implemented by digantamisra98

from model.attention.TripletAttention import TripletAttention

import torch

from torch import nn

from torch.nn import functional as F

input=torch.randn(50,512,7,7)

triplet = TripletAttention()

output=triplet(input)

print(output.shape)

Coordinate Attention for Efficient Mobile Network Design---CVPR 2021

27.3. Usage Code - Implemented by Andrew-Qibin

from model.attention.CoordAttention import CoordAtt

import torch

from torch import nn

from torch.nn import functional as F

inp=torch.rand([2, 96, 56, 56])

inp_dim, oup_dim = 96, 96

reduction=32

coord_attention = CoordAtt(inp_dim, oup_dim, reduction=reduction)

output=coord_attention(inp)

print(output.shape)

MobileViT: Light-weight, General-purpose, and Mobile-friendly Vision Transformer---ArXiv 2021.10.05

from model.attention.MobileViTAttention import MobileViTAttention

import torch

from torch import nn

from torch.nn import functional as F

if __name__ == '__main__':

    m=MobileViTAttention()

    input=torch.randn(1,3,49,49)

    output=m(input)

    print(output.shape) #output:(1,3,49,49)

Non-deep Networks---ArXiv 2021.10.20

from model.attention.ParNetAttention import *

import torch

from torch import nn

from torch.nn import functional as F

if __name__ == '__main__':

    input=torch.randn(50,512,7,7)

    pna = ParNetAttention(channel=512)

    output=pna(input)

    print(output.shape) #50,512,7,7

UFO-ViT: High Performance Linear Vision Transformer without Softmax---ArXiv 2021.09.29

from model.attention.UFOAttention import *

import torch

from torch import nn

from torch.nn import functional as F

if __name__ == '__main__':

    input=torch.randn(50,49,512)

    ufo = UFOAttention(d_model=512, d_k=512, d_v=512, h=8)

    output=ufo(input,input,input)

    print(output.shape) #[50, 49, 512]
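The words "linear" and "without softmax" in the UFO-ViT title are connected. Once the softmax is dropped, the attention product can be regrouped so that no n x n matrix is ever built. The sketch below shows only this regrouping. It deliberately omits whatever normalization the paper applies in place of softmax, and the sizes are arbitrary illustration values.

```python
import torch

torch.manual_seed(0)
n, d = 49, 16  # sequence length and feature dimension (illustrative values)
q = torch.randn(n, d, dtype=torch.float64)
k = torch.randn(n, d, dtype=torch.float64)
v = torch.randn(n, d, dtype=torch.float64)

# Standard grouping: builds an (n, n) attention map, cost grows with n squared.
quadratic = (q @ k.T) @ v

# Regrouped: builds only a (d, d) matrix, cost grows linearly with n.
linear = q @ (k.T @ v)

print(torch.allclose(quadratic, linear))  # True
```

The two results agree because matrix multiplication is associative. A softmax between the two products would break that equality, which is why softmax-free designs can use the cheaper grouping.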

- PyTorch implementation of "Deep Residual Learning for Image Recognition---CVPR2016 Best Paper"
- PyTorch implementation of "Aggregated Residual Transformations for Deep Neural Networks---CVPR2017"
- PyTorch implementation of MobileViT: Light-weight, General-purpose, and Mobile-friendly Vision Transformer---ArXiv 2021.10.05
- PyTorch implementation of Patches Are All You Need?---ICLR2022 (Under Review)

"Deep Residual Learning for Image Recognition---CVPR2016 Best Paper"

from model.backbone.resnet import ResNet50,ResNet101,ResNet152

import torch

if __name__ == '__main__':

    input=torch.randn(50,3,224,224)

    resnet50=ResNet50(1000)

    # resnet101=ResNet101(1000)

    # resnet152=ResNet152(1000)

    out=resnet50(input)

    print(out.shape)

"Aggregated Residual Transformations for Deep Neural Networks---CVPR2017"

from model.backbone.resnext import ResNeXt50,ResNeXt101,ResNeXt152

import torch

if __name__ == '__main__':

    input=torch.randn(50,3,224,224)

    resnext50=ResNeXt50(1000)

    # resnext101=ResNeXt101(1000)

    # resnext152=ResNeXt152(1000)

    out=resnext50(input)

    print(out.shape)
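ResNeXt's aggregated transformations are commonly realised as a grouped convolution. The sketch below compares the weight count of a dense 3x3 convolution with a grouped one. The channel width (128) and group count (32) are illustrative assumptions, not values read from this repository, and `weight_count` is a hypothetical helper.

```python
from torch import nn


def weight_count(conv: nn.Conv2d) -> int:
    """Number of scalar weights in a convolution (bias excluded)."""
    return conv.weight.numel()


dense = nn.Conv2d(128, 128, kernel_size=3, padding=1, bias=False)
grouped = nn.Conv2d(128, 128, kernel_size=3, padding=1, groups=32, bias=False)

print(weight_count(dense))    # 147456 = 128 * 128 * 3 * 3
print(weight_count(grouped))  # 4608   = 128 * (128 // 32) * 3 * 3
```

Each of the 32 groups only sees 4 input channels, so the grouped layer needs 32 times fewer weights at the same width. That saving is what lets a ResNeXt block widen its bottleneck at a similar cost.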

MobileViT: Light-weight, General-purpose, and Mobile-friendly Vision Transformer---ArXiv 2021.10.05

from model.backbone.MobileViT import *

import torch

from torch import nn

from torch.nn import functional as F

if __name__ == '__main__':

    input=torch.randn(1,3,224,224)

    # mobilevit_xxs

    mvit_xxs=mobilevit_xxs()

    out=mvit_xxs(input)

    print(out.shape)

    # mobilevit_xs

    mvit_xs=mobilevit_xs()

    out=mvit_xs(input)

    print(out.shape)

    # mobilevit_s

    mvit_s=mobilevit_s()

    out=mvit_s(input)

    print(out.shape)

Patches Are All You Need?---ICLR2022 (Under Review)

from model.backbone.ConvMixer import *

import torch

from torch import nn

from torch.nn import functional as F

if __name__ == '__main__':

    x=torch.randn(1,3,224,224)

    convmixer=ConvMixer(dim=512,depth=12)

    out=convmixer(x)

    print(out.shape) #[1, 1000]
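For orientation, one ConvMixer block can be sketched as a depthwise convolution with a residual connection (spatial mixing) followed by a pointwise convolution (channel mixing). This is a minimal sketch based on the block structure described in the paper. `Residual` and `conv_mixer_block` are illustrative names and are not necessarily how `model.backbone.ConvMixer` is organised.

```python
import torch
from torch import nn


class Residual(nn.Module):
    """Adds the input back to the output of the wrapped module."""

    def __init__(self, fn: nn.Module):
        super().__init__()
        self.fn = fn

    def forward(self, x):
        return self.fn(x) + x


def conv_mixer_block(dim: int, kernel_size: int = 9) -> nn.Sequential:
    return nn.Sequential(
        # Spatial mixing: depthwise conv (groups=dim) with 'same' padding.
        Residual(nn.Sequential(
            nn.Conv2d(dim, dim, kernel_size, groups=dim, padding=kernel_size // 2),
            nn.GELU(),
            nn.BatchNorm2d(dim),
        )),
        # Channel mixing: pointwise (1x1) conv.
        nn.Conv2d(dim, dim, kernel_size=1),
        nn.GELU(),
        nn.BatchNorm2d(dim),
    )


x = torch.randn(2, 64, 14, 14)
y = conv_mixer_block(64)(x)
print(y.shape)  # torch.Size([2, 64, 14, 14])
```

The block keeps both resolution and width unchanged, so `depth` such blocks can be stacked directly after the patch embedding.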

- PyTorch implementation of "RepMLP: Re-parameterizing Convolutions into Fully-connected Layers for Image Recognition---arXiv 2021.05.05"
- PyTorch implementation of "MLP-Mixer: An all-MLP Architecture for Vision---arXiv 2021.05.17"
- PyTorch implementation of "ResMLP: Feedforward networks for image classification with data-efficient training---arXiv 2021.05.07"
- PyTorch implementation of "Pay Attention to MLPs---arXiv 2021.05.17"
- PyTorch implementation of "Sparse MLP for Image Recognition: Is Self-Attention Really Necessary?---arXiv 2021.09.12"

"RepMLP: Re-parameterizing Convolutions into Fully-connected Layers for Image Recognition"

from model.mlp.repmlp import RepMLP

import torch

from torch import nn

N=4 #batch size

C=512 #input dim

O=1024 #output dim

H=14 #image height

W=14 #image width

h=7 #patch height

w=7 #patch width

fc1_fc2_reduction=1 #reduction ratio

fc3_groups=8 # groups

repconv_kernels=[1,3,5,7] #kernel list

repmlp=RepMLP(C,O,H,W,h,w,fc1_fc2_reduction,fc3_groups,repconv_kernels=repconv_kernels)

x=torch.randn(N,C,H,W)

repmlp.eval()

for module in repmlp.modules():

    if isinstance(module, nn.BatchNorm2d) or isinstance(module, nn.BatchNorm1d):

        nn.init.uniform_(module.running_mean, 0, 0.1)

        nn.init.uniform_(module.running_var, 0, 0.1)

        nn.init.uniform_(module.weight, 0, 0.1)

        nn.init.uniform_(module.bias, 0, 0.1)

#training result

out=repmlp(x)

#inference result

repmlp.switch_to_deploy()

deployout = repmlp(x)

print(((deployout-out)**2).sum())

"MLP-Mixer: An all-MLP Architecture for Vision"

from model.mlp.mlp_mixer import MlpMixer

import torch

mlp_mixer=MlpMixer(num_classes=1000,num_blocks=10,patch_size=10,tokens_hidden_dim=32,channels_hidden_dim=1024,tokens_mlp_dim=16,channels_mlp_dim=1024)

input=torch.randn(50,3,40,40)

output=mlp_mixer(input)

print(output.shape)

"ResMLP: Feedforward networks for image classification with data-efficient training"

from model.mlp.resmlp import ResMLP

import torch

input=torch.randn(50,3,14,14)

resmlp=ResMLP(dim=128,image_size=14,patch_size=7,class_num=1000)

out=resmlp(input)

print(out.shape) #the last dimension is class_num

"Pay Attention to MLPs"

from model.mlp.g_mlp import gMLP

import torch

num_tokens=10000

bs=50

len_sen=49

num_layers=6

input=torch.randint(num_tokens,(bs,len_sen)) #bs,len_sen

gmlp = gMLP(num_tokens=num_tokens,len_sen=len_sen,dim=512,d_ff=1024)

output=gmlp(input)

print(output.shape)

"Sparse MLP for Image Recognition: Is Self-Attention Really Necessary?"

from model.mlp.sMLP_block import sMLPBlock

import torch

from torch import nn

from torch.nn import functional as F

if __name__ == '__main__':

input=torch.randn(50,3,224,224)

smlp=sMLPBlock(h=224,w=224)

out=smlp(input)

print(out.shape)-

Pytorch implementation of "RepVGG: Making VGG-style ConvNets Great Again---CVPR2021"

-

Pytorch implementation of "ACNet: Strengthening the Kernel Skeletons for Powerful CNN via Asymmetric Convolution Blocks---ICCV2019"

-

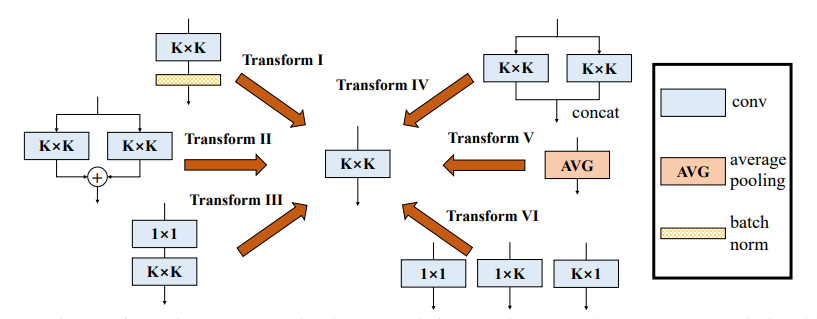

Pytorch implementation of "Diverse Branch Block: Building a Convolution as an Inception-like Unit---CVPR2021"
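The re-parameterization papers listed above share one basic step: at inference time a BatchNorm layer can be folded into the convolution that precedes it. The block below is a self-contained sketch of that folding. `fuse_conv_bn` is a hypothetical helper written for illustration and is not the repository's `transI_conv_bn`.

```python
import torch
from torch import nn


def fuse_conv_bn(conv: nn.Conv2d, bn: nn.BatchNorm2d):
    """Return (weight, bias) of a single conv equivalent to bn(conv(x)) in eval mode."""
    std = torch.sqrt(bn.running_var + bn.eps)
    scale = bn.weight / std                         # one factor per output channel
    weight = conv.weight * scale.view(-1, 1, 1, 1)  # scale each output filter
    conv_bias = conv.bias if conv.bias is not None else torch.zeros_like(bn.running_mean)
    bias = bn.bias + (conv_bias - bn.running_mean) * scale
    return weight, bias


torch.manual_seed(0)
conv = nn.Conv2d(8, 8, 3, padding=1)
bn = nn.BatchNorm2d(8)
bn.running_mean.uniform_(-0.5, 0.5)  # non-trivial statistics for the check
bn.running_var.uniform_(0.5, 1.5)
bn.eval()                            # fusion is only valid with fixed statistics

fused = nn.Conv2d(8, 8, 3, padding=1)
with torch.no_grad():
    w, b = fuse_conv_bn(conv, bn)
    fused.weight.copy_(w)
    fused.bias.copy_(b)
    x = torch.randn(2, 8, 7, 7)
    max_diff = (fused(x) - bn(conv(x))).abs().max().item()

print(max_diff)  # a value near float32 rounding error
```

The check only holds in `eval()` mode. In training mode BatchNorm uses batch statistics, so no fixed convolution can reproduce it.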

"RepVGG: Making VGG-style ConvNets Great Again"

from model.rep.repvgg import RepBlock

import torch

input=torch.randn(50,512,49,49)

repblock=RepBlock(512,512)

repblock.eval()

out=repblock(input)

repblock._switch_to_deploy()

out2=repblock(input)

print('difference between vgg and repvgg')

print(((out2-out)**2).sum())

"ACNet: Strengthening the Kernel Skeletons for Powerful CNN via Asymmetric Convolution Blocks"

from model.rep.acnet import ACNet

import torch

from torch import nn

input=torch.randn(50,512,49,49)

acnet=ACNet(512,512)

acnet.eval()

out=acnet(input)

acnet._switch_to_deploy()

out2=acnet(input)

print('difference:')

print(((out2-out)**2).sum())

"Diverse Branch Block: Building a Convolution as an Inception-like Unit"

from model.rep.ddb import transI_conv_bn

import torch

from torch import nn

from torch.nn import functional as F

input=torch.randn(1,64,7,7)

#conv+bn

conv1=nn.Conv2d(64,64,3,padding=1)

bn1=nn.BatchNorm2d(64)

bn1.eval()

out1=bn1(conv1(input))

#conv_fuse

conv_fuse=nn.Conv2d(64,64,3,padding=1)

conv_fuse.weight.data,conv_fuse.bias.data=transI_conv_bn(conv1,bn1)

out2=conv_fuse(input)

print("difference:",((out2-out1)**2).sum().item())

from model.rep.ddb import transII_conv_branch

import torch

from torch import nn

from torch.nn import functional as F

input=torch.randn(1,64,7,7)

#conv+conv

conv1=nn.Conv2d(64,64,3,padding=1)

conv2=nn.Conv2d(64,64,3,padding=1)

out1=conv1(input)+conv2(input)

#conv_fuse

conv_fuse=nn.Conv2d(64,64,3,padding=1)

conv_fuse.weight.data,conv_fuse.bias.data=transII_conv_branch(conv1,conv2)

out2=conv_fuse(input)

print("difference:",((out2-out1)**2).sum().item())

from model.rep.ddb import transIII_conv_sequential

import torch

from torch import nn

from torch.nn import functional as F

input=torch.randn(1,64,7,7)

#conv+conv

conv1=nn.Conv2d(64,64,1,padding=0,bias=False)

conv2=nn.Conv2d(64,64,3,padding=1,bias=False)

out1=conv2(conv1(input))

#conv_fuse

conv_fuse=nn.Conv2d(64,64,3,padding=1,bias=False)

conv_fuse.weight.data=transIII_conv_sequential(conv1,conv2)

out2=conv_fuse(input)

print("difference:",((out2-out1)**2).sum().item())

from model.rep.ddb import transIV_conv_concat

import torch

from torch import nn

from torch.nn import functional as F

input=torch.randn(1,64,7,7)

#conv+conv

conv1=nn.Conv2d(64,32,3,padding=1)

conv2=nn.Conv2d(64,32,3,padding=1)

out1=torch.cat([conv1(input),conv2(input)],dim=1)

#conv_fuse

conv_fuse=nn.Conv2d(64,64,3,padding=1)

conv_fuse.weight.data,conv_fuse.bias.data=transIV_conv_concat(conv1,conv2)

out2=conv_fuse(input)

print("difference:",((out2-out1)**2).sum().item())

from model.rep.ddb import transV_avg

import torch

from torch import nn

from torch.nn import functional as F

input=torch.randn(1,64,7,7)

avg=nn.AvgPool2d(kernel_size=3,stride=1)

out1=avg(input)

conv=transV_avg(64,3)

out2=conv(input)

print("difference:",((out2-out1)**2).sum().item())

from model.rep.ddb import transVI_conv_scale

import torch

from torch import nn

from torch.nn import functional as F

input=torch.randn(1,64,7,7)

#conv+conv

conv1x1=nn.Conv2d(64,64,1)

conv1x3=nn.Conv2d(64,64,(1,3),padding=(0,1))

conv3x1=nn.Conv2d(64,64,(3,1),padding=(1,0))

out1=conv1x1(input)+conv1x3(input)+conv3x1(input)

#conv_fuse

conv_fuse=nn.Conv2d(64,64,3,padding=1)

conv_fuse.weight.data,conv_fuse.bias.data=transVI_conv_scale(conv1x1,conv1x3,conv3x1)

out2=conv_fuse(input)

print("difference:",((out2-out1)**2).sum().item())-

Pytorch implementation of "MobileNets: Efficient Convolutional Neural Networks for Mobile Vision Applications---CVPR2017"

-

Pytorch implementation of "Efficientnet: Rethinking model scaling for convolutional neural networks---PMLR2019"

-

Pytorch implementation of "Involution: Inverting the Inherence of Convolution for Visual Recognition---CVPR2021"

-

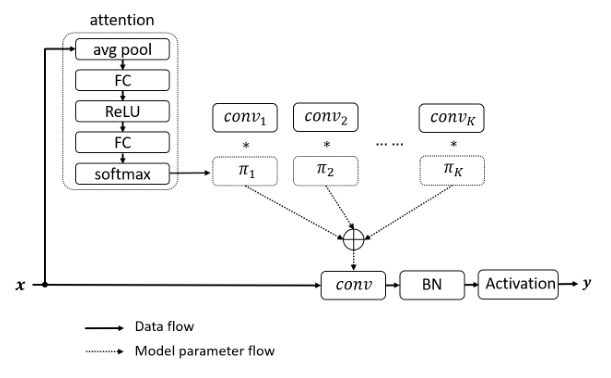

Pytorch implementation of "Dynamic Convolution: Attention over Convolution Kernels---CVPR2020 Oral"

-

Pytorch implementation of "CondConv: Conditionally Parameterized Convolutions for Efficient Inference---NeurIPS2019"

"MobileNets: Efficient Convolutional Neural Networks for Mobile Vision Applications"

from model.conv.DepthwiseSeparableConvolution import DepthwiseSeparableConvolution

import torch

from torch import nn

from torch.nn import functional as F

input=torch.randn(1,3,224,224)

dsconv=DepthwiseSeparableConvolution(3,64)

out=dsconv(input)

print(out.shape)

"Efficientnet: Rethinking model scaling for convolutional neural networks"

from model.conv.MBConv import MBConvBlock

import torch

from torch import nn

from torch.nn import functional as F

input=torch.randn(1,3,224,224)

mbconv=MBConvBlock(ksize=3,input_filters=3,output_filters=512,image_size=224)

out=mbconv(input)

print(out.shape)

"Involution: Inverting the Inherence of Convolution for Visual Recognition"

from model.conv.Involution import Involution

import torch

from torch import nn

from torch.nn import functional as F

input=torch.randn(1,4,64,64)

involution=Involution(kernel_size=3,in_channel=4,stride=2)

out=involution(input)

print(out.shape)

"Dynamic Convolution: Attention over Convolution Kernels"

from model.conv.DynamicConv import *

import torch

from torch import nn

from torch.nn import functional as F

if __name__ == '__main__':

    input=torch.randn(2,32,64,64)

    m=DynamicConv(in_planes=32,out_planes=64,kernel_size=3,stride=1,padding=1,bias=False)

    out=m(input)

    print(out.shape) # 2,64,64,64

"CondConv: Conditionally Parameterized Convolutions for Efficient Inference"

from model.conv.CondConv import *

import torch

from torch import nn

from torch.nn import functional as F

if __name__ == '__main__':

    input=torch.randn(2,32,64,64)

    m=CondConv(in_planes=32,out_planes=64,kernel_size=3,stride=1,padding=1,bias=False)

    out=m(input)

    print(out.shape)
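The mechanism behind conditionally parameterized convolution can be sketched as follows: a small router produces per-sample weights over several expert kernels, the experts are mixed into one kernel per sample, and a grouped convolution applies each sample's kernel in a single call. This is a minimal sketch under those assumptions. `CondConvSketch`, `mixed_kernels`, and `num_experts` are illustrative names, and the repository's `CondConv` may differ in its details.

```python
import torch
from torch import nn
from torch.nn import functional as F


class CondConvSketch(nn.Module):
    """Illustrative only: per-sample kernels mixed from a bank of experts."""

    def __init__(self, in_planes, out_planes, kernel_size, num_experts=4, padding=0):
        super().__init__()
        self.out_planes, self.kernel_size, self.padding = out_planes, kernel_size, padding
        self.experts = nn.Parameter(
            0.1 * torch.randn(num_experts, out_planes, in_planes, kernel_size, kernel_size))
        self.router = nn.Linear(in_planes, num_experts)

    def mixed_kernels(self, x):
        # Routing weights from globally pooled features, one set per sample: (B, E).
        routing = torch.sigmoid(self.router(x.mean(dim=(2, 3))))
        # Weighted sum of expert kernels: (B, O, I, k, k).
        return torch.einsum('be,eoikl->boikl', routing, self.experts)

    def forward(self, x):
        b, c, h, w = x.shape
        k = self.kernel_size
        kernels = self.mixed_kernels(x).reshape(b * self.out_planes, c, k, k)
        # Fold the batch into channels; groups=b gives every sample its own kernel.
        out = F.conv2d(x.reshape(1, b * c, h, w), kernels, padding=self.padding, groups=b)
        return out.reshape(b, self.out_planes, out.shape[-2], out.shape[-1])


torch.manual_seed(0)
m = CondConvSketch(8, 16, 3, padding=1)
x = torch.randn(2, 8, 10, 10)
out = m(x)

# Reference: convolve each sample separately with its own mixed kernel.
ref = torch.cat([F.conv2d(x[i:i + 1], k, padding=1)
                 for i, k in enumerate(m.mixed_kernels(x))])
print(out.shape, torch.allclose(out, ref, atol=1e-4))
```

Mixing kernels before convolving costs one convolution per sample, whereas running every expert and mixing the outputs would cost one per expert. That difference is the efficiency argument behind this design.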