About • How To Use • Citations • License

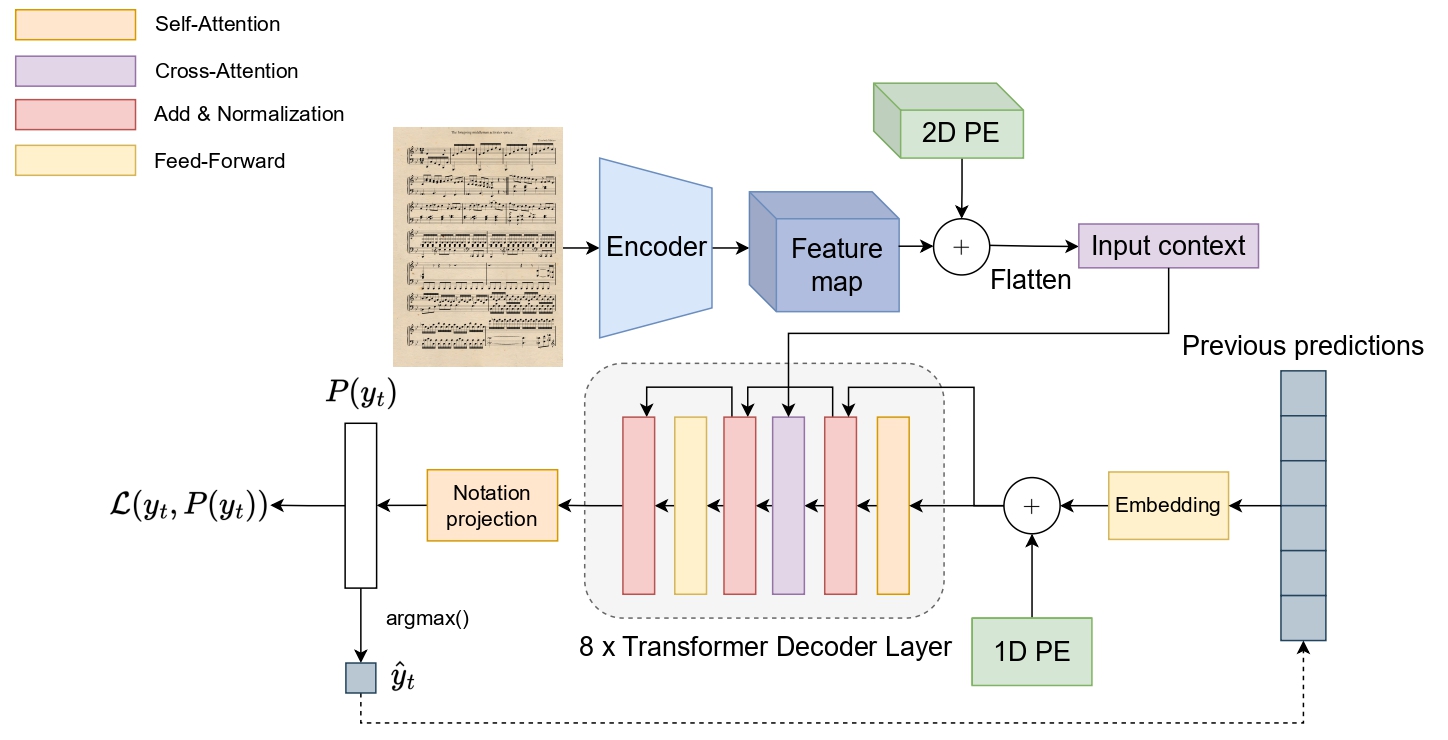

This GitHub repository contains the implementation of the upgraded version of the Sheet Music Transformer (SMT) model for full-page pianoform sheet music transcription. Unlike traditional approaches, which typically tackle this challenge by combining layout analysis techniques with end-to-end transcription, this SMT model offers a complete end-to-end system that transcribes these scores directly from images. To do so, the model is trained through a progressive curriculum learning strategy with synthetic data generation.

Warning

This is a work-in-progress project. Although it is quite advanced and has an associated preprint, some bugs may still be found.

This implementation has been developed in Python 3.9, PyTorch 2.0 and CUDA 12.0.

It should work in earlier versions.
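To compare a local setup against the versions listed above, a minimal sketch such as the following could be used. The helper name `report_versions` is hypothetical and not part of this repository; it only assumes that PyTorch, when installed, exposes `__version__` and `torch.version.cuda`.

```python
import importlib
import platform


def report_versions(packages=("torch",)):
    """Collect the interpreter version and the versions of optional packages."""
    versions = {"python": platform.python_version()}
    for name in packages:
        try:
            module = importlib.import_module(name)
        except ImportError:
            # The package is not installed in this environment.
            versions[name] = None
            continue
        versions[name] = getattr(module, "__version__", "unknown")
        if name == "torch":
            # CUDA version PyTorch was built against (None for CPU-only builds).
            versions["cuda"] = getattr(module.version, "cuda", None)
    return versions


if __name__ == "__main__":
    for name, version in report_versions().items():
        print(f"{name}: {version if version is not None else 'not available'}")
```

The reported values can then be checked by eye against Python 3.9, PyTorch 2.0 and CUDA 12.0.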

To set up the project, create a virtual environment using either virtualenv or conda and run the following:

git clone https://github.com/antoniorv6/SMT.git

cd SMT

pip install -r requirements.txt

mkdir Data

If you are using Docker to run experiments, create an image with the provided Dockerfile:

docker build -t <your_tag> .

docker run -itd --rm --gpus all --shm-size=8gb -v <repository_path>:/workspace/ <image_tag>

docker exec -it <docker_container_id> /bin/bash

Transcribing scores with the SMT is straightforward thanks to the HuggingFace Transformers 🤗 library. Run the following code to get the SMT up and running for transcribing excerpts!

import torch

import cv2

from data_augmentation.data_augmentation import convert_img_to_tensor

from smt_model import SMTModelForCausalLM

image = cv2.imread("sample.jpg")

device = "cuda" if torch.cuda.is_available() else "cpu"

model = SMTModelForCausalLM.from_pretrained("antoniorv6/<smt-weights>").to(device)

predictions, _ = model.predict(convert_img_to_tensor(image).unsqueeze(0).to(device), convert_to_str=True)

print("".join(predictions).replace('<b>', '\n').replace('<s>', ' ').replace('<t>', '\t'))

Important

Access to the datasets should be formally requested through the HuggingFace form interface. Please,

Two of the three datasets created to evaluate the SMT are publicly available for replication purposes.

Everything is implemented through the HuggingFace Datasets 🤗 library, so any of these datasets can be loaded with a single line of code:

import datasets

dataset = datasets.load_dataset('antoniorv6/<dataset-name>')

The dataset has two columns: image, which contains the original image of the music excerpt used as input, and transcription, which contains the corresponding bekern notation ground truth that represents the content of this input.
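As a rough sketch of how the two columns might be consumed, the helper below summarises a single row. It assumes each row behaves like a dictionary with `image` and `transcription` keys and that the image exposes a PIL-style `size` attribute. The name `summarize_sample` and the stand-in row are hypothetical and exist only so the example runs without dataset access; the placeholder text is not real bekern.

```python
from types import SimpleNamespace


def summarize_sample(sample):
    """Describe one dataset row using the two documented columns."""
    image = sample["image"]
    transcription = sample["transcription"]
    # Assumption: the image is PIL-like and exposes size as (width, height).
    width, height = getattr(image, "size", (None, None))
    return {
        "width": width,
        "height": height,
        "transcription_lines": len(transcription.splitlines()),
        "transcription_chars": len(transcription),
    }


# Stand-in row (hypothetical values); a real row would come from indexing
# a split of the loaded dataset.
fake_row = {
    "image": SimpleNamespace(size=(2100, 2970)),
    "transcription": "placeholder line 1\nplaceholder line 2",
}
print(summarize_sample(fake_row))
```

With access granted, the same helper could be applied to a row taken from whichever split the loaded dataset provides.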

@misc{RiosVila:2024:SMTplusplus,

title={End-to-End Full-Page Optical Music Recognition for Pianoform Sheet Music},

author={Antonio Ríos-Vila and Jorge Calvo-Zaragoza and David Rizo and Thierry Paquet},

year={2024},

eprint={2405.12105},

archivePrefix={arXiv},

primaryClass={cs.CV}

}