The author's officially unofficial PyTorch EvolutionaryGAN implementation. The original Theano code can be found here.

The author is still working on improving the PyTorch version and is adding more related functions to achieve better performance. Besides the proposed E-GAN framework, we also provide the two_player_gan_model framework, which helps integrate several existing GAN models.

- Linux or macOS

- Python 3

- CPU or NVIDIA GPU + CUDA CuDNN

- Clone this repo:

git clone https://github.com/WANG-Chaoyue/EvolutionaryGAN-pytorch.git

cd EvolutionaryGAN-pytorch

- Install PyTorch and the other dependencies listed in requirements.txt (e.g., torchvision, visdom and dominate).

- Preparing .npz files for PyTorch Inception metrics evaluation (cifar10 as an example):

python inception_pytorch/calculate_inception_moments.py --dataset C10 --data_root datasets

An example LSGAN training command is saved in ./scripts/train_lsgan_cifar10.sh. To train a model (cifar10 as an example):

bash ./scripts/train_lsgan_cifar10.sh

By configuring the arguments --g_loss_mode, --d_loss_mode and --which_D, different training strategies can be used to train the two-player GAN game. The loss settings are explained in more detail below.

An example E-GAN training command is saved in ./scripts/train_egan_cifar10.sh. To train a model (cifar10 as an example):

bash ./scripts/train_egan_cifar10.sh

Unlike two-player GANs, here the argument --g_loss_mode should be set to a list of losses (e.g., --g_loss_mode vanilla nsgan lsgan), which correspond to different mutations (or variations).
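As a rough illustration of how a list-valued --g_loss_mode can be read, the sketch below uses Python's argparse with nargs="+". This is an assumption about the parsing style, not the repository's actual option code; the function name parse_example and all default values are hypothetical.

```python
import argparse


def parse_example(argv):
    """Parse a toy subset of the training flags (hypothetical helper)."""
    parser = argparse.ArgumentParser(description="Toy E-GAN option parser (sketch)")
    parser.add_argument("--model", default="egan")
    # nargs="+" collects one or more values into a list, so
    # "--g_loss_mode vanilla nsgan lsgan" becomes three mutation candidates.
    parser.add_argument("--g_loss_mode", nargs="+", default=["lsgan"])
    parser.add_argument("--d_loss_mode", default="lsgan")
    parser.add_argument("--which_D", default="S")
    return parser.parse_args(argv)


if __name__ == "__main__":
    opts = parse_example(
        "--model egan --g_loss_mode vanilla nsgan lsgan --d_loss_mode lsgan".split()
    )
    for mutation in opts.g_loss_mode:
        print("generator mutation:", mutation)
```

With a two-player model, the same flag would simply carry a single value, so one parser can serve both cases.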

This code borrows heavily from pytorch-CycleGAN-and-pix2pix, since it provides a flexible and efficient framework for training deep networks in PyTorch. In this part, we briefly introduce the functions of this code. The author is working on implementing more GAN-related functions.

- Loading from image folder: ./data/single_dataset.py

--dataset_mode single

- Loading from HDF5 file: ./data/hdf5_dataset.py

--dataset_mode hdf5

- Loading from torchvision: ./data/torchvision_dataset.py

--dataset_mode torchvision

-

Two-player GANs: ./models/two_player_gan_model.py

--model two_player_gan -

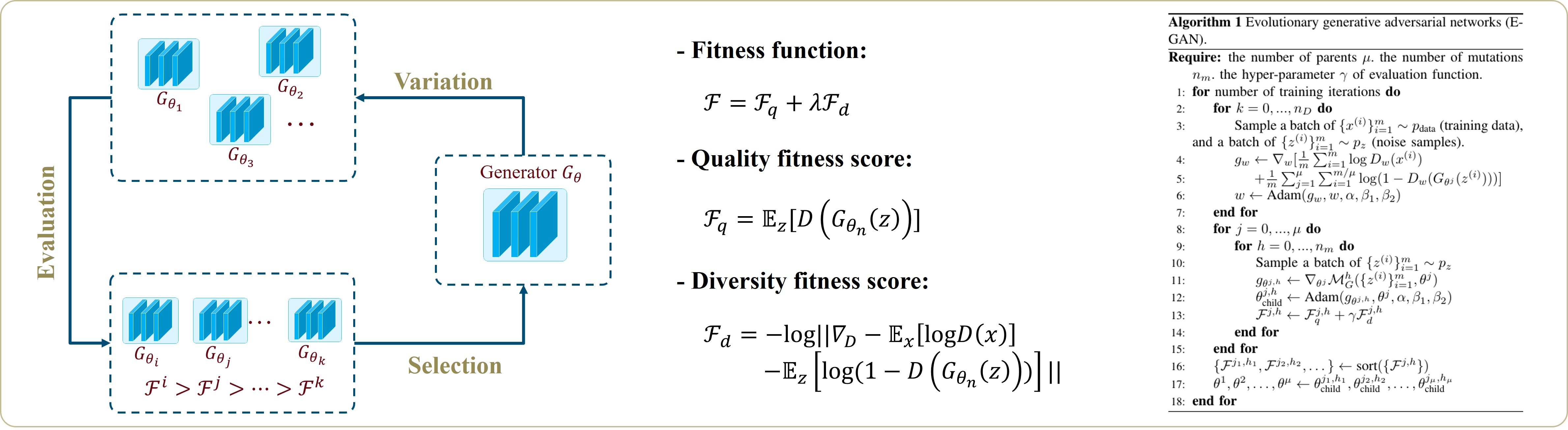

EvolutionaryGAN: ./models/egan_model.py

--model egan

- DCGAN-based networks architecture: ./models/DCGAN_nets.py

--netD DCGAN_cifar10 --netG DCGAN_cifar10

More architectures will be added.

Standard GANs: --which_D S

After the GAN was first proposed by Goodfellow et al., many different adversarial losses have been devised. Generally, they can be described in the following way:

By defining different functions f(.) and g(.), different standard GAN losses are obtained.

Relativistic Average GANs: --which_D Ra

Alexia Jolicoeur-Martineau proposed relativistic average GANs (RaGANs) for GAN training. Their general loss function can be formulated as below:

Note that the functions f(.) and g(.) are defined as in standard GANs, but the average terms over both real and fake images are additionally taken into account.
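To make the difference between the two forms concrete, here is a minimal framework-free sketch. It assumes the usual formulation from the relativistic GAN paper: the standard form uses each critic output as-is, while the relativistic average form subtracts the mean critic output of the opposite set. The least-squares loss is used only as an example, and all function names are hypothetical rather than taken from this repository.

```python
from statistics import mean


def standard_inputs(real_scores, fake_scores):
    """Standard form (--which_D S): critic outputs are used unchanged."""
    return list(real_scores), list(fake_scores)


def relativistic_average_inputs(real_scores, fake_scores):
    """Ra form (--which_D Ra): score minus the mean score of the opposite set."""
    real_mean, fake_mean = mean(real_scores), mean(fake_scores)
    rel_real = [r - fake_mean for r in real_scores]
    rel_fake = [f - real_mean for f in fake_scores]
    return rel_real, rel_fake


def ls_discriminator_loss(real_in, fake_in, fake_target):
    """Least-squares D loss: pull real inputs to +1 and fake inputs to fake_target."""
    real_term = mean((r - 1.0) ** 2 for r in real_in)
    fake_term = mean((f - fake_target) ** 2 for f in fake_in)
    return real_term + fake_term


if __name__ == "__main__":
    real, fake = [2.0, 1.0], [-1.0, 0.0]  # toy critic outputs
    # Standard LSGAN uses targets 1 (real) and 0 (fake).
    print(ls_discriminator_loss(*standard_inputs(real, fake), fake_target=0.0))
    # RaLSGAN uses targets +1 (real) and -1 (fake) on the relative scores.
    print(ls_discriminator_loss(*relativistic_average_inputs(real, fake), fake_target=-1.0))
```

The per-sample loss stays the same in both cases; only the quantity it is applied to changes.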

Setting --which_D selects the general form of the GAN losses. Then, --d_loss_mode and --g_loss_mode determine the specific losses of the discriminator and the generator. Specifically,

- The original GAN (or minimax GAN) losses:

--d_loss_mode vanilla or --g_loss_mode vanilla.

- The non-saturating GAN losses:

--d_loss_mode nsgan or --g_loss_mode nsgan.

- The Least-Squares GAN losses:

--d_loss_mode lsgan or --g_loss_mode lsgan.

- The Wasserstein GAN losses:

--d_loss_mode wgan or --g_loss_mode wgan. Note that the gradient penalty term should be added with --use_gp.

- The Hinge GAN losses:

--d_loss_mode hinge or --g_loss_mode hinge.

- The Relativistic Standard GAN losses:

--d_loss_mode rsgan or --g_loss_mode rsgan.

Note that, in practice, different kinds of g_loss and d_loss can be combined, and the GP term can also be added to the training of any discriminator.
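The loss modes listed above can be sketched in plain Python using their common textbook forms on raw (pre-sigmoid) discriminator outputs. This is an illustrative assumption, not a copy of the repository's implementation: the helper names d_loss and g_loss are hypothetical, and the rsgan mode and the gradient penalty are left out for brevity.

```python
import math


def softplus(x):
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def mean(values):
    values = list(values)
    return sum(values) / len(values)


def d_loss(mode, real, fake):
    """Discriminator loss for raw outputs on real and fake batches."""
    if mode in ("vanilla", "nsgan"):
        # -log(sigmoid(real)) - log(1 - sigmoid(fake))
        return mean(softplus(-r) for r in real) + mean(softplus(f) for f in fake)
    if mode == "lsgan":
        return mean((r - 1.0) ** 2 for r in real) + mean(f ** 2 for f in fake)
    if mode == "wgan":
        return mean(fake) - mean(real)
    if mode == "hinge":
        return mean(max(0.0, 1.0 - r) for r in real) + mean(max(0.0, 1.0 + f) for f in fake)
    raise ValueError(f"unknown d_loss_mode: {mode}")


def g_loss(mode, fake):
    """Generator loss for raw discriminator outputs on generated samples."""
    if mode == "vanilla":
        # minimax: minimise log(1 - sigmoid(fake)) = -softplus(fake)
        return mean(-softplus(f) for f in fake)
    if mode == "nsgan":
        # non-saturating: minimise -log(sigmoid(fake)) = softplus(-fake)
        return mean(softplus(-f) for f in fake)
    if mode == "lsgan":
        return mean((f - 1.0) ** 2 for f in fake)
    if mode in ("wgan", "hinge"):
        return -mean(fake)
    raise ValueError(f"unknown g_loss_mode: {mode}")


if __name__ == "__main__":
    # Mixing modes: a hinge discriminator with a non-saturating generator.
    print(d_loss("hinge", [1.5, 0.2], [-0.7, 0.4]), g_loss("nsgan", [-0.7, 0.4]))
```

Because the two functions are independent, any d_loss mode can be paired with any g_loss mode, which mirrors the remark that the losses can be combined.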

Although many Inception-based metrics have been proposed to measure generation performance, Inception Score (IS) and Fréchet Inception Distance (FID) are the two most widely used. Since both were originally computed with TensorFlow code, we adopted the corresponding implementations: the TensorFlow Inception Score code from OpenAI's Improved-GAN and the TensorFlow FID code from TTUR. Setting --score_name IS measures the related scores during training. Note, however, that you will need TensorFlow 1.3 or earlier installed, as TF1.4+ breaks the original IS code.

The PyTorch versions of the Inception metrics were adopted from BigGAN-PyTorch. To use them, simply set --use_pytorch_scores. However, these scores differ from the ones you would get with the official TF Inception code, and are only for monitoring purposes.

- Preparing .npz files for PyTorch Inception metrics evaluation (cifar10 as an example):

python inception_pytorch/calculate_inception_moments.py --dataset C10 --data_root datasets
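For readers unfamiliar with the Inception Score, the sketch below computes it from a small table of class probabilities using the standard definition, exp of the mean KL divergence between each per-image prediction p(y|x) and the marginal p(y). It is a toy illustration under stated assumptions: real evaluations take the probabilities from a pretrained Inception network and often average over several splits, and the function name inception_score here is hypothetical, not the repository's API.

```python
import math


def inception_score(probs):
    """probs: list of per-image class-probability rows, each summing to 1."""
    n = len(probs)
    num_classes = len(probs[0])
    # Marginal class distribution p(y), averaged over all images.
    marginal = [sum(row[k] for row in probs) / n for k in range(num_classes)]
    kl_sum = 0.0
    for row in probs:
        # KL(p(y|x) || p(y)); zero-probability terms contribute nothing.
        kl_sum += sum(p * math.log(p / m) for p, m in zip(row, marginal) if p > 0.0)
    return math.exp(kl_sum / n)


if __name__ == "__main__":
    confident_and_diverse = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    uninformative = [[0.5, 0.5], [0.5, 0.5]]
    print(inception_score(confident_and_diverse))  # close to the number of classes
    print(inception_score(uninformative))          # the minimum possible score
```

The score is high only when individual predictions are confident and the predicted classes are spread evenly, which is why it is used as a proxy for both quality and diversity.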

If you use this code for your research, please cite our paper.

@article{wang2019evolutionary,
  title={Evolutionary generative adversarial networks},
  author={Wang, Chaoyue and Xu, Chang and Yao, Xin and Tao, Dacheng},
  journal={IEEE Transactions on Evolutionary Computation},
  year={2019},
  publisher={IEEE}
}

Evolving Generative Adversarial Networks | Two Minute Papers #242

The best of GAN papers in the year 2018

PyTorch framework from pytorch-CycleGAN-and-pix2pix.

PyTorch Inception metrics code from BigGAN-PyTorch.

TensorFlow Inception Score code from OpenAI's Improved-GAN.

TensorFlow FID code from TTUR.