Docs:https://www.yuque.com/huangzhongqing/hre6tf/vy6gd2

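Autonomous driving discussion groups, scan the QR code to join: Autonomous Driving Perception (PCL/ROS+DL): Technical Discussion Group Directory (new edition)

I have created a Knowledge Planet (知识星球) community, "Autonomous Driving Perception (PCL/ROS+DL)", focused on autonomous driving perception. It covers both traditional methods (the PCL point cloud library, ROS) and deep learning methods (object detection + semantic segmentation), and also touches the full autonomous driving stack: Apollo, Autoware (based on ROS 2), BEV perception, 3D reconstruction, SLAM (visual + LiDAR), model compression (distillation + pruning + quantization, etc.), autonomous driving simulation, and dataset annotation and closed-loop data pipelines. Scan the QR code to join, and let's climb to the summit of autonomous driving together!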

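TODO:

- [202212 done] Real-time updates of the latest object detection papers

- [202304 done] Real-time updates of the latest semantic segmentation papers

- [202209 done] Write articles on the object detection frameworks (pcdet+mmdetection3d+det3d+paddle3d)

- In-depth analysis of datasets: kitti & waymo & nuScenes

- Apollo study notes: https://github.com/HuangCongQing/apollo_note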

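Code annotations

- Annotated config YAML file (e.g. pointpillar.yaml): tools/cfgs/kitti_models/pointpillar.yaml

- Detailed walkthrough of the KITTI evaluation (can be adapted to evaluate your own dataset): pcdet/datasets/kitti/kitti_object_eval_python

Code annotation notes for other object detection frameworks (pcdet+mmdetection3d+det3d+paddle3d):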

- pcdet:https://github.com/HuangCongQing/pcdet-note

- mmdetection3d:https://github.com/HuangCongQing/mmdetection3d-note

- det3d: TODO

- paddle3d: TODO

# pointpillars

python train.py --cfg_file=cfgs/kitti_models/pointpillar.yaml --batch_size=4 --epochs=10

TensorRT deployment reference:

Install pcdet toolbox.

pip install -r requirements.txt

python setup.py develop

# centerpoint

python train.py --cfg_file cfgs/kitti_models/centerpoint.yaml --batch_size 4 --epochs 100

## Error: RuntimeError: CUDA error: out of memory

python train.py --cfg_file cfgs/kitti_models/centerpoint_pillar.yaml --batch_size 4 --epochs 100

python demo.py --cfg_file cfgs/kitti_models/centerpoints.yaml --ckpt ../checkpoints/centerpoint_kitti_80.pth --data_path ../testing/velodyne/000003.bin
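The `--data_path` argument above points at a KITTI velodyne `.bin` file. As a minimal sketch, assuming the usual KITTI layout (a flat sequence of little-endian float32 values, four per point: x, y, z, intensity), such a file can be inspected with the standard library alone. `load_kitti_bin` is a hypothetical helper name used for illustration and is not part of OpenPCDet.

```python
import struct


def load_kitti_bin(path):
    """Read a KITTI-style velodyne scan into a list of (x, y, z, intensity) tuples.

    Assumption: the file is a flat run of little-endian float32 values,
    four per point. struct.iter_unpack raises struct.error if the file
    size is not a multiple of 16 bytes.
    """
    with open(path, "rb") as f:
        data = f.read()
    return list(struct.iter_unpack("<4f", data))
```

With NumPy installed, `np.fromfile(path, dtype=np.float32).reshape(-1, 4)` reads the same layout into an (N, 4) array.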

OpenPCDet is a clear, simple, self-contained open source project for LiDAR-based 3D object detection.

It is also the official code release of [PointRCNN], [Part-A^2 net] and [PV-RCNN].


[2020-11-27] Bug fix: Please re-prepare the validation infos of the Waymo dataset (version 1.2) if you would like to use our provided Waymo evaluation tool (see PR). Note that you do not need to re-prepare the training data and ground-truth database.

[2020-11-10] NEW: The Waymo Open Dataset is now supported with state-of-the-art results. Currently we provide the configs and results of SECOND, PartA2 and PV-RCNN on the Waymo Open Dataset, and more models can easily be supported by modifying their dataset configs.

[2020-08-10] Bug fix: The provided NuScenes models have been updated to fix the loading bugs. Please redownload them if you need to use the pretrained NuScenes models.

[2020-07-30] OpenPCDet v0.3.0 is released with the following features:

- The Point-based and Anchor-Free models (PointRCNN, PartA2-Free) are supported now.

- The NuScenes dataset is supported with strong baseline results (SECOND-MultiHead (CBGS) and PointPillar-MultiHead).

- Higher efficiency than the last version; PyTorch 1.1~1.7 and spconv 1.0~1.2 are supported simultaneously.

[2020-07-17] Added simple visualization code and a quick demo to test with custom data.

[2020-06-24] OpenPCDet v0.2.0 is released with pretty new structures to support more models and datasets.

[2020-03-16] OpenPCDet v0.1.0 is released.

Note that we have upgraded PCDet from v0.1 to v0.2 with a substantially new structure to support various datasets and models.

OpenPCDet is a general PyTorch-based codebase for 3D object detection from point cloud.

It currently supports multiple state-of-the-art 3D object detection methods with highly refactored code for both one-stage and two-stage 3D detection frameworks.

Based on the OpenPCDet toolbox, we won the Waymo Open Dataset challenge in the 3D Detection, 3D Tracking, and Domain Adaptation tracks among all LiDAR-only methods, and the Waymo-related models will be released to OpenPCDet soon.

We are actively updating this repo currently, and more datasets and models will be supported soon. Contributions are also welcomed.

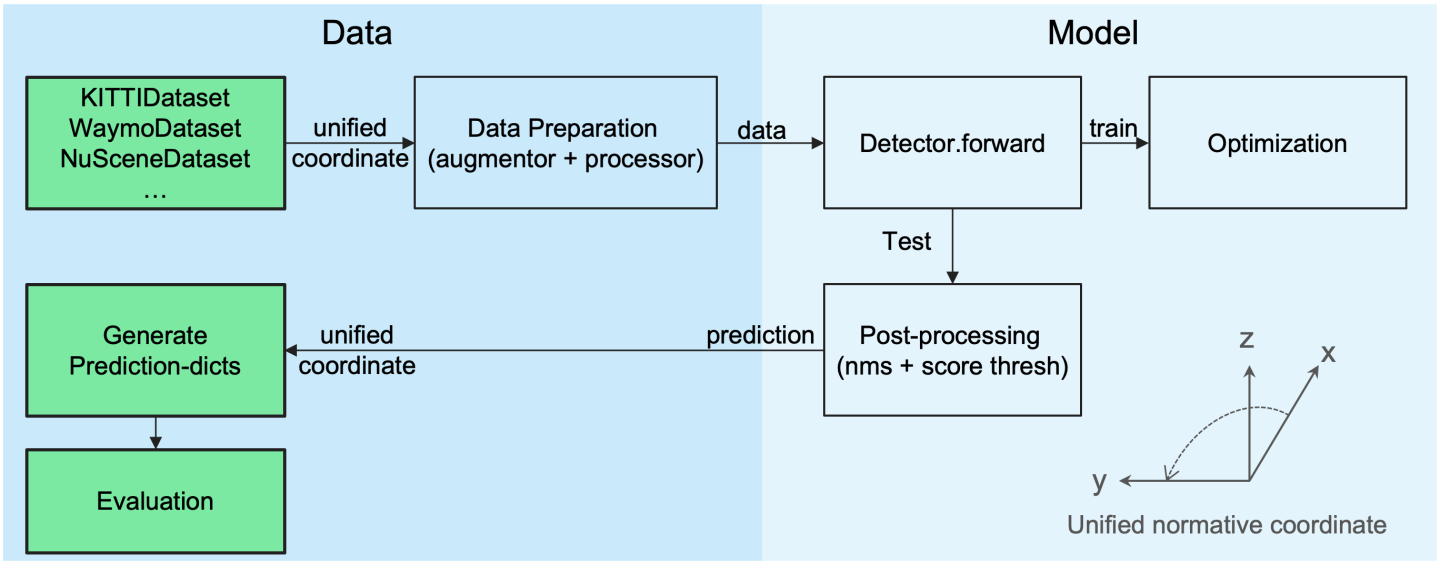

- Data-Model separation with unified point cloud coordinate for easily extending to custom datasets:

- Unified 3D box definition: (x, y, z, dx, dy, dz, heading).

- Flexible and clear model structure to easily support various 3D detection models:

- Support various models within one framework as:

- Support both one-stage and two-stage 3D object detection frameworks

- Support distributed training & testing with multiple GPUs and multiple machines

- Support multiple heads on different scales to detect different classes

- Support stacked version set abstraction to encode various number of points in different scenes

- Support Adaptive Training Sample Selection (ATSS) for target assignment

- Support RoI-aware point cloud pooling & RoI-grid point cloud pooling

- Support GPU version 3D IoU calculation and rotated NMS
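The unified box definition `(x, y, z, dx, dy, dz, heading)` listed above can be made concrete with a small sketch that computes the bird's-eye-view footprint of a box. It assumes that `(x, y, z)` is the box center, that `dx`, `dy`, `dz` are the full extents along the box's own axes, and that `heading` is a counter-clockwise rotation about the z axis in radians. These conventions are assumptions for illustration and should be checked against the codebase. `box_to_bev_corners` is a hypothetical helper, not an OpenPCDet function.

```python
import math


def box_to_bev_corners(box):
    """Return the four bird's-eye-view corners of a 7-DoF box.

    box: (x, y, z, dx, dy, dz, heading), with heading assumed to be a
    counter-clockwise yaw about the z axis in radians.
    """
    x, y, _z, dx, dy, _dz, heading = box
    c, s = math.cos(heading), math.sin(heading)
    # Corners in the box's local frame, before rotation and translation.
    local = [(dx / 2, dy / 2), (dx / 2, -dy / 2),
             (-dx / 2, -dy / 2), (-dx / 2, dy / 2)]
    # Rotate each corner by the heading, then shift to the box center.
    return [(x + c * lx - s * ly, y + s * lx + c * ly) for lx, ly in local]
```

Rotated NMS and BEV IoU operate on footprints like these, which is why a single box convention across datasets keeps those operators dataset-agnostic.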

Selected supported methods are shown in the table below. The results are the 3D detection performance at moderate difficulty on the val set of the KITTI dataset.

- All models are trained with 8 GTX 1080Ti GPUs and are available for download.

- The training time is measured with 8 TITAN XP GPUs and PyTorch 1.5.

| Model | training time | Car@R11 | Pedestrian@R11 | Cyclist@R11 | download |
|---|---|---|---|---|---|
| PointPillar | ~1.2 hours | 77.28 | 52.29 | 62.68 | model-18M |
| SECOND | ~1.7 hours | 78.62 | 52.98 | 67.15 | model-20M |
| PointRCNN | ~3 hours | 78.70 | 54.41 | 72.11 | model-16M |
| PointRCNN-IoU | ~3 hours | 78.75 | 58.32 | 71.34 | model-16M |
| Part-A^2-Free | ~3.8 hours | 78.72 | 65.99 | 74.29 | model-226M |
| Part-A^2-Anchor | ~4.3 hours | 79.40 | 60.05 | 69.90 | model-244M |
| PV-RCNN | ~5 hours | 83.61 | 57.90 | 70.47 | model-50M |
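The `@R11` suffix in the table header is read here as average precision over 11 recall positions (0.0, 0.1, ..., 1.0), as in the original KITTI protocol. That reading is an assumption, and the sketch below is not the evaluation code in `kitti_object_eval_python`. It only illustrates 11-point interpolation on a precision/recall curve that has already been computed.

```python
def ap_r11(recalls, precisions):
    """11-point interpolated average precision.

    For each recall threshold r in {0.0, 0.1, ..., 1.0}, take the highest
    precision among curve points whose recall is at least r (0 if there
    are none), then average the 11 values.
    """
    total = 0.0
    for i in range(11):
        r = i / 10
        candidates = [p for rec, p in zip(recalls, precisions) if rec >= r]
        total += max(candidates, default=0.0)
    return total / 11
```

For example, a curve with points (recall, precision) = (0.2, 1.0), (0.5, 0.8), (1.0, 0.5) contributes 1.0 at three thresholds, 0.8 at three, and 0.5 at five, giving 7.9 / 11.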

All models are trained with 8 GTX 1080Ti GPUs and are available for download.

| Model | mATE | mASE | mAOE | mAVE | mAAE | mAP | NDS | download |
|---|---|---|---|---|---|---|---|---|
| PointPillar-MultiHead | 33.87 | 26.00 | 32.07 | 28.74 | 20.15 | 44.63 | 58.23 | model-23M |
| SECOND-MultiHead (CBGS) | 31.15 | 25.51 | 26.64 | 26.26 | 20.46 | 50.59 | 62.29 | model-35M |
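The NDS column can be reproduced from the other columns. The sketch below uses the nuScenes detection score definition, NDS = (5 · mAP + Σ (1 − min(1, mTP))) / 10, where the sum runs over the five true-positive error metrics. It assumes the table reports every value multiplied by 100. `nds` is a hypothetical helper name.

```python
def nds(m_ap, tp_errors):
    """nuScenes detection score from mAP and the five mean TP errors.

    All inputs are fractions in [0, 1] (errors above 1 are clipped to 1).
    """
    tp_scores = [1.0 - min(1.0, err) for err in tp_errors]
    return (5.0 * m_ap + sum(tp_scores)) / 10.0


# Values from the PointPillar-MultiHead row, converted from percent to fractions.
# Order of errors: mATE, mASE, mAOE, mAVE, mAAE.
pointpillar_nds = nds(0.4463, [0.3387, 0.2600, 0.3207, 0.2874, 0.2015])
print(round(pointpillar_nds * 100, 2))
```

Because mAP carries half of the total weight, a detector can only reach a high NDS if both its detection rate and its box quality (translation, scale, orientation, velocity, attribute) are good.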

We provide the DATA_CONFIG.SAMPLED_INTERVAL setting on the Waymo Open Dataset (WOD) to subsample part of the data for training and evaluation, so you can still experiment with WOD by training on a smaller subset (a larger DATA_CONFIG.SAMPLED_INTERVAL) even if you only have limited GPU resources.

By default, all models are trained with 20% data (~32k frames) of all the training samples on 8 GTX 1080Ti GPUs, and the results of each cell here are mAP/mAPH calculated by the official Waymo evaluation metrics on the whole validation set (version 1.2).

| Model | Vec_L1 | Vec_L2 | Ped_L1 | Ped_L2 | Cyc_L1 | Cyc_L2 |
|---|---|---|---|---|---|---|
| SECOND | 68.03/67.44 | 59.57/59.04 | 61.14/50.33 | 53.00/43.56 | 54.66/53.31 | 52.67/51.37 |
| Part-A^2-Anchor | 71.82/71.29 | 64.33/63.82 | 63.15/54.96 | 54.24/47.11 | 65.23/63.92 | 62.61/61.35 |
| PV-RCNN | 74.06/73.38 | 64.99/64.38 | 62.66/52.68 | 53.80/45.14 | 63.32/61.71 | 60.72/59.18 |

We cannot provide the above pretrained models due to the Waymo Dataset License Agreement, but you can easily achieve similar performance by training with the default configs.
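The effect of DATA_CONFIG.SAMPLED_INTERVAL on the amount of training data can be sketched as follows, assuming the interval means "keep every k-th frame". Under that assumption an interval of 5 keeps 20% of the frames, which matches the default fraction quoted above. `subsample_infos` is a hypothetical helper, not the loader used by OpenPCDet.

```python
def subsample_infos(infos, sampled_interval):
    """Keep every `sampled_interval`-th entry of a list of frame infos.

    An interval of 1 keeps everything; larger intervals keep fewer frames.
    """
    if sampled_interval < 1:
        raise ValueError("sampled_interval must be a positive integer")
    return infos[::sampled_interval]


# Illustrative stand-in for per-frame metadata; real infos would be dicts.
frames = list(range(100))
print(len(subsample_infos(frames, 5)))  # 20 of 100 frames, i.e. 20%
```

Strided sampling like this keeps frames spread across every recorded sequence rather than taking a contiguous prefix, so a subset still covers the variety of scenes in the full set.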

More datasets are on the way.

Please refer to INSTALL.md for the installation of OpenPCDet.

Please refer to DEMO.md for a quick demo to test with a pretrained model and visualize the predicted results on your custom data or the original KITTI data.

Please refer to GETTING_STARTED.md to learn more about how to use this project.

OpenPCDet is released under the Apache 2.0 license.

OpenPCDet is an open source project for LiDAR-based 3D scene perception that supports multiple LiDAR-based perception models as shown above. Some parts of PCDet are adapted from the officially released code of the supported methods listed above.

We would like to thank the authors for their proposed methods and official implementations.

We hope that this repo could serve as a strong and flexible codebase to benefit the research community by speeding up the process of reimplementing previous works and/or developing new methods.

If you find this project useful in your research, please consider citing:

@misc{openpcdet2020,

title={OpenPCDet: An Open-source Toolbox for 3D Object Detection from Point Clouds},

author={OpenPCDet Development Team},

howpublished = {\url{https://github.com/open-mmlab/OpenPCDet}},

year={2020}

}

You are welcome to join the OpenPCDet development team by contributing to this repo, and feel free to contact us about any potential contributions.