This is the 4th release of Demucs (v4), featuring Hybrid Transformer based source separation.

For the classic Hybrid Demucs (v3), go to this commit.

If you are experiencing issues and want the old Demucs back, please file an issue, and then you can get back to v3 with `git checkout v3`. You can also go to Demucs v2.

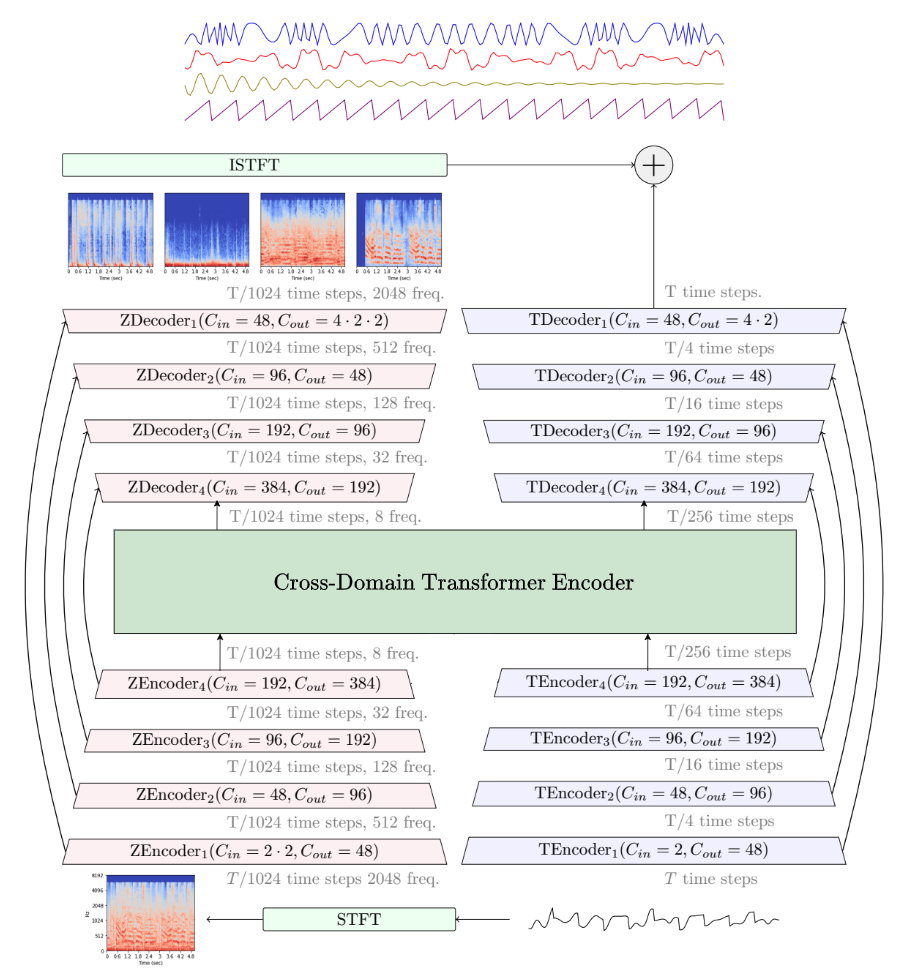

Demucs is a state-of-the-art music source separation model, currently capable of separating drums, bass, vocals and other sounds from the rest of the accompaniment. It is based on a U-Net convolutional architecture inspired by Wave-U-Net. The v4 version features Hybrid Transformer Demucs, a hybrid spectrogram/waveform separation model using Transformers. It is based on Hybrid Demucs (also provided in this repository), with the innermost layers replaced by a cross-domain Transformer Encoder. This Transformer uses self-attention within each domain, and cross-attention across domains.

The model achieves an SDR of 9.00 dB on the MUSDB HQ test set. Moreover, when using sparse attention kernels to extend its receptive field and per-source fine-tuning, we achieve a state-of-the-art 9.20 dB of SDR. (The Sparse Hybrid Transformer model described in our paper isn't provided, as it requires custom CUDA code that isn't ready for release yet.)

Samples are available on our sample page. Check out our paper for more information.

See the release notes for more details.

- 22/02/2023
  - Added support for the SDX 2023 Challenge (see the dedicated doc page).
- 07/12/2022
  - Added Demucs v4 on PyPI.
  - Released `htdemucs_6s`.
  - `htdemucs` model now used by default.
- 16/11/2022
  - Added the new Hybrid Transformer Demucs v4 models.
  - Adding support for the torchaudio implementation of HDemucs.
- 30/08/2022
  - Added reproducibility and ablation grids along with an updated version of the paper.
- 17/08/2022
  - Releasing v3.0.5
  - Set split segment length to reduce memory.
  - Compatible with PyTorch 1.12.
- 24/02/2022
  - Releasing v3.0.4
  - `--two-stems` split method (i.e. karaoke mode).
  - `float32` or `int24` export support.
- 17/12/2021
  - Releasing v3.0.3
  - Bug fixes (@keunwoochoi)
  - Memory drastically reduced on GPU (@famzah)
  - New multi-core evaluation on CPU (`-j` flag).
- 12/11/2021
  - Releasing Demucs v3 with hybrid domain separation.
  - Strong improvements on all sources. This is the model that won the Sony MDX challenge.
- 11/05/2021
  - Adding support for MusDB-HQ and arbitrary wav sets, for the MDX challenge. For more information on joining the challenge with Demucs, see the Demucs MDX instructions.

We provide hereafter a summary of the different metrics presented in the paper.

You can also compare Hybrid Demucs (v3), KUIELAB-MDX-Net, Spleeter, Open-Unmix, Demucs (v1), and Conv-Tasnet on one of my favorite songs on my SoundCloud playlist.

We refer the reader to our paper for more details.

| Model | Domain | Extra data | Overall SDR (1) | MOS Quality (2) | MOS Contamination (3) |
|---|---|---|---|---|---|
| Wave-U-Net | Waveform | ❌ | 3.2 | - | - |
| Open-Unmix | Spectrogram | ❌ | 5.3 | - | - |
| D3_Net | Spectrogram | ❌ | 6.0 | - | - |
| Conv-Tasnet | Waveform | ❌ | 5.7 | - | - |
| Demucs (v2) | Waveform | ❌ | 6.3 | 2.37 | 2.36 |
| ResUNetDecouple+ | Spectrogram | ❌ | 6.7 | - | - |
| KUIELAB-MDX-Net | Hybrid | ❌ | 7.5 | 2.86 | 2.55 |
| Band-Split RNN | Spectrogram | ❌ | 8.2 | - | - |
| Hybrid Demucs (v3) | Hybrid | ❌ | 7.7 | 2.83 | 3.04 |
| MMDenseLSTM | Spectrogram | 804 songs | 6.0 | - | - |
| D3_Net | Spectrogram | 1500 songs | 6.7 | - | - |
| Spleeter | Spectrogram | 25000 songs | 5.9 | - | - |
| Band-Split RNN | Spectrogram | 1700 mixes | 9.0 | - | - |
| HT Demucs f.t. (v4) | Hybrid | 800 songs | 9.0 | - | - |

1 - Mean of the SDR for each of the 4 sources.

2 - Rating from 1 to 5 of the naturalness and absence of artifacts given by human listeners. (5 = no artifacts)

3 - Rating from 1 to 5 with 5 being zero contamination by other sources.
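Footnote 1 defines the overall SDR as the mean of the SDR over the four sources. The following is a minimal sketch of that averaging step only. The helper name `overall_sdr` is hypothetical and the per-source values are placeholders chosen for illustration, not results from the paper.

```python
from statistics import mean

# The four sources separated by the default models.
SOURCES = ("drums", "bass", "other", "vocals")


def overall_sdr(per_source_sdr):
    """Return the mean SDR (in dB) over the four sources."""
    missing = [s for s in SOURCES if s not in per_source_sdr]
    if missing:
        raise ValueError(f"missing SDR for: {missing}")
    return mean(per_source_sdr[s] for s in SOURCES)


# Placeholder values for illustration only, NOT measured results.
example = {"drums": 8.0, "bass": 9.0, "other": 6.0, "vocals": 9.0}
print(f"overall SDR: {overall_sdr(example):.2f} dB")
```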

- Python >= 3.7
- See `requirements_minimal.txt` for requirements for separation only.
- See `requirements.txt` / `environment-[cpu|cuda].yml` for training purposes.

Every time you see `python3`, replace it with `sys.executable` / `python.exe`. You should always run commands from the Anaconda console.

If you just want to use Demucs to separate tracks, you can install it with:

    # Basic installation
    pip install -U demucs

    # Bleeding-edge version, directly from this repository
    pip install -U git+https://github.com/facebookresearch/demucs#egg=demucs

Advanced OS support is provided on the following pages; you must read the page for your OS before posting an issue.

If you have Anaconda installed, you can run the following from the root of this repository. This will create a `demucs` environment with all the dependencies installed:

    conda env update -f environment-cpu.yml   # If you don't have GPUs
    conda env update -f environment-cuda.yml  # If you have GPUs
    conda activate demucs
    pip install -e .

You will also need to install soundtouch for pitch/tempo augmentation:

- Linux: `sudo apt-get install soundstretch`
- Mac OS X: `brew install sound-touch`

- Thanks to @xserrat, there is now a Docker image definition ready for using Demucs. This can ensure all libraries are correctly installed without interfering with the host OS.

Please note that transfer speeds with Colab are slow for large media files, but it will allow you to use Demucs without installing anything.

- @CarlGao4 has released a GUI for Demucs. Downloads for Windows and macOS are available here. Use the FossHub mirror to speed up your download.
- @Anjok07 is providing a self-contained GUI in UVR (Ultimate Vocal Remover) that supports Demucs.

- MVSep: free online separation with multiple Demucs models.
- AudioStrip: free online separation with Demucs.

In order to try Demucs, you can just run the following from any folder (as long as you properly installed it):

    demucs PATH_TO_AUDIO_FILE_1 [PATH_TO_AUDIO_FILE_2 ...]   # for Demucs
    # If you used "pip install --user" you might need to replace demucs with python3 -m demucs
    # If your filename contains spaces, don't forget to quote it!
    python3 -m demucs --mp3 --mp3-bitrate BITRATE "PATH_TO_AUDIO_FILE_1"  # Output files saved as MP3
    # You can select different models (listed below) with the "-n" flag
    demucs -n mdx_q "File.mp3"
    # If you only want to separate vocals out of an audio file, use `--two-stems=vocals` (you can also set it to drums or bass)
    demucs --two-stems=vocals "File.mp3"

If you have a GPU but run out of memory, use `--segment SEGMENT` to reduce the length of each split. `SEGMENT` should be an integer. A value of at least 10 is recommended (the bigger the number, the more memory is required, but quality may increase). Setting the environment variable `PYTORCH_NO_CUDA_MEMORY_CACHING=1` also helps. If this still does not help, add `-d cpu` to the command line. See the section hereafter for more details on the memory requirements for GPU acceleration.

Separated tracks are stored in the `separated/MODEL_NAME/TRACK_NAME` folder. There you will find four stereo wave (or MP3, if you used the `--mp3` flag) files sampled at 44.1 kHz:

- `drums.wav`
- `bass.wav`
- `other.wav`
- `vocals.wav`

- All audio formats supported by `torchaudio` can be processed (e.g. WAV, MP3, FLAC, Ogg/Vorbis on Linux/Mac OS X, etc.).
  - On Windows, `torchaudio` has limited support, so we rely on `FFmpeg`, which should support pretty much anything.
- Audio is resampled on the fly if necessary.
- The output will be a wave file encoded as int16, unless another flag is used.
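As a rough illustration of the `separated/MODEL_NAME/TRACK_NAME` layout described above, the sketch below lists the stem files one would expect for a given input. The helper `expected_outputs` is hypothetical, and it assumes that `TRACK_NAME` is the input file name without its extension, which is not stated explicitly here.

```python
from pathlib import Path

# Stems produced by the default four-source models.
STEMS = ("drums", "bass", "other", "vocals")


def expected_outputs(track, model_name="htdemucs", out_root="separated", mp3=False):
    """List the stem files expected for one input track.

    Assumption: TRACK_NAME is the input file name without its extension.
    """
    ext = "mp3" if mp3 else "wav"
    folder = Path(out_root) / model_name / Path(track).stem
    return [folder / f"{stem}.{ext}" for stem in STEMS]


for path in expected_outputs("My Song.mp3"):
    print(path.as_posix())
```

Passing `mp3=True` mirrors the `--mp3` flag by switching the extension of the listed files.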

It can happen that the output would need clipping, in particular due to some separation artifacts. Demucs will automatically rescale each output stem so as to avoid clipping. This can however break the relative volume between stems. If instead you prefer hard clipping, pass `--clip-mode clamp`. You can also try to reduce the volume of the input mixture before feeding it to Demucs.
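To make the difference between the two behaviours concrete, here is a toy sketch on plain Python lists. It only illustrates the idea of rescaling versus hard clipping. The function names are hypothetical, and the exact scaling factor and thresholds used by Demucs may differ.

```python
def rescale(stem):
    """Scale the whole stem down so that its peak fits in [-1, 1]."""
    peak = max((abs(x) for x in stem), default=0.0)
    if peak <= 1.0:
        return list(stem)  # already in range, nothing to do
    return [x / peak for x in stem]


def clamp(stem):
    """Hard-clip each sample to [-1, 1], leaving in-range samples untouched."""
    return [max(-1.0, min(1.0, x)) for x in stem]


stem = [0.5, -2.0, 1.0]
print(rescale(stem))  # every sample is scaled by the same factor
print(clamp(stem))    # only out-of-range samples change
```

Because rescaling is applied per stem, two stems with different peaks end up scaled by different factors, which is how the relative volume between stems can change.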

The list of pre-trained models:

| Code name | Description |
|---|---|
| `htdemucs` | First version of Hybrid Transformer Demucs. Trained on MusDB + 800 songs. Default model. |
| `htdemucs_ft` | Fine-tuned version of `htdemucs`. Separation will take 4 times longer than `htdemucs`, in exchange for better quality. Same training set as `htdemucs`. |
| `htdemucs_6s` | 6-source version of `htdemucs`, with `piano` and `guitar` added as sources. Note that the `piano` source is not working great at the moment. |
| `hdemucs_mmi` | Hybrid Demucs v3, retrained on MusDB + 800 songs. |
| `mdx` | Trained only on MusDB HQ. Winning model on track A at the MDX challenge. |
| `mdx_extra` | Trained with extra training data (including MusDB test set). Ranked 2nd on track B of the MDX challenge. |
| `mdx_q`, `mdx_extra_q` | Quantized versions of the previous models. Smaller download and storage at the cost of worse quality. |
| `SIG` | Where `SIG` is a single model from the model zoo. |

- `--two-stems=STEM_NAME`
  - Separate `STEM_NAME` from the rest. `STEM_NAME` can be any source in the selected model (e.g. `vocals`).
  - This will mix the files after separating the mix fully, so this won't be faster or use less memory.
- `--shifts=n`
  - Performs multiple predictions with random shifts (the shift trick) of the input and averages them.
  - This makes prediction `n` times slower.
  - Don't use it unless you have a GPU!
- `--overlap=n`
  - Controls the amount (`n`) of overlap between prediction windows. Default is 0.25 (25%), which is probably fine.
  - It can probably be reduced to 0.1 to improve speed a bit.
- `-j=n`
  - Specify a number of parallel jobs (`n`). Default is `1`.
  - This will multiply the RAM used by the same amount, so be careful!
- `--mp3-bitrate`
  - Default is `320` (kb/s).
- `--mp3`
  - Save stems as MP3 files.
- `--float32` / `--int24`
  - File bit depth.
  - Not applicable if the `--mp3` flag is used.
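The shift trick behind `--shifts` can be sketched on plain Python lists as follows. This is a toy illustration under stated assumptions, not the Demucs implementation: `apply_with_shifts`, `max_shift` and `seed` are hypothetical names, the "model" is any function that returns a list of the same length as its input, and real separation operates on audio tensors.

```python
import random


def apply_with_shifts(model, signal, shifts=2, max_shift=4, seed=0):
    """Average `model` predictions over randomly shifted copies of `signal`."""
    rng = random.Random(seed)
    length = len(signal)
    # Zero-pad both sides so every shifted view still contains the whole signal.
    padded = [0.0] * max_shift + list(signal) + [0.0] * max_shift
    total = [0.0] * length
    for _ in range(shifts):
        offset = rng.randint(0, max_shift)
        shifted = padded[offset:length + max_shift]
        prediction = model(shifted)  # one full model call per shift
        # The original signal starts (max_shift - offset) samples into `shifted`.
        aligned = prediction[max_shift - offset:]
        total = [t + a for t, a in zip(total, aligned)]
    return [t / shifts for t in total]


def double(samples):
    """Toy stand-in for a separation model."""
    return [2 * v for v in samples]


print(apply_with_shifts(double, [1.0, -0.5, 0.25], shifts=2))
```

The loop calls the model once per shift, which is why prediction time grows linearly with `n`.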

If you want to use GPU acceleration, you will need at least 3GB of RAM on your GPU for Demucs. However, about 7GB of RAM will be required if you use the default arguments. Add `--segment SEGMENT` to change the size of each split. If you only have 3GB of memory, set `SEGMENT` to 8 (though quality may be worse if this argument is too small). Setting the environment variable `PYTORCH_NO_CUDA_MEMORY_CACHING=1` can help users with even less RAM, such as 2GB (I separated a 4-minute track using only 1.5GB), but this makes the separation slower.

If you do not have enough memory on your GPU, simply add -d cpu to the command line to use the CPU. With Demucs, processing time should be roughly equal to 1.5 times the duration of the track.
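For scripted use, the memory-related options above can be combined in a small wrapper. The sketch below is one possible way to do it. The helper names are hypothetical, only the flags mentioned in this document (`--segment`, `-d`) are used, and `separate` assumes the `demucs` command is installed and on the `PATH`.

```python
import os
import subprocess


def build_demucs_command(tracks, segment=None, device=None):
    """Build the demucs command line as a list of arguments."""
    cmd = ["demucs"]
    if segment is not None:
        cmd += ["--segment", str(int(segment))]  # SEGMENT must be an integer
    if device is not None:
        cmd += ["-d", device]  # e.g. "cpu" when GPU memory is insufficient
    return cmd + list(tracks)


def low_memory_env():
    """Copy the current environment and disable CUDA memory caching."""
    env = dict(os.environ)
    env["PYTORCH_NO_CUDA_MEMORY_CACHING"] = "1"
    return env


def separate(tracks, **options):
    """Run Demucs in a subprocess; requires `demucs` to be installed."""
    cmd = build_demucs_command(tracks, **options)
    return subprocess.run(cmd, env=low_memory_env(), check=True)


print(build_demucs_command(["File.mp3"], segment=8))
print(build_demucs_command(["File.mp3"], device="cpu"))
```

Passing the arguments as a list avoids shell quoting problems with file names that contain spaces.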

If you want to train (Hybrid) Demucs, please follow the training doc.

In order to reproduce the results from the Track A and Track B submissions, please check out the MDX Hybrid Demucs submission repository.

    @inproceedings{rouard2022hybrid,
      title={Hybrid Transformers for Music Source Separation},
      author={Rouard, Simon and Massa, Francisco and D{\'e}fossez, Alexandre},
      booktitle={ICASSP 23},
      year={2023}
    }

    @inproceedings{defossez2021hybrid,
      title={Hybrid Spectrogram and Waveform Source Separation},
      author={D{\'e}fossez, Alexandre},
      booktitle={Proceedings of the ISMIR 2021 Workshop on Music Source Separation},
      year={2021}
    }

Demucs is released under the MIT license as found in the LICENSE file.