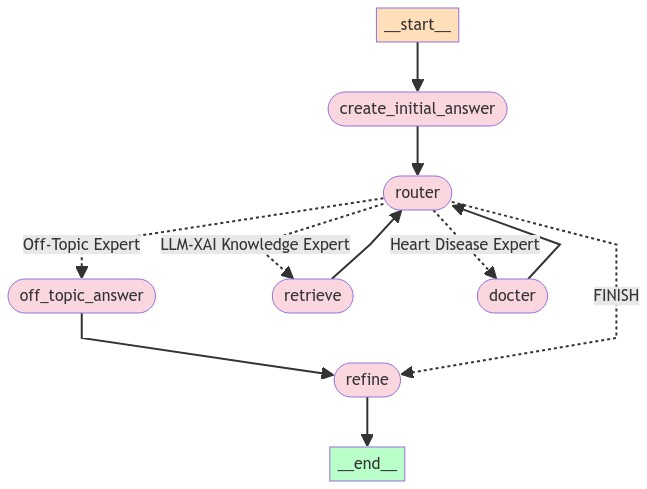

This is my third version of a QA chatbot built with LangChain. Its advantage is that it combines the benefits of a router chain and an agent: you can define your own workflow, and a supervisor node decides whether the skills or knowledge of other nodes are needed. These nodes act like experts with specific expertise (e.g., proficiency in LLM fine-tuning or medical knowledge). With the current implementation you can always expect to receive a response, whereas an agent sometimes returns no response because it may fail to reach a decision within a certain time limit.

The disadvantage is that it takes longer: every intermediate result has to be returned to the supervisor node, which then decides whether to stop or to continue with another appropriate expert.

To make better use of chat memory, I use an LLM node to generate an initial answer. For example, if the first question is "What is explainable AI?" and the next one is "Why is it important?", the supervisor node may mistakenly consider the latter off-topic and route it to the Off-Topic Expert. However, an LLM that has the chat memory, even without knowing our topics, can generate an initial answer containing keywords such as "explainable AI." When the supervisor node sees both the question and the initial answer, it will recognize that the question is relevant to our topics.
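The effect of the initial answer can be illustrated with a toy keyword router standing in for the supervisor. This is only a sketch under assumptions: the topic keywords, the expert names, and the `route` function are hypothetical, and the real supervisor is an LLM rather than a keyword matcher.

```python
# Hypothetical topic keywords per expert; the real supervisor is an LLM.
TOPIC_KEYWORDS = {
    "XAI Expert": ["explainable ai", "xai", "interpretability"],
    "LLM Expert": ["fine-tuning", "large language model", "llm"],
}
OFF_TOPIC = "Off-Topic Expert"


def route(question: str, initial_answer: str = "") -> str:
    """Pick an expert by looking at the question plus the optional initial answer."""
    text = f"{question} {initial_answer}".lower()
    for expert, keywords in TOPIC_KEYWORDS.items():
        if any(keyword in text for keyword in keywords):
            return expert
    return OFF_TOPIC


follow_up = "Why is it important?"
# Without context, the follow-up question looks off-topic.
print(route(follow_up))  # Off-Topic Expert
# An initial answer generated with chat memory names the topic explicitly.
initial = "Explainable AI is important because it builds trust in model decisions."
print(route(follow_up, initial))  # XAI Expert
```

The point is not the matching logic but the input: giving the router the question together with a memory-aware initial answer supplies the topic words that the bare follow-up question lacks.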

The final refine node uses an LLM to maintain the desired format and merge intermediate answers.
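A refine step of this kind amounts to building one prompt that holds the question, the intermediate answers, and the formatting instructions, and then sending it to an LLM. The sketch below only assembles that prompt; the template wording and the `build_refine_prompt` helper are hypothetical, and the LLM call itself is left out.

```python
from typing import Mapping

# Hypothetical template; the project's real refine prompt may differ.
REFINE_TEMPLATE = """You are given a user question and answers from several experts.
Merge them into a single answer. Keep this format:
{format_instructions}

Question: {question}

Expert answers:
{expert_answers}
"""


def build_refine_prompt(
    question: str,
    intermediate_answers: Mapping[str, str],
    format_instructions: str = "A concise answer followed by a list of sources.",
) -> str:
    """Assemble the refine-node prompt from the intermediate answers."""
    expert_answers = "\n".join(
        f"- {expert}: {answer}" for expert, answer in intermediate_answers.items()
    )
    return REFINE_TEMPLATE.format(
        format_instructions=format_instructions,
        question=question,
        expert_answers=expert_answers,
    )


prompt = build_refine_prompt(
    "What is explainable AI?",
    {
        "Initial Answer": "XAI makes model decisions understandable.",
        "XAI Expert": "XAI covers methods such as SHAP and LIME.",
    },
)
print(prompt)
```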

To prevent the supervisor node from getting stuck calling the same expert repeatedly, I enforce a rule that each expert can be used at most once. If a question is classified as off-topic, the supervisor does not need to make any further decisions.
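The supervisor loop, including the use-at-most-once rule and the off-topic short-circuit, can be sketched in plain Python. Assumptions: `decide` stands in for the supervisor LLM, the experts are plain functions, and none of these names come from the project itself.

```python
from typing import Callable, Dict, List, Optional, Set, Tuple

OFF_TOPIC = "Off-Topic Expert"
Expert = Callable[[str], str]  # takes the question, returns an intermediate answer
Step = Tuple[str, str]  # (node name, answer)
# decide(question, results so far, available experts) -> expert name, or None to stop
Decide = Callable[[str, List[Step], List[str]], Optional[str]]


def run_supervisor(
    question: str,
    initial_answer: str,
    experts: Dict[str, Expert],
    decide: Decide,
) -> List[Step]:
    """Call experts chosen by the supervisor until it stops; each expert runs at most once."""
    results: List[Step] = [("Initial Answer", initial_answer)]
    used: Set[str] = set()
    while True:
        # Only experts that have not been used yet are offered to the supervisor.
        available = [name for name in experts if name not in used]
        choice = decide(question, results, available)
        if choice is None or choice not in available:
            break  # supervisor chose to stop, or asked for an expert it may not reuse
        used.add(choice)
        results.append((choice, experts[choice](question)))
        if choice == OFF_TOPIC:
            break  # an off-topic question needs no further decisions
    return results


def first_unused(question: str, results: List[Step], available: List[str]) -> Optional[str]:
    # Toy supervisor: always pick the first expert that has not been used.
    return available[0] if available else None


experts = {
    "XAI Expert": lambda q: "XAI answer",
    "LLM Expert": lambda q: "LLM answer",
}
steps = run_supervisor("What is XAI?", "initial answer", experts, first_unused)
print([name for name, _ in steps])  # ['Initial Answer', 'XAI Expert', 'LLM Expert']
```

Because every iteration either stops or marks a new expert as used, the loop is guaranteed to terminate even if the supervisor keeps asking for the same expert.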

- Create and activate a Conda environment:

`conda create -y -n chatbot python=3.11`

`conda activate chatbot`

- Install required packages:

`pip install -r requirements.txt`

- Set up environment variables:

  - Write your `OPENAI_API_KEY` in the `.env` file. A template can be found in `.env.example`.
  - Load the variables: `source .env`
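If you prefer to load the `.env` file from Python rather than from the shell, a minimal stdlib parser is sketched below. It is an assumption-laden stand-in for a package such as python-dotenv: it only handles simple `KEY=VALUE` lines, optional `export` prefixes, comments, and one pair of surrounding quotes. The `load_env_file` and `require_env` names are hypothetical.

```python
import os
from pathlib import Path
from typing import Dict


def load_env_file(path: str = ".env", override: bool = False) -> Dict[str, str]:
    """Parse simple KEY=VALUE lines and copy them into os.environ."""
    loaded: Dict[str, str] = {}
    for raw_line in Path(path).read_text(encoding="utf-8").splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue  # skip blanks, comments, and malformed lines
        if line.startswith("export "):
            line = line[len("export "):]
        key, value = line.split("=", 1)
        key, value = key.strip(), value.strip()
        # Drop one pair of matching surrounding quotes, if present.
        if len(value) >= 2 and value[0] == value[-1] and value[0] in "'\"":
            value = value[1:-1]
        loaded[key] = value
        # By default, variables already set in the environment win.
        if override or key not in os.environ:
            os.environ[key] = value
    return loaded


def require_env(name: str) -> str:
    """Return an environment variable or fail with a readable message."""
    value = os.environ.get(name)
    if not value:
        raise RuntimeError(f"{name} is not set; add it to your .env file.")
    return value
```

Usage: call `load_env_file()` at startup, then `require_env("OPENAI_API_KEY")` to fail early with a clear message instead of a confusing error deep inside the first LLM call.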

To start the application, use the following command:

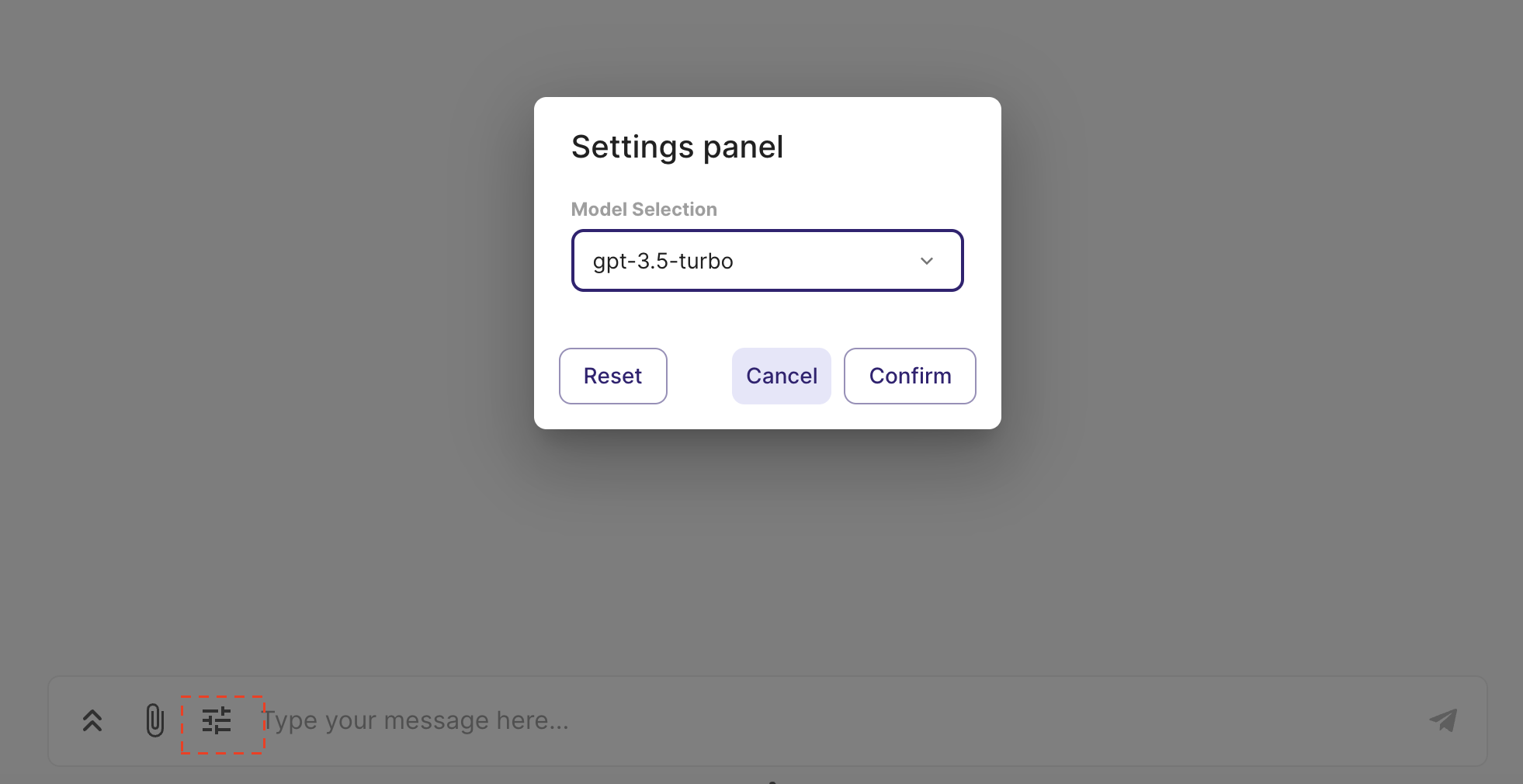

chainlit run app.pyUsers have the option to select the specific LLM (language learning model) they prefer for generating responses. The switch between different LLMs can be accomplished within a single conversation session.
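Switching models mid-conversation comes down to storing the selected model name in per-session state and looking it up for every new message. The framework-free sketch below assumes hypothetical model names and a hypothetical `ModelRegistry` class; in Chainlit, this kind of per-session state would typically live in `cl.user_session`. It also tags each reply with the model that produced it.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict

# Hypothetical generator type: takes a prompt, returns a completion.
Generator = Callable[[str], str]


@dataclass
class ModelRegistry:
    models: Dict[str, Generator]
    default: str
    sessions: Dict[str, str] = field(default_factory=dict)  # session id -> model name

    def select(self, session_id: str, model_name: str) -> None:
        """Switch the model for one session without touching the others."""
        if model_name not in self.models:
            raise ValueError(f"Unknown model: {model_name}")
        self.sessions[session_id] = model_name

    def answer(self, session_id: str, prompt: str) -> str:
        name = self.sessions.get(session_id, self.default)
        # Tag the reply with the model that produced it.
        return f"{self.models[name](prompt)}\n\n(Model: {name})"


registry = ModelRegistry(
    models={"model-a": lambda p: f"A says: {p}", "model-b": lambda p: f"B says: {p}"},
    default="model-a",
)
print(registry.answer("s1", "hi"))
registry.select("s1", "model-b")  # switch within the same session
print(registry.answer("s1", "hi again"))
```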

- Various Information Sources: The chatbot can retrieve information from web pages, YouTube videos, and PDFs.

- Source Display: You can view the source of the information at the end of each answer.

- LLM Model Identification: The specific LLM model utilized for generating the current response is indicated.

- Router retriever: Easy to adapt to different domains, as each domain can be equipped with a different retriever.

- Memory Management: The chatbot is equipped with a conversation memory feature. If the memory exceeds 500 tokens, it is automatically summarized.
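The summarize-when-too-long behavior can be sketched as a buffer that folds its oldest messages into a running summary once a token budget is exceeded. Assumptions: tokens are approximated by whitespace-separated words (a real implementation would use the model's tokenizer), and `first_word_summary` is a placeholder for an LLM summarization call. LangChain's `ConversationSummaryBufferMemory` with `max_token_limit` implements a similar idea.

```python
from typing import Callable, List

MAX_TOKENS = 500  # summarization threshold stated above


def count_tokens(text: str) -> int:
    # Rough approximation: one token per whitespace-separated word.
    return len(text.split())


class SummarizingMemory:
    """Keeps recent messages verbatim and folds older ones into a summary."""

    def __init__(self, summarize: Callable[[str, str], str], max_tokens: int = MAX_TOKENS):
        self.summarize = summarize  # (previous summary, pruned message) -> new summary
        self.max_tokens = max_tokens
        self.summary = ""
        self.messages: List[str] = []

    def total_tokens(self) -> int:
        return count_tokens(self.summary) + sum(count_tokens(m) for m in self.messages)

    def add(self, message: str) -> None:
        self.messages.append(message)
        # Fold the oldest message into the summary until the budget is met,
        # always keeping at least the latest message verbatim.
        while self.total_tokens() > self.max_tokens and len(self.messages) > 1:
            oldest = self.messages.pop(0)
            self.summary = self.summarize(self.summary, oldest)


def first_word_summary(previous: str, message: str) -> str:
    # Placeholder for an LLM summarization call: keeps each message's first word.
    return f"{previous} {message.split()[0]}".strip()


memory = SummarizingMemory(first_word_summary, max_tokens=10)  # small budget for the demo
for text in ["one two three four", "five six seven eight", "nine ten eleven twelve"]:
    memory.add(text)
print(memory.summary)   # one
print(memory.messages)  # ['five six seven eight', 'nine ten eleven twelve']
```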

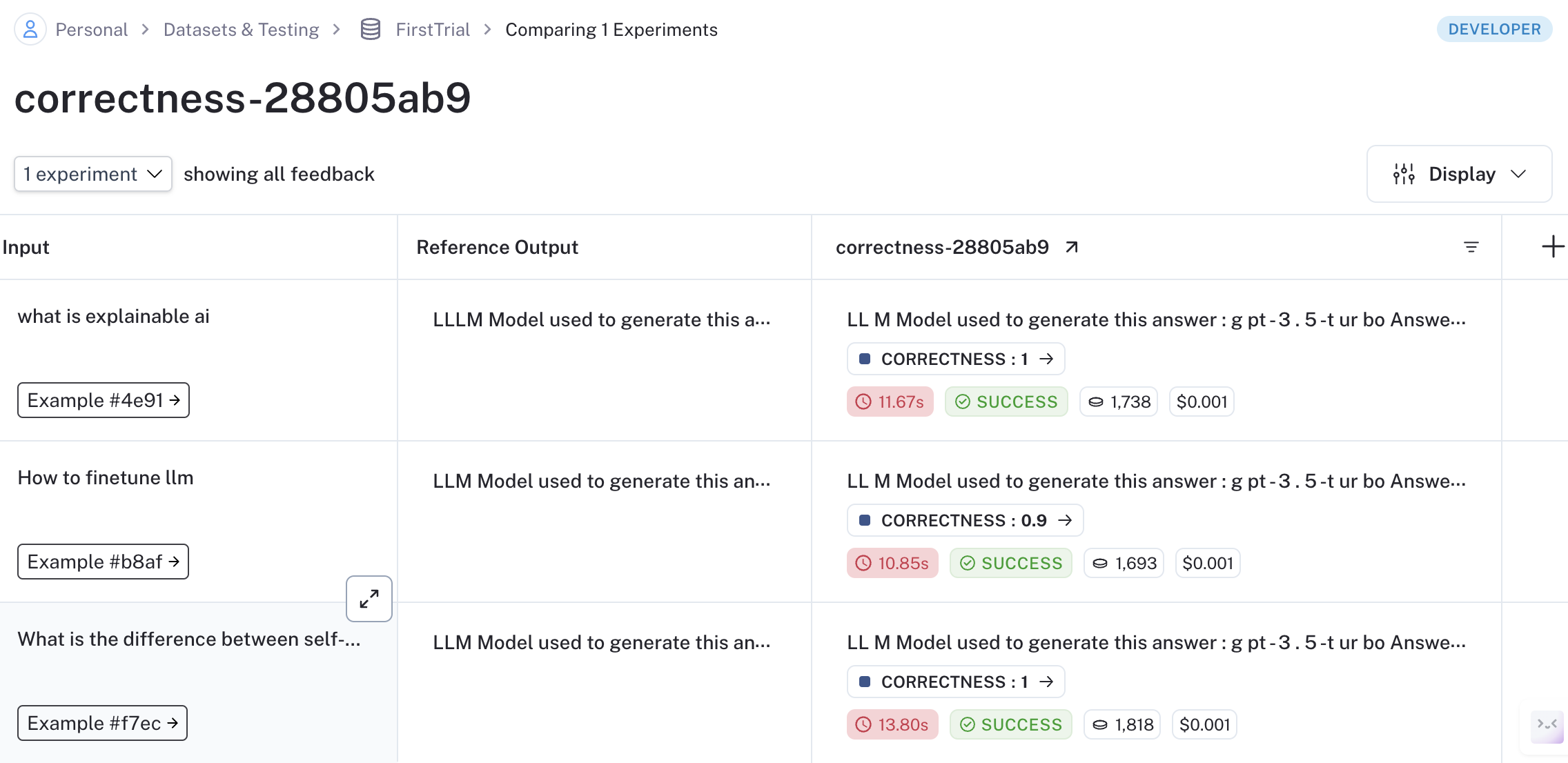

To evaluate model generations against human references, or to log outputs for specific test queries, use LangSmith.

- Register an account at LangSmith.

- Add your `LANGCHAIN_API_KEY` to the `.env` file.

- Execute the script with your dataset name:

`python langsmith_tract.py --dataset_name <YOUR DATASET NAME>`

- Modify the data path in `langsmith_evaluation/config.toml` if necessary (e.g., the path to a CSV file with question and answer pairs).

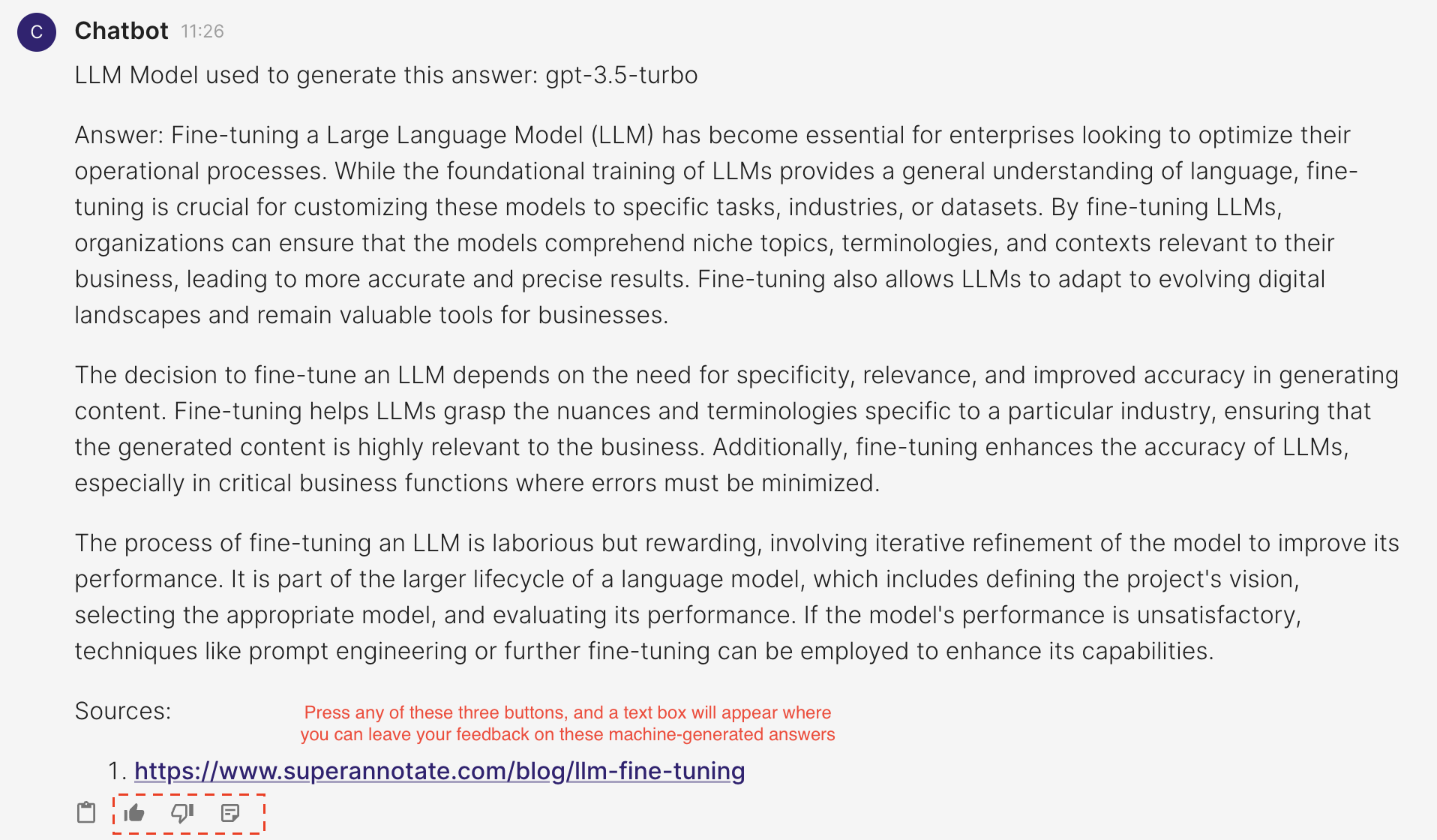

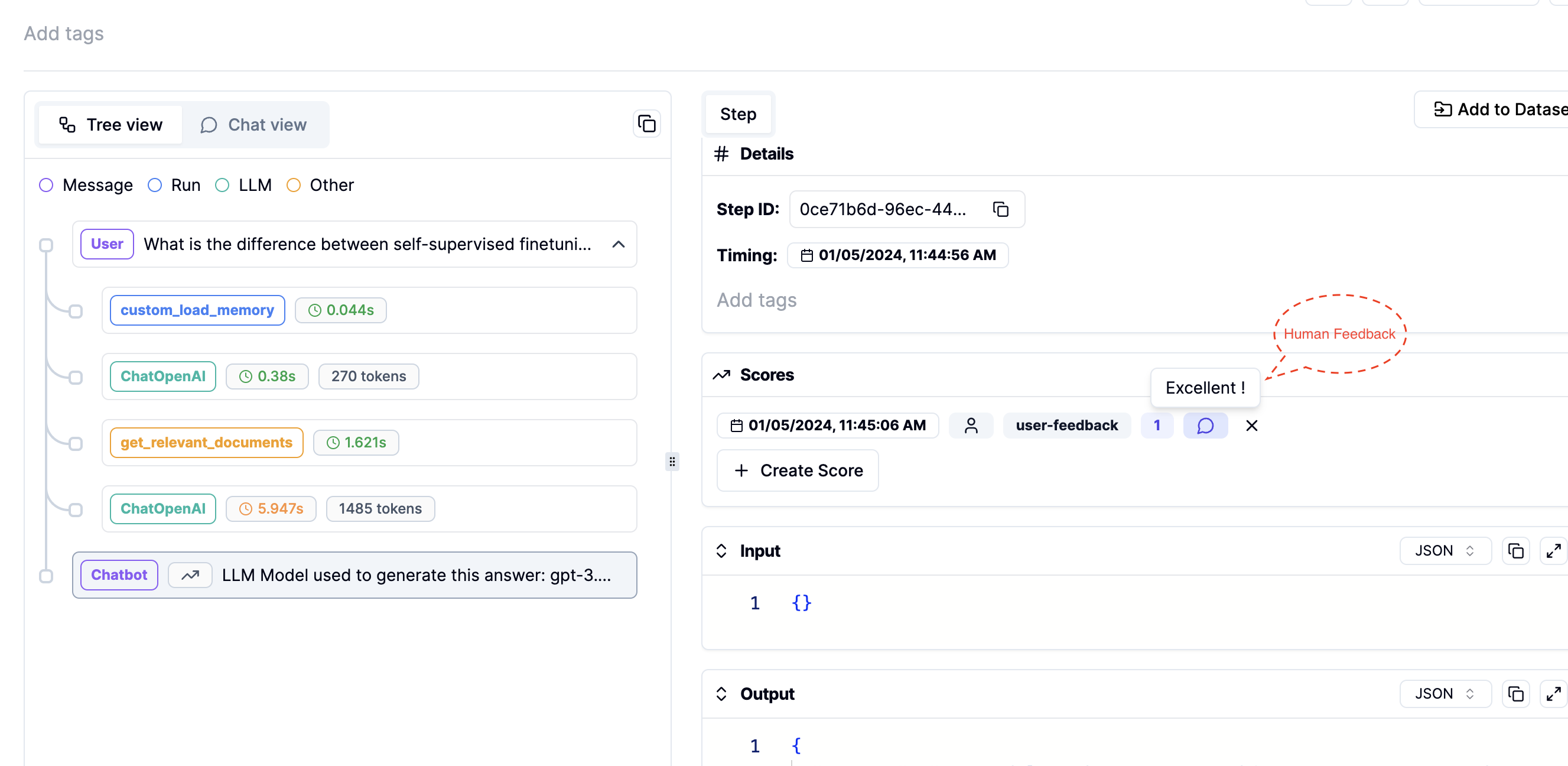

Use Literal AI to record human feedback for each generated answer. Follow these steps:

- Register an account at Literal AI.

- Add your `LITERAL_API_KEY` to the `.env` file.

- Once the `LITERAL_API_KEY` is added to your environment, run the command `chainlit run app.py`. You will see three new icons as shown in the image below, where you can leave feedback on the generated answers.

- Track this human feedback in your Literal AI account. You can also view the prompts or intermediate steps used to generate these answers.

This guide details the steps for setting up user authentication in your application. Each authenticated user will have the ability to view their own past interactions with the chatbot.

- Add your `APP_LOGIN_USERNAME` and `APP_LOGIN_PASSWORD` to the `.env` file.

- Run the following command to create a secret, which is essential for securing user sessions:

`chainlit create-secret`

- Copy the generated `CHAINLIT_AUTH_SECRET` and add it to your `.env` file.

- Once you launch your application, you will see a login page.

- Log in with your `APP_LOGIN_USERNAME` and `APP_LOGIN_PASSWORD`.

- Upon successful login, each user will be directed to a page displaying their personal chat history with the chatbot.
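The credential check behind the login page can be sketched as a comparison against `APP_LOGIN_USERNAME` and `APP_LOGIN_PASSWORD` from the environment. In Chainlit, such a check would be registered with the `@cl.password_auth_callback` decorator and return a `cl.User` on success; the sketch keeps only the framework-independent part, and `check_credentials` is a hypothetical name.

```python
import hmac
import os


def check_credentials(username: str, password: str) -> bool:
    """Return True if the credentials match the ones configured in the environment."""
    expected_user = os.environ.get("APP_LOGIN_USERNAME")
    expected_password = os.environ.get("APP_LOGIN_PASSWORD")
    if not expected_user or not expected_password:
        return False  # refuse all logins when credentials are not configured
    # compare_digest avoids leaking information through timing differences.
    user_ok = hmac.compare_digest(username.encode(), expected_user.encode())
    password_ok = hmac.compare_digest(password.encode(), expected_password.encode())
    return user_ok and password_ok


# Possible Chainlit wiring (requires chainlit; not executed here):
# @cl.password_auth_callback
# def auth_callback(username: str, password: str):
#     if check_credentials(username, password):
#         return cl.User(identifier=username, metadata={"provider": "credentials"})
#     return None
```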

Below is a preview of the web interface for the chatbot:

To customize the chatbot for your needs, define your configuration in the `config.toml` file, and define the names and descriptions of your experts in `tool_configs.toml`.