- We propose GlyphControl, a glyph-conditional text-to-image generation model for visual text generation. It outperforms DeepFloyd IF and Stable Diffusion in OCR accuracy and CLIP score while using more than 3× fewer parameters than the largest DeepFloyd IF model (1.3B vs. 6.0B for IF-I-XL).

- We introduce LAION-Glyph, a visual text generation benchmark built by filtering LAION-2B-en and selecting images with rich visual text content using a modern OCR system. We conduct experiments at three dataset scales: LAION-Glyph-100K, LAION-Glyph-1M, and LAION-Glyph-10M.

- We report flexible and customized visual text generation results. We show empirically that users can control the content, location, and size of the generated visual text through glyph instructions.

- SimpleBench: A simple text prompt benchmark following the Character-aware paper. The prompt format stays the same: `A sign that says "<word>".`

- CreativeBench: A creative text prompt benchmark adapted from GlyphDraw. We adopt the diverse English prompts from the original benchmark and replace the words inside the quotes. For example, a prompt may look like `Little panda holding a sign that says "<word>".` or `A photographer wears a t-shirt with the word "<word>." printed on it.`

(The prompts are listed in the text_prompts folder.)

Following the Character-aware paper, we collect a pool of single-word candidates from Wikipedia. These words are grouped into four buckets by frequency: top 1K, 1K to 10K, 10K to 100K, and 100K plus. To form the input prompts, we randomly select 100 words from each bucket and insert them into the templates above. During evaluation, we generate four images for each word.
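
The sampling step above can be sketched as follows. This is only an illustration under stated assumptions: the bucket keys, the function name `build_prompts`, the fixed seed, and the choice to fill every template for every sampled word are hypothetical and are not taken from the released evaluation code.

```python
import random

# Templates quoted from the benchmark description above.
SIMPLE_TEMPLATE = 'A sign that says "{word}".'
CREATIVE_TEMPLATES = [
    'Little panda holding a sign that says "{word}".',
    'A photographer wears a t-shirt with the word "{word}." printed on it.',
]

# Hypothetical keys for the four word-frequency buckets.
BUCKETS = ("top_1k", "1k_10k", "10k_100k", "100k_plus")


def build_prompts(words_by_bucket, templates, words_per_bucket=100, seed=0):
    """Sample words from each frequency bucket and fill every template.

    `words_by_bucket` maps a bucket name to a list of candidate words.
    Returns a dict: bucket name -> list of (word, prompt) pairs.
    """
    rng = random.Random(seed)  # local RNG so the sampling is reproducible
    prompts = {}
    for bucket in BUCKETS:
        candidates = words_by_bucket[bucket]
        k = min(words_per_bucket, len(candidates))
        chosen = rng.sample(candidates, k)  # sampling without replacement
        prompts[bucket] = [
            (word, template.format(word=word))
            for word in chosen
            for template in templates
        ]
    return prompts
```

Calling `build_prompts(words_by_bucket, [SIMPLE_TEMPLATE])` yields SimpleBench-style prompts, and passing `CREATIVE_TEMPLATES` yields CreativeBench-style prompts; the word lists themselves have to be supplied by the user.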

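We evaluate the OCR accuracy through three metrics, i.e., exact match accuracy, capitalization-insensitive exact match accuracy, and average Levenshtein distance. We also report the CLIP score to assess image-text alignment.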

| Method | #Params | Training Dataset | | | CLIP Score | |
|---|---|---|---|---|---|---|
| Stable Diffusion v2.0 | 865M | LAION 1.2B | | | | |
| DeepFloyd (IF-I-M) | 2.1B | LAION 1.2B | | | | |
| DeepFloyd (IF-I-L) | 2.6B | LAION 1.2B | | | | |
| DeepFloyd (IF-I-XL) | 6.0B | LAION 1.2B | | | | |
| GlyphControl | 1.3B | LAION-Glyph-100K | | | | |
| GlyphControl | 1.3B | LAION-Glyph-1M | | | | |
| GlyphControl | 1.3B | LAION-Glyph-10M | | | | |

The results shown here are averaged over the four word-frequency buckets. Results on SimpleBench / CreativeBench appear on the left / right of the slash, respectively. For all evaluated models, the global seed is set to 0 and no additional prompts are used; the empty string serves as the negative prompt for classifier-free guidance.
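
A minimal sketch of how OCR metrics of this kind could be computed per bucket and then averaged over the buckets. It assumes the metrics are exact match, capitalization-insensitive exact match, and mean Levenshtein distance between the target word and the OCR output; the function names, the dictionary keys, and the one-to-one pairing of target and OCR text are hypothetical and may differ from the evaluation code used for the table.

```python
def levenshtein(a, b):
    """Edit distance between a and b (insert, delete, substitute each cost 1)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + cost))
        prev = curr
    return prev[-1]


def ocr_metrics(pairs):
    """Compute metrics for one bucket from (target_word, ocr_text) pairs."""
    pairs = list(pairs)
    n = len(pairs)
    return {
        "acc": sum(t == o for t, o in pairs) / n,
        "acc_case_insensitive": sum(t.lower() == o.lower() for t, o in pairs) / n,
        "levenshtein": sum(levenshtein(t, o) for t, o in pairs) / n,
    }


def average_over_buckets(per_bucket):
    """Unweighted mean of each metric across the frequency buckets."""
    keys = next(iter(per_bucket.values())).keys()
    return {k: sum(m[k] for m in per_bucket.values()) / len(per_bucket) for k in keys}
```

Because every bucket contributes the same number of words, an unweighted mean over buckets equals the mean over all samples; with unequal bucket sizes the two would differ.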

Clone this repo:

    git clone https://github.com/AIGText/GlyphControl-release.git
    cd GlyphControl-release

Install the required Python packages (recommended):

    conda env create -f environment.yaml
    conda activate GlyphControl

or

    conda create -n GlyphControl python=3.9
    conda activate GlyphControl
    pip install -r requirements.txt

Although you can run our code on a CPU, we recommend a CUDA device for faster inference. We recommend CUDA 11.3; inference requires roughly 8 to 10 GB of GPU memory at minimum.

Download the checkpoints from our Hugging Face space and put the checkpoint files into the checkpoints folder.

We provide four types of checkpoints.

Apart from the model trained on LAION-Glyph-10M for 6 epochs, we also fine-tune the model for an additional 40 epochs on TextCaps-5K, a subset of the TextCaps v0.1 dataset consisting of 5K images related to signs, books, and posters. During fine-tuning, we also train the U-Net decoder of the original SD branch, following the ablation study in our report.

The relevant information is shown below.

| Checkpoint File | Training Dataset | Training Epochs | | | CLIP Score | |
|---|---|---|---|---|---|---|
| laion10M_epoch_6_model_ema_only.ckpt | LAION-Glyph-10M | 6 | | | | |
| textcaps5K_epoch_10_model_ema_only.ckpt | TextCaps 5K | 10 | | | | |
| textcaps5K_epoch_20_model_ema_only.ckpt | TextCaps 5K | 20 | | | | |
| textcaps5K_epoch_40_model_ema_only.ckpt | TextCaps 5K | 40 | | | | |

Although the models fine-tuned on TextCaps 5K achieve high OCR accuracy, the generated images may lose creativity and diversity. Feel free to try all the provided checkpoints for comparison. All checkpoints are EMA-only, so `use_ema` in configs/config.yaml should be set to False.
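
Before running inference, it can help to confirm that the downloaded files are where the scripts expect them. The following is a small stand-alone sketch, not part of the repository: the helper name `missing_checkpoints` is hypothetical, and the file names are copied from the table above.

```python
from pathlib import Path

# File names copied from the checkpoint table above.
CHECKPOINTS = [
    "laion10M_epoch_6_model_ema_only.ckpt",
    "textcaps5K_epoch_10_model_ema_only.ckpt",
    "textcaps5K_epoch_20_model_ema_only.ckpt",
    "textcaps5K_epoch_40_model_ema_only.ckpt",
]


def missing_checkpoints(folder="checkpoints"):
    """Return the expected checkpoint files that are not present in `folder`."""
    root = Path(folder)
    return [name for name in CHECKPOINTS if not (root / name).is_file()]


if __name__ == "__main__":
    missing = missing_checkpoints()
    if missing:
        print("Missing checkpoints:", ", ".join(missing))
    else:
        print("All four checkpoints found.")
```

Only the checkpoint you actually pass to the inference script needs to be present; the check simply lists which of the four are absent.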

- Text character information: GlyphControl allows for the specification of not only single words but also phrases or sentences composed of multiple words. As long as the text is intended to be placed within the same area, users can customize the text accordingly.

- Text line information: GlyphControl provides the flexibility to assign words to multiple lines by adjusting the number of rows. This feature enhances the visual effects and allows for more versatile text arrangements.

- Text box information: Users have control over the font size of the rendered text by modifying the width property of the text bounding box. The location of the text on the image can be specified using the coordinates of the top left corner. Additionally, the yaw rotation angle of the text box allows for further adjustments. By default, the text is rendered following the optimal width-height ratio, but users can define a specific width-height ratio to precisely control the height of the text box (not recommended).

Users should provide the above three types of glyph instructions for inference.
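
To make the three kinds of instructions concrete, here is a hedged sketch of how one text box could be represented in Python. Every field name, default value, and the use of normalized coordinates are assumptions made for illustration; the actual keys expected by the repository's instruction file may differ.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class GlyphInstruction:
    """One rendered text box. All field names are illustrative, not the repo's schema."""
    text: str                      # character information: word, phrase, or sentence
    num_rows: int = 1              # line information: rows the text is split across
    width: float = 0.3             # box width (assumed fraction of image width)
    top_left_x: float = 0.35       # top-left corner (assumed fraction of image width)
    top_left_y: float = 0.4        # top-left corner (assumed fraction of image height)
    yaw: float = 0.0               # rotation angle of the text box, in degrees
    width_height_ratio: Optional[float] = None  # None keeps the default ratio

    def validate(self):
        """Raise ValueError on obviously inconsistent values; return self otherwise."""
        if not self.text:
            raise ValueError("text must not be empty")
        if self.num_rows < 1:
            raise ValueError("num_rows must be at least 1")
        if not 0 < self.width <= 1:
            raise ValueError("width must be in (0, 1]")
        for name in ("top_left_x", "top_left_y"):
            if not 0 <= getattr(self, name) <= 1:
                raise ValueError(f"{name} must be in [0, 1]")
        if self.width_height_ratio is not None and self.width_height_ratio <= 0:
            raise ValueError("width_height_ratio must be positive")
        return self
```

For example, `GlyphInstruction(text="GRAND OPENING", num_rows=2, width=0.5).validate()` describes a two-line text box that is wider, and therefore rendered in a larger font, than the default.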

To run the inference code locally, first specify the glyph instructions in the file glyph_instructions.yaml.

Then run the script like this:

    python inference.py --cfg configs/config.yaml --ckpt checkpoints/laion10M_epoch_6_model_ema_only.ckpt --save_path generated_images --glyph_instructions glyph_instructions.yaml --prompt <Prompt> --num_samples 4

If you do not want to generate visual text, remove the `--glyph_instructions` parameter from the command. You can also specify other parameters such as `a_prompt` and `n_prompt` to control the generation process. See the code for detailed descriptions.
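
When scripting several runs, the command above can be assembled programmatically. The sketch below is a hypothetical helper (`build_inference_command` is not part of the repository); it uses only the flags shown in the command above and omits `--glyph_instructions` when none is given.

```python
import shlex
import sys


def build_inference_command(prompt,
                            ckpt="checkpoints/laion10M_epoch_6_model_ema_only.ckpt",
                            glyph_instructions="glyph_instructions.yaml",
                            save_path="generated_images",
                            num_samples=4):
    """Return the argv list for inference.py.

    Pass glyph_instructions=None to generate images without visual text.
    """
    cmd = [sys.executable, "inference.py",
           "--cfg", "configs/config.yaml",
           "--ckpt", ckpt,
           "--save_path", save_path]
    if glyph_instructions is not None:
        cmd += ["--glyph_instructions", glyph_instructions]
    cmd += ["--prompt", prompt, "--num_samples", str(num_samples)]
    return cmd


if __name__ == "__main__":
    cmd = build_inference_command('A sign that says "GlyphControl".')
    # Print the shell-quoted command for inspection; to execute it from the
    # repository root, pass the list to subprocess.run(cmd, check=True).
    print(shlex.join(cmd))
```

Building the command as a list rather than a single string avoids quoting problems when the prompt itself contains quotation marks.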

An easier way to try our models is through a demo.

After downloading the checkpoints, run:

    python app.py

You can then generate visual text through a local demo interface.

Alternatively, you can try the demo directly in our Hugging Face space GlyphControl.

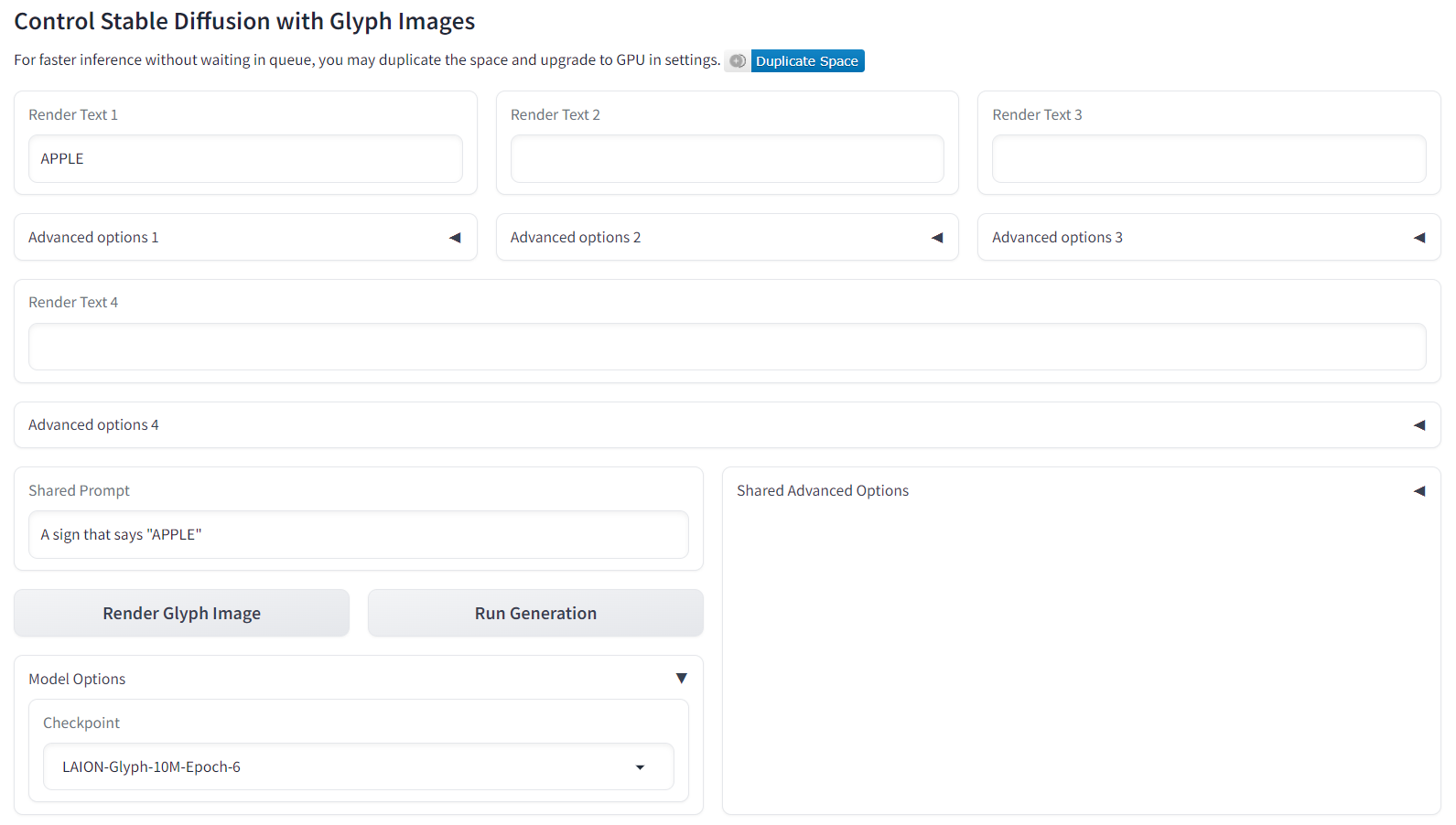

The current version of our demo supports at most four groups of Render Text. Users should enter the glyph instructions in the corresponding fields. By selecting a checkpoint in the Model Options section, users can try all four released checkpoints.

Dataset: We sincerely thank the authors of the open-source large image-text dataset LAION-2B-en and of the corresponding aesthetic score prediction code, LAION-Aesthetics_Predictor V2. For OCR detection, we thank the authors of the open-source tool PP-OCRv3.

Methodology and Demo: Our method is based on the powerful controllable image generation method ControlNet. We thank the authors for their open-source code. Our demo uses the ControlNet demo as a reference.

Comparison Methods in the paper: We thank the authors of the following open-source diffusion code or demos: DALL-E 2, Stable Diffusion 2.0, Stable Diffusion XL, and DeepFloyd.

Q: What is the approximate success rate?

A: About 10-20%. Since the current version is an alpha version, the success rate is relatively low.

For help or issues with the GitHub code or the Hugging Face demo of GlyphControl, please email Yukang Yang, Dongnan Gui, or Yuhui Yuan, or submit a GitHub issue.

If you find this code useful in your research, please consider citing:

    @article{yang2023glyphcontrol,
      title={GlyphControl: Glyph Conditional Control for Visual Text Generation},
      author={Yukang Yang and Dongnan Gui and Yuhui Yuan and Haisong Ding and Han Hu and Kai Chen},
      year={2023},
      eprint={2305.18259},
      archivePrefix={arXiv},
      primaryClass={cs.CV}
    }