Your Local AI Assistant with Llama Models

Website: llama-assistant.nrl.ai

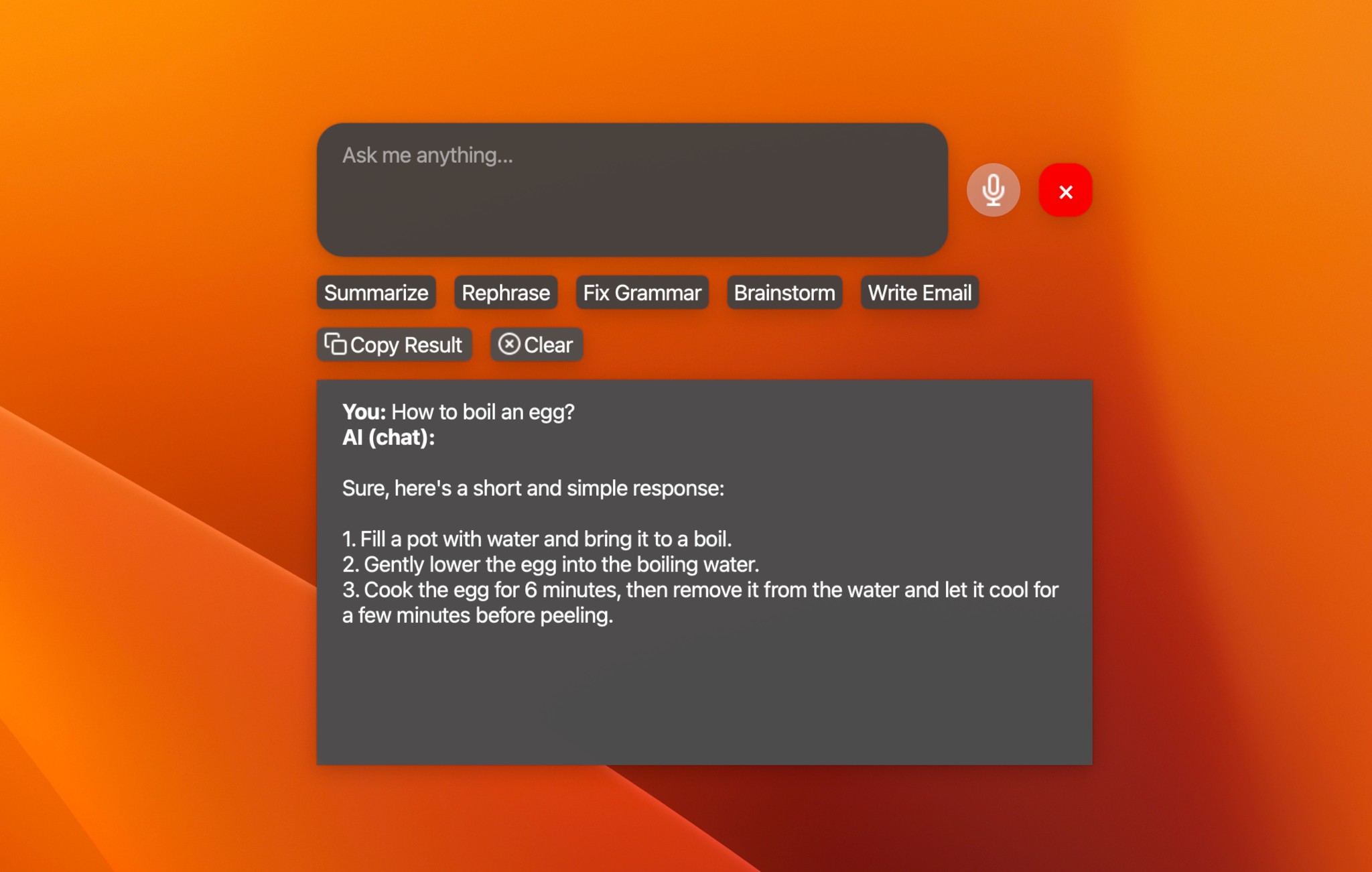

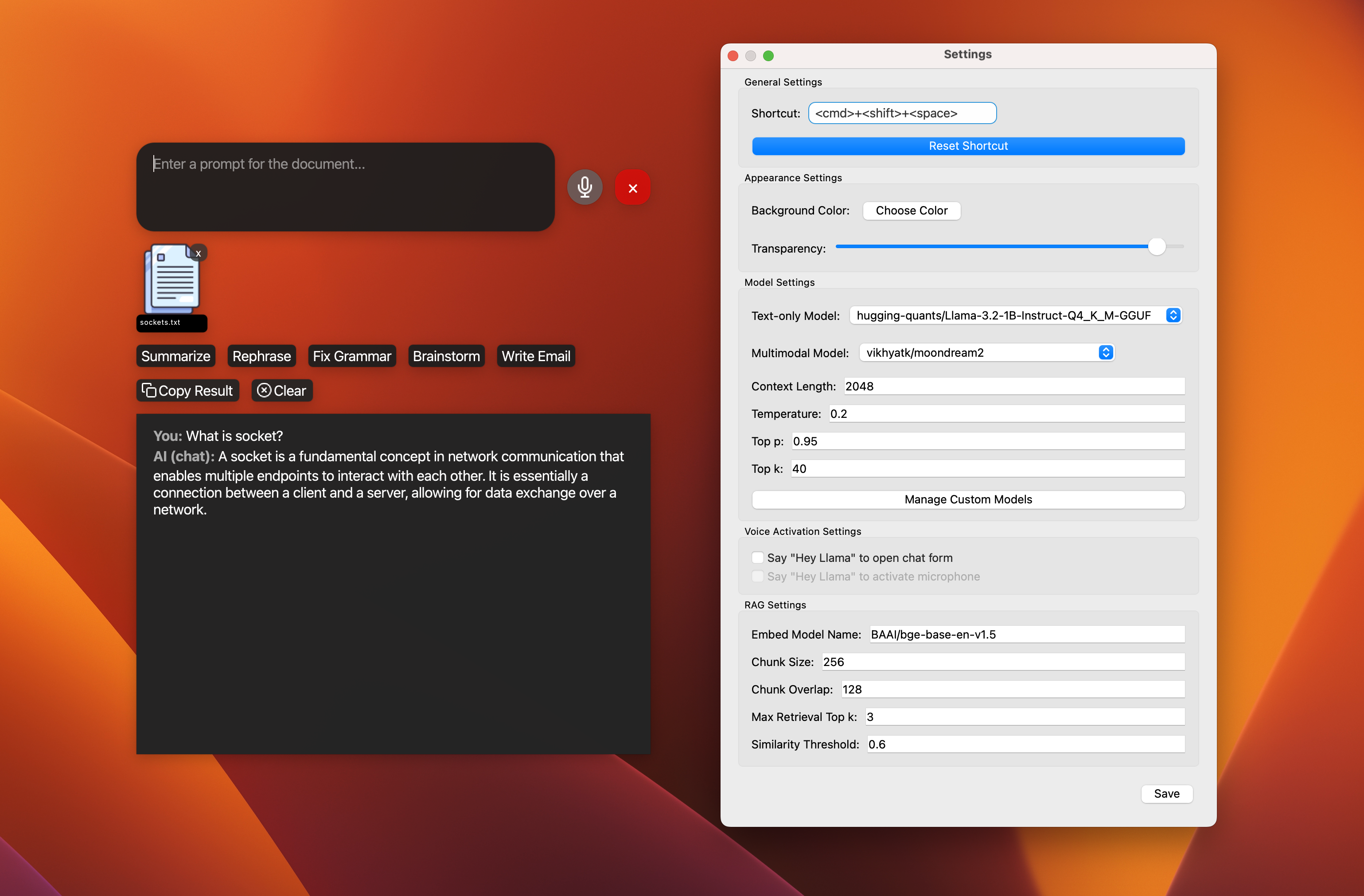

A local AI assistant for your daily tasks, powered by Llama 3.2. It can recognize your voice, process natural language, and perform various actions based on your commands: summarizing text, rephrasing sentences, answering questions, writing emails, and more.

This assistant can run offline on your local machine, and it respects your privacy by not sending any data to external servers.

📝 Text-only models:

- Llama 3.2 - 1B, 3B (4/8-bit quantized).

- Qwen2.5-0.5B-Instruct (4-bit quantized).

- And other models that LlamaCPP supports via custom models. See the list.

🖼️ Multimodal models:

- Moondream2.

- MiniCPM-v2.6.

- LLaVA 1.5/1.6.

- Besides supported models, you can try other variants via custom models.

- 🖼️ Support multimodal model: moondream2.

- 🗣️ Add wake word detection: "Hey Llama!".

- 🛠️ Custom models: Add support for custom models.

- 📚 Support 5 other text models.

- 🖼️ Support 5 other multimodal models.

- ⚡ Streaming support for response.

- 🎙️ Add offline STT support: WhisperCPP.

- 🧠 Knowledge database: LangChain or LlamaIndex?

- 🔌 Plugin system for extensibility.

- 📰 News and weather updates.

- 📧 Email integration with Gmail and Outlook.

- 📝 Note-taking and task management.

- 🎵 Music player and podcast integration.

- 🤖 Workflow with multiple agents.

- 🌐 Multi-language support: English, Spanish, French, German, etc.

- 📦 Package for Windows, Linux, and macOS.

- 🔄 Automated tests and CI/CD pipeline.

- 🎙️ Voice recognition for hands-free interaction.

- 💬 Natural language processing with Llama 3.2.

- 🖼️ Image analysis capabilities (TODO).

- ⚡ Global hotkey for quick access (Cmd+Shift+Space on macOS).

- 🎨 Customizable UI with adjustable transparency.

Note: This project is a work in progress, and new features are being added regularly.

Install from PyPI:

pip install llama-assistant

pip install pyaudio

Or install from source:

- Clone the repository:

git clone https://github.com/vietanhdev/llama-assistant.git

cd llama-assistant

- Install the required dependencies:

pip install -r requirements.txt

pip install pyaudio

Speed Hack for Apple Silicon (M1, M2, M3) users: 🔥🔥🔥

- Install Xcode:

# check the path of your xcode install

xcode-select -p

# xcode installed returns

# /Applications/Xcode-beta.app/Contents/Developer

# if Xcode is missing, install it (this can take a long time)

xcode-select --install

- Build llama-cpp-python with Metal support:

pip uninstall llama-cpp-python -y

CMAKE_ARGS="-DGGML_METAL=on" pip install -U llama-cpp-python --no-cache-dir

# You should now have llama-cpp-python v0.1.62 or higher installed

# llama-cpp-python 0.1.68

Run the assistant using the following command:

llama-assistant

# Or run it as a Python module:

python -m llama_assistant.main

Use the global hotkey (default: Cmd+Shift+Space) to quickly access the assistant from anywhere on your system.
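If the `llama-assistant` command is not found or the assistant fails to start, a quick environment check can help narrow things down. The following is a minimal sketch using only the Python standard library. It is not part of the project, and the helper name `check_install` is hypothetical. It reports whether the command is on your PATH, whether the `llama_assistant` module can be found, and which versions of the relevant packages are installed.

```python
import importlib.util
import shutil
from importlib import metadata


def check_install(command="llama-assistant",
                  module="llama_assistant",
                  packages=("llama-assistant", "llama-cpp-python")):
    """Report whether the CLI, the module, and key packages are visible.

    This is an illustrative helper, not something shipped with the project.
    """
    report = {
        # Full path of the executable, or None if it is not on PATH.
        "command_path": shutil.which(command),
        # True if Python can locate the top-level module.
        "module_found": importlib.util.find_spec(module) is not None,
        "versions": {},
    }
    for name in packages:
        try:
            report["versions"][name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            report["versions"][name] = None  # package is not installed
    return report


if __name__ == "__main__":
    for key, value in check_install().items():
        print(f"{key}: {value}")
```

Run it with the same Python interpreter (or virtual environment) you used for `pip install`. A `None` for `command_path` with `module_found: True` usually points at a PATH issue, in which case `python -m llama_assistant.main` should still work.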

The assistant's settings can be customized by editing the settings.json file located in your home directory: ~/llama_assistant/settings.json.
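If you prefer to change settings from a script rather than a text editor, something like the following may work. It is a minimal sketch that assumes the file is a plain JSON object. The helper names `load_settings` and `update_settings` are hypothetical, and this document does not list the actual setting keys, so inspect your own file before changing anything.

```python
import json
from pathlib import Path

# Location stated above; everything else in this sketch is illustrative.
SETTINGS_PATH = Path.home() / "llama_assistant" / "settings.json"


def load_settings(path=SETTINGS_PATH):
    """Return the settings as a dict, or an empty dict if the file is missing."""
    path = Path(path)
    if not path.exists():
        return {}
    return json.loads(path.read_text(encoding="utf-8"))


def update_settings(changes, path=SETTINGS_PATH):
    """Merge `changes` into the settings file and return the merged dict."""
    path = Path(path)
    settings = load_settings(path)
    settings.update(changes)
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(settings, indent=2), encoding="utf-8")
    return settings


if __name__ == "__main__":
    # Read-only by default: print the current settings to see the real key names.
    print(json.dumps(load_settings(), indent=2))
```

Once you know the key you want to change, call `update_settings({"<key>": <value>})` with a name taken from your own file. It is probably safest to do this while the assistant is not running, since the app may rewrite the file itself.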

Contributions are welcome! Please feel free to submit a Pull Request.

This project is licensed under the GPLv3 License - see the LICENSE file for details.

- The default model is Llama 3.2 by Meta AI Research.

- This project uses LlamaCPP by Georgi Gerganov and contributors.

- Viet-Anh Nguyen - vietanhdev, contact form.

- Project Link: https://github.com/vietanhdev/llama-assistant, https://llama-assistant.nrl.ai/.