This repository hosts Python/Octave/Matlab code of the Preconditioned ICA for Real Data (Picard) and Picard-O algorithms.

See the documentation.

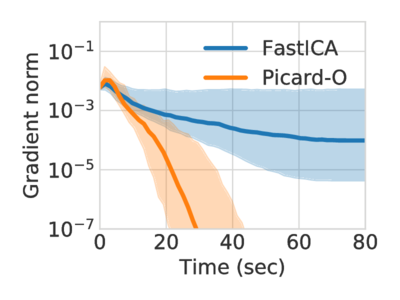

Picard is an algorithm for maximum likelihood independent component analysis. It shows state-of-the-art convergence speed and solves the same problems as the widely used FastICA, Infomax and extended-Infomax algorithms, only faster.

The parameter ortho chooses whether or not to work under orthogonal constraint (i.e. enforce the decorrelation of the output). Picard also comes with an extended version, just like extended-Infomax, which makes it possible to separate both sub- and super-Gaussian signals. It is selected with the parameter extended.

- ortho=False, extended=False: same solution as Infomax

- ortho=False, extended=True: same solution as extended-Infomax

- ortho=True, extended=True: same solution as FastICA

- ortho=True, extended=False: finds the same solutions as Infomax under orthogonal constraint.
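The four combinations above can be written down as a small lookup table. This is only an illustrative sketch: `EQUIVALENT_SETTINGS`, `settings_for` and the key names (such as `"infomax-ortho"`) are hypothetical and not part of the picard package. Only the `ortho` and `extended` flags and their pairing come from the list above.

```python
# Hypothetical helper (not part of picard): maps the algorithm whose
# solution you want to reproduce to the ortho/extended flags listed above.
EQUIVALENT_SETTINGS = {
    "infomax": {"ortho": False, "extended": False},
    "extended-infomax": {"ortho": False, "extended": True},
    "fastica": {"ortho": True, "extended": True},
    "infomax-ortho": {"ortho": True, "extended": False},
}


def settings_for(algorithm):
    """Return a copy of the keyword arguments for the named algorithm."""
    key = algorithm.lower()
    if key not in EQUIVALENT_SETTINGS:
        expected = ", ".join(sorted(EQUIVALENT_SETTINGS))
        raise ValueError(f"unknown algorithm {algorithm!r}; expected one of: {expected}")
    # Copy so that callers cannot mutate the shared table.
    return dict(EQUIVALENT_SETTINGS[key])
```

Assuming picard is installed and X holds the mixed signals, the result could be passed on as `picard(X, **settings_for("fastica"))`.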

We recommend the Anaconda Python distribution.

Picard can be installed with conda-forge. You need to add conda-forge to your conda channels, and then do:

$ conda install python-picard

Otherwise, to install picard, you first need to install its dependencies:

$ pip install numpy matplotlib scipy

Then install Picard with pip:

$ pip install python-picard

or to get the latest version of the code:

$ pip install git+https://github.com/pierreablin/picard.git#egg=picard

If you do not have admin privileges on the computer, use the --user flag with pip. To upgrade, use the --upgrade flag provided by pip.

To check if everything worked fine, you can do:

$ python -c 'import picard'

and it should not give any error message.
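If the import does fail, it can help to see which package is missing. The following is a minimal sketch using only the standard library. The helper name `missing_modules` is hypothetical, and the default module list simply mirrors the packages named in the install instructions.

```python
import importlib.util


def missing_modules(names=("numpy", "scipy", "matplotlib", "picard")):
    """Return the top-level module names from `names` that cannot be found."""
    # find_spec returns None when a top-level module is not installed.
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_modules()
    print("missing:", ", ".join(missing) if missing else "none")
```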

The Matlab/Octave version of Picard and Picard-O is available here.

To get started, you can build a synthetic mixed signals matrix:

>>> import numpy as np

>>> N, T = 3, 1000

>>> S = np.random.laplace(size=(N, T))

>>> A = np.random.randn(N, N)

>>> X = np.dot(A, S)

And then use Picard to separate the signals:

>>> from picard import picard

>>> K, W, Y = picard(X)

Picard outputs the whitening matrix, K, the estimated unmixing matrix, W, and the estimated sources, Y. This means that Y = WKX.
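To illustrate how the three outputs fit together, here is a NumPy-only sketch that does not call picard. It makes several assumptions: X is centered first, K is built by PCA whitening (Picard's own whitening may differ in detail), and W is a random orthogonal matrix standing in for the estimated unmixing matrix. The resulting Y is therefore decorrelated but not separated. The point is only the structure Y = WKX.

```python
import numpy as np

rng = np.random.default_rng(0)
N, T = 3, 1000
S = rng.laplace(size=(N, T))      # independent sources
A = rng.standard_normal((N, N))   # mixing matrix
X = A @ S                         # observed mixtures

# Center the data (assumption of this sketch), then build a PCA whitening
# matrix K such that K @ Xc has identity covariance.
Xc = X - X.mean(axis=1, keepdims=True)
eigvals, eigvecs = np.linalg.eigh(Xc @ Xc.T / T)
K = (eigvecs / np.sqrt(eigvals)).T

# Stand-in for the unmixing matrix: any orthogonal W keeps the whitened
# output decorrelated, which is what the orthogonal constraint enforces.
W, _ = np.linalg.qr(rng.standard_normal((N, N)))
Y = W @ K @ Xc

# Empirical covariance of the output; close to the identity matrix.
cov_Y = Y @ Y.T / T
```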

Introducing picard.Picard, which mimics sklearn.decomposition.FastICA behavior:

>>> from sklearn.datasets import load_digits

>>> from picard import Picard

>>> X, _ = load_digits(return_X_y=True)

>>> transformer = Picard(n_components=7)

>>> X_transformed = transformer.fit_transform(X)

>>> X_transformed.shape

These are the dependencies to use Picard:

- numpy (>=1.8)

- matplotlib (>=1.3)

- scipy (>=0.19)

Optionally, to get faster computations, you can install:

- numexpr (>= 2.0)

These are the dependencies to run the EEG example:

- mne (>=0.14)

If you use this code in your project, please cite:

Pierre Ablin, Jean-François Cardoso, Alexandre Gramfort. Faster independent component analysis by preconditioning with Hessian approximations. IEEE Transactions on Signal Processing, 2018. https://arxiv.org/abs/1706.08171

Pierre Ablin, Jean-François Cardoso, Alexandre Gramfort. Faster ICA under orthogonal constraint. ICASSP, 2018. https://arxiv.org/abs/1711.10873

New in 0.8: for the density exp, the default parameter is now alpha = 0.1 instead of alpha = 1.