PySpark

Use pipenv to create a virtual environment that contains the requirements.

- Install pipenv. If you don't have pipenv, you need to install it first; see the pipenv installation instructions for details.

-

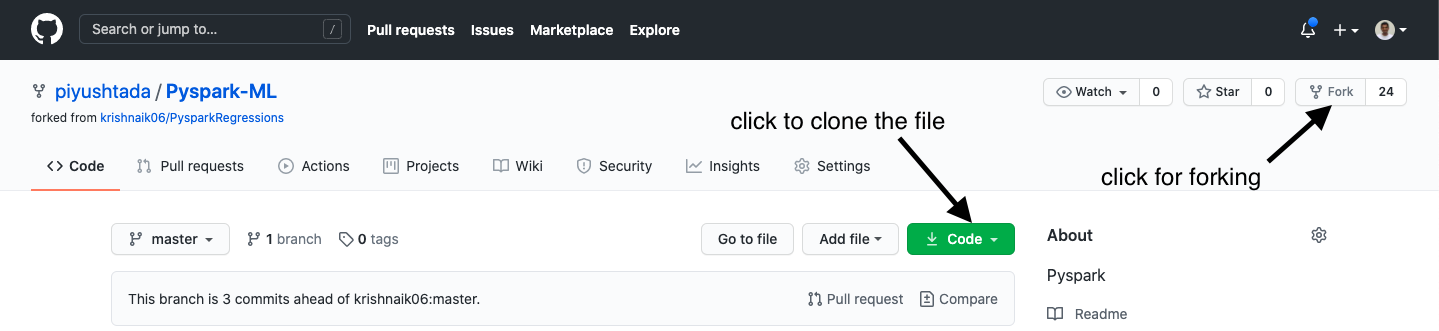

clone this repo in the your system

git clone https://github.com/piyushtada/Pyspark-ML.git

- Then change directory to Pyspark-ML:

cd Pyspark-ML

- Then run pipenv install:

pipenv install

This will install all the dependencies you need to run the project.

- Run Jupyter Notebook:

jupyter notebook

This opens Jupyter Notebook, where you can use Spark.

- Check that everything is working by running test.ipynb.
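The contents of test.ipynb are not reproduced here. As a rough stand-in, a first notebook cell along the following lines can confirm that the packages are importable from the pipenv environment. The function names `check_environment` and `spark_smoke_test` and the package list are assumptions for illustration, not taken from the repo.

```python
import importlib.util


def check_environment(packages):
    """Return a dict mapping each package name to True if it can be imported."""
    return {name: importlib.util.find_spec(name) is not None for name in packages}


def spark_smoke_test():
    """Start a local SparkSession and count a tiny DataFrame (needs pyspark and Java)."""
    # Imported lazily so check_environment() still works when pyspark is missing.
    from pyspark.sql import SparkSession

    spark = SparkSession.builder.master("local[1]").appName("smoke-test").getOrCreate()
    try:
        return spark.range(5).count()
    finally:
        spark.stop()


# Hypothetical package list; adjust it to match the project's Pipfile.
status = check_environment(["pyspark", "notebook"])
for name, ok in status.items():
    print(f"{name}: {'found' if ok else 'MISSING'}")
```

If both packages are reported as found, calling `spark_smoke_test()` in the next cell should return 5 once Spark itself starts correctly.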

- When you want to open the session again, go to the Pyspark-ML folder and run the following commands:

pipenv shell

jupyter notebook

- Do exploratory data analysis

- Update the columns that contain categorical data

- Visualise the results

- Prepare the data for the models

- Save the file

- Run one sample model to check that everything up to this point works

- Make a list of models to apply

- Apply models

- Do hyperparameter tuning for the model
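Several of the steps above (handling categorical columns, preparing the data, running a sample model, and tuning) could be sketched with Spark ML roughly as follows. This is a minimal sketch, not the project's actual code: it assumes a binary classification task, uses logistic regression only as a placeholder model, and the names `indexed_name`, `build_tuned_pipeline`, and the `features` column are hypothetical. For brevity it feeds the index columns straight into the model; one-hot encoding them first may be more appropriate.

```python
def indexed_name(column):
    """Name of the numeric column produced for a categorical column."""
    return f"{column}_idx"


def build_tuned_pipeline(categorical_cols, numeric_cols, label_col):
    """Build a cross-validated pipeline; call it after a SparkSession exists."""
    # Imported here so this module loads even before pyspark is installed.
    from pyspark.ml import Pipeline
    from pyspark.ml.classification import LogisticRegression
    from pyspark.ml.evaluation import BinaryClassificationEvaluator
    from pyspark.ml.feature import StringIndexer, VectorAssembler
    from pyspark.ml.tuning import CrossValidator, ParamGridBuilder

    # Turn each categorical string column into a numeric index column.
    indexers = [
        StringIndexer(inputCol=c, outputCol=indexed_name(c), handleInvalid="keep")
        for c in categorical_cols
    ]
    # Combine all model inputs into the single vector column Spark ML expects.
    assembler = VectorAssembler(
        inputCols=[indexed_name(c) for c in categorical_cols] + list(numeric_cols),
        outputCol="features",
    )
    # Placeholder model for the "sample model" step (an assumption, not the repo's choice).
    model = LogisticRegression(featuresCol="features", labelCol=label_col)
    pipeline = Pipeline(stages=indexers + [assembler, model])

    # Small parameter grid for the hyperparameter tuning step.
    grid = ParamGridBuilder().addGrid(model.regParam, [0.01, 0.1]).build()
    return CrossValidator(
        estimator=pipeline,
        estimatorParamMaps=grid,
        evaluator=BinaryClassificationEvaluator(labelCol=label_col),
        numFolds=3,
    )
```

With a training DataFrame `train_df` that has, say, a string column `city`, a numeric column `age`, and a numeric 0/1 column `label` (all hypothetical), `build_tuned_pipeline(["city"], ["age"], "label").fit(train_df)` would fit the pipeline for each grid point and keep the best model.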