A no-code workflow executor with a built-in web UI

It runs DAGs (directed acyclic graphs) defined in a simple, declarative YAML format.

- Dagu

In the projects I worked on, our ETL pipeline had many problems. There were hundreds of cron jobs in the server's crontab, and it was impossible to keep track of the dependencies between them. If one job failed, we were not sure which jobs to rerun. We also had to SSH into the server to see the logs and run each shell script one by one. So we needed a tool that could explicitly visualize and manage the dependencies of the pipeline. How nice it would be to see the job dependencies, execution status, and logs of each job in a web UI, and to rerun or stop a series of jobs with just a mouse click!

There are many popular workflow engines, such as Airflow and Prefect. They are powerful and valuable tools, but they require writing code, such as Python, to run workflows. In situations like the one above, there are often already hundreds of thousands of lines of existing code in other languages, such as shell scripts or Perl, and adding another layer of Python on top of them would make things more complicated. So we developed Dagu. It is easy to use and self-contained, making it ideal for smaller projects with fewer people.

- Self-contained - It is a single binary with no dependencies; no DBMS or cloud service is required.

- Simple - It executes DAGs defined in a simple declarative YAML format. Existing programs can be used without any modification.

You can quickly install the `dagu` command and try it out.

Via Homebrew:

```sh
brew install yohamta/tap/dagu
```

Upgrade to the latest version:

```sh
brew upgrade yohamta/tap/dagu
```

Via the install script:

```sh
curl -L https://raw.githubusercontent.com/yohamta/dagu/main/scripts/downloader.sh | bash
```

Or download the latest binary from the Releases page and place it in your `$PATH`, for example in `/usr/local/bin`.

Start the server with dagu server and browse to http://127.0.0.1:8080 to explore the Web UI.

Create a workflow by clicking the New DAG button on the top page of the web UI. Enter `example.yaml` in the dialog.

Go to the workflow detail page and click the Edit button in the Config Tab. Copy and paste from this example YAML and click the Save button.

You can execute the example by pressing the Start button.

- `dagu start [--params=<params>] <file>` - Runs the workflow
- `dagu status <file>` - Displays the current status of the workflow
- `dagu retry --req=<request-id> <file>` - Re-runs the specified workflow run
- `dagu stop <file>` - Stops the workflow execution by sending TERM signals
- `dagu dry [--params=<params>] <file>` - Dry-runs the workflow
- `dagu server` - Starts the web server for the web UI
- `dagu version` - Shows the current binary version

-

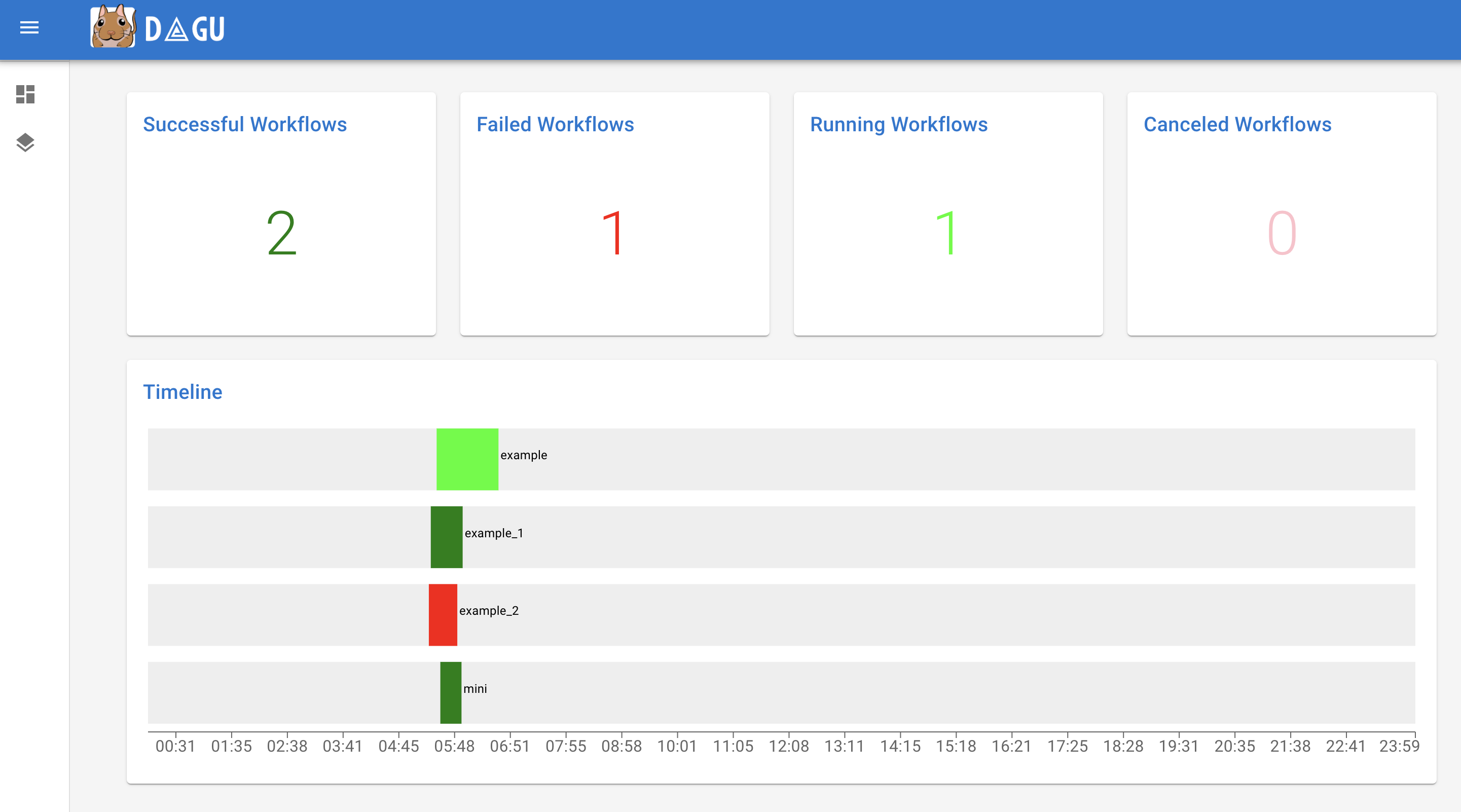

Dashboard: It shows the overall status and executions timeline of the day.

-

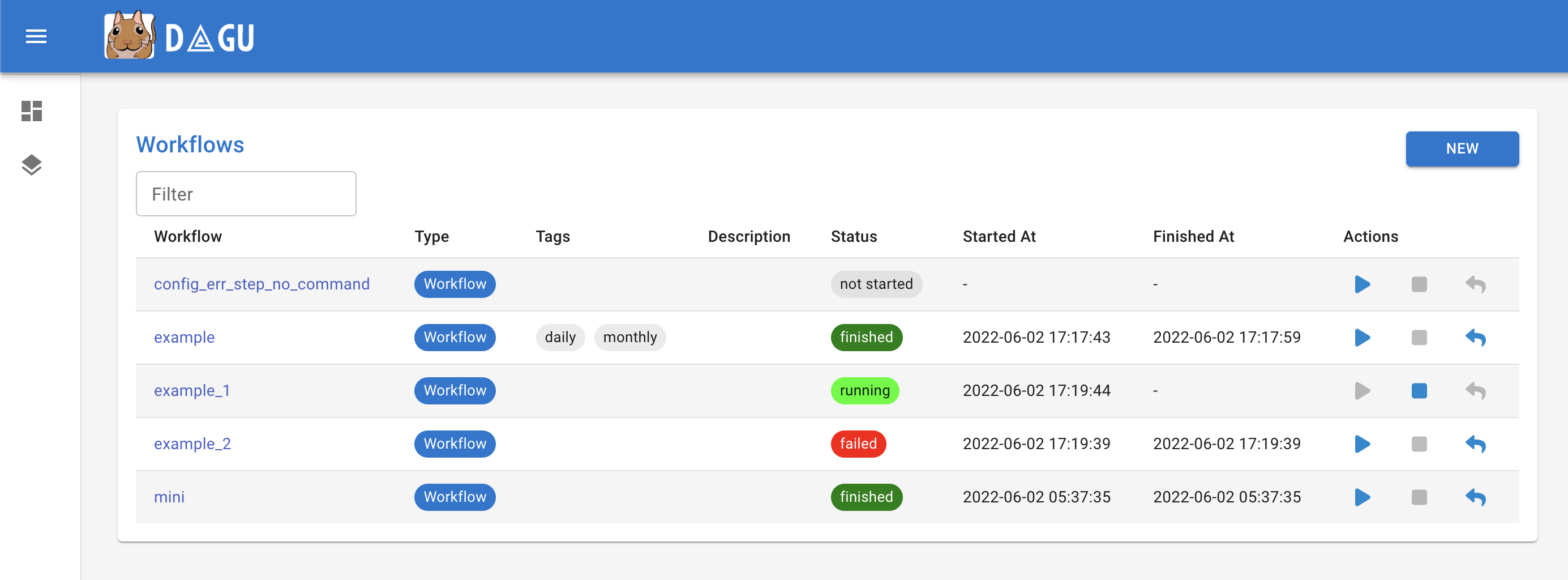

Workflows: It shows all workflows and the real-time status.

-

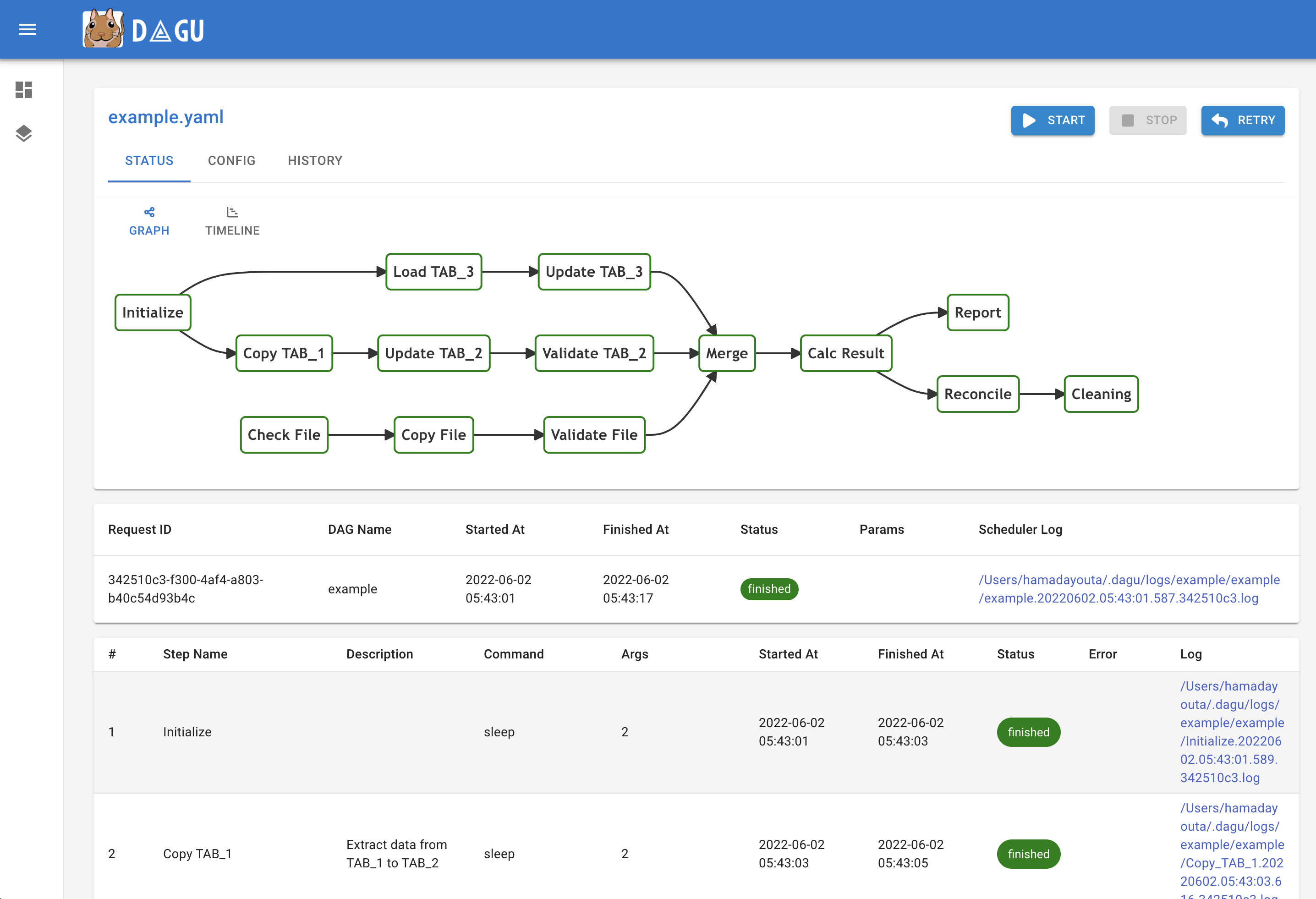

Workflow Details: It shows the real-time status, logs, and workflow configurations. You can edit workflow configurations on a browser.

-

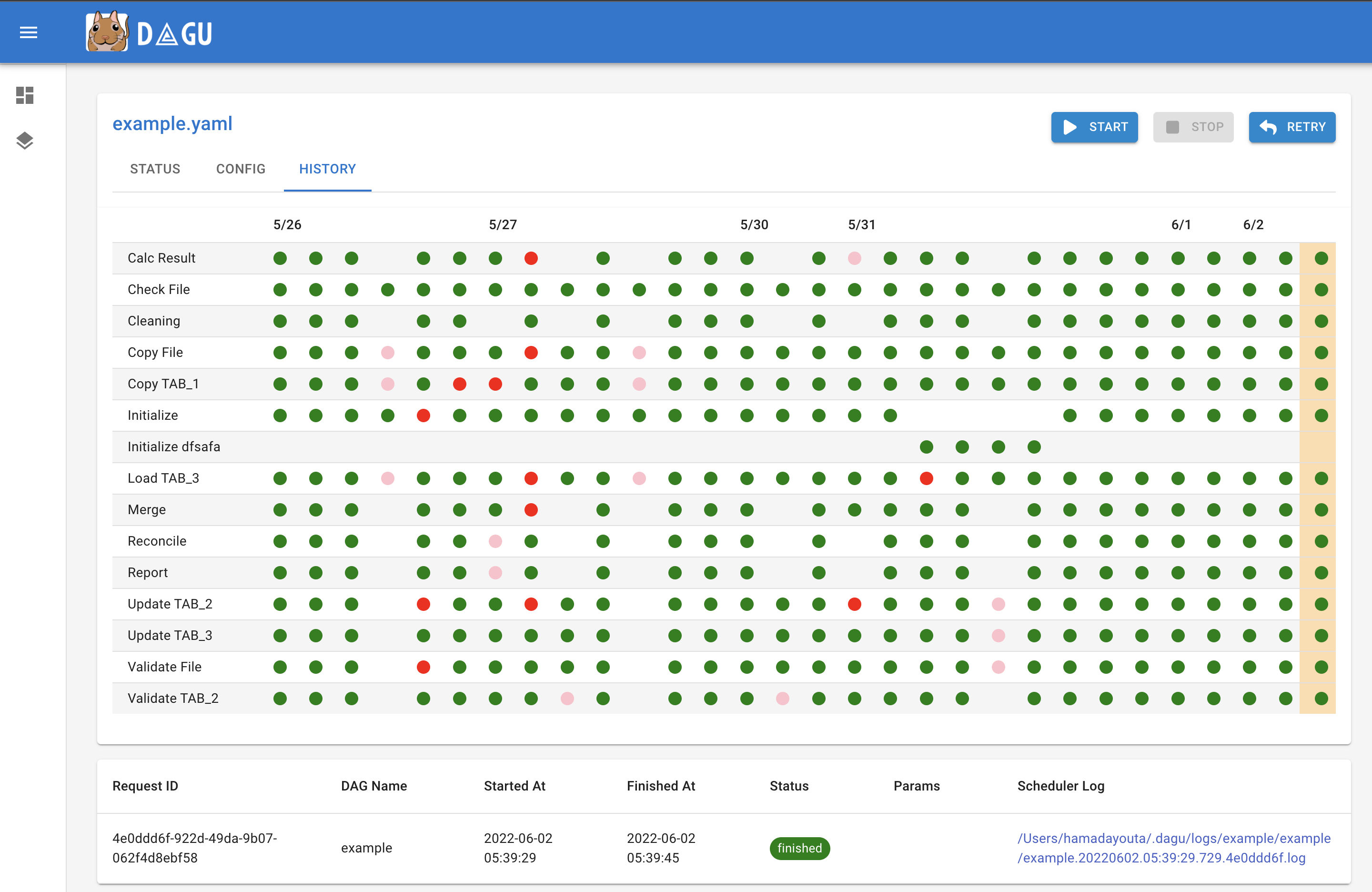

Execution History: It shows past execution results and logs.

-

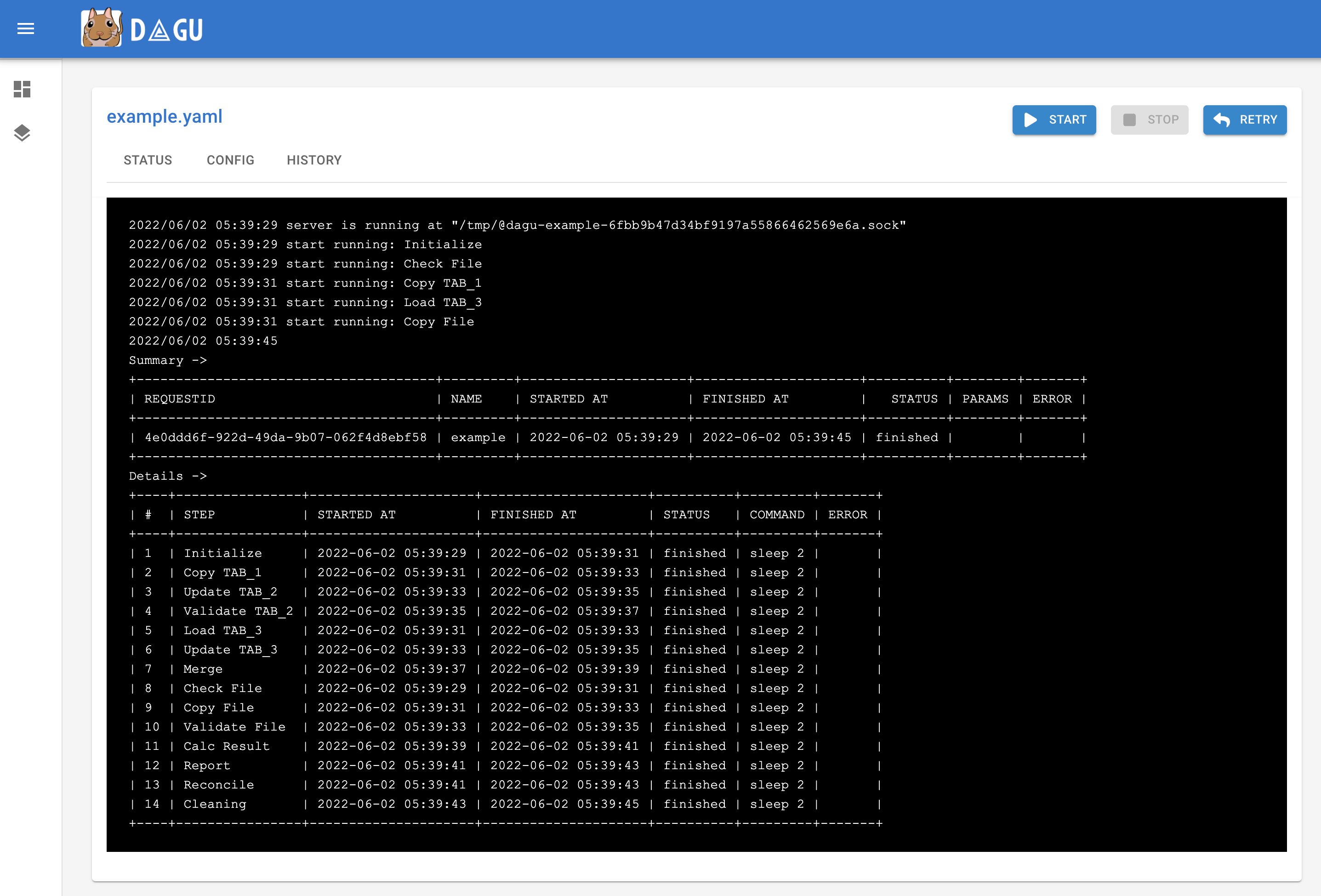

Workflow Execution Log: It shows the detail log and standard output of each execution and steps.

A minimal workflow definition is as simple as this:

```yaml
steps:
  - name: step 1
    command: echo hello
  - name: step 2
    command: echo world
    depends:
      - step 1
```

The `script` field provides a way to run arbitrary snippets of code in any language.
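One way to picture a `script` step is that the snippet is handed to the interpreter named in `command`. The sketch below assumes the script is written to a temporary file whose path is passed to that interpreter; `run_script_step` is a hypothetical helper for illustration, not Dagu's API or necessarily its mechanism.

```python
import os
import subprocess
import tempfile


def run_script_step(command: str, script: str) -> str:
    """Hypothetical helper: run `script` with interpreter `command`, return stdout.

    Assumption: the snippet is saved to a temporary file and that file's
    path is appended to the command's arguments.
    """
    # Write the snippet to a file so any interpreter (sh, bash, python3) can read it.
    with tempfile.NamedTemporaryFile("w", delete=False) as f:
        f.write(script)
        path = f.name
    try:
        result = subprocess.run(
            [command, path], capture_output=True, text=True, check=True
        )
        return result.stdout
    finally:
        os.remove(path)


# The YAML example uses bash; sh runs this POSIX snippet the same way.
out = run_script_step("sh", 'cd /tmp\necho "hello world" > hello\ncat hello\n')
print(out.strip())  # hello world
```

Passing a file path rather than an inline `-c` string keeps the sketch interpreter-agnostic, which is what "any language" requires.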

```yaml
steps:
  - name: step 1
    command: "bash"
    script: |
      cd /tmp
      echo "hello world" > hello
      cat hello
    output: RESULT
  - name: step 2
    command: echo ${RESULT} # hello world
    depends:
      - step 1
```

You can define environment variables with the `env` field and refer to them as `${NAME}`.
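Entries in `env` can refer to variables defined earlier in the list, as `SOME_FILE` does with `SOME_DIR`. A minimal sketch of that ordered expansion, assuming `${NAME}` references resolve against a base environment plus the entries already processed; `expand_env` is a hypothetical helper and `/home/alice` is a made-up home directory.

```python
from __future__ import annotations

from string import Template


def expand_env(entries: list[tuple[str, str]], base: dict[str, str]) -> dict[str, str]:
    """Hypothetical helper: expand env entries in order.

    Each entry may reference the base environment or any earlier entry.
    Unknown references are left untouched by safe_substitute.
    """
    env = dict(base)
    for name, value in entries:
        # Expand against everything defined so far, then publish the result.
        env[name] = Template(value).safe_substitute(env)
    return env


entries = [
    ("SOME_DIR", "${HOME}/batch"),
    ("SOME_FILE", "${SOME_DIR}/some_file"),
]
env = expand_env(entries, {"HOME": "/home/alice"})
print(env["SOME_FILE"])  # /home/alice/batch/some_file
```

Processing entries in list order is what lets a later entry build on an earlier one; reversing the two entries would leave `${SOME_DIR}` unexpanded in this sketch.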

```yaml
env:
  - SOME_DIR: ${HOME}/batch
  - SOME_FILE: ${SOME_DIR}/some_file
steps:
  - name: some task in some dir
    dir: ${SOME_DIR}
    command: python main.py ${SOME_FILE}
```

You can define parameters using the `params` field and refer to each parameter as `$1`, `$2`, etc. Parameters can also be command substitutions or environment variables. They can be overridden with the `--params=` option of the `start` command.
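The sketch below shows one way a `params` string could be split into positional (`$1`, `$2`) and named (`$ONE`) variables. It assumes shell-style word splitting, that command substitution has already been applied, and that a `--params=` value replaces the default string entirely; `parse_params` is hypothetical.

```python
from __future__ import annotations

import shlex


def parse_params(default: str, override: str | None = None) -> dict[str, str]:
    """Hypothetical helper: turn a params string into variables.

    Assumptions: an override (from --params=) replaces the default string,
    and tokens of the form NAME=value become named parameters.
    """
    text = override if override is not None else default
    variables: dict[str, str] = {}
    position = 0
    for token in shlex.split(text):
        name, sep, value = token.partition("=")
        if sep and name.isidentifier():
            variables[name] = value  # named parameter, referred to as $NAME
        else:
            position += 1
            variables[str(position)] = token  # positional parameter, $1, $2, ...
    return variables


print(parse_params("param1 param2"))             # {'1': 'param1', '2': 'param2'}
print(parse_params("param1 param2", "foo bar"))  # {'1': 'foo', '2': 'bar'}
print(parse_params("ONE=1 TWO=2"))               # {'ONE': '1', 'TWO': '2'}
```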

```yaml
params: param1 param2
steps:
  - name: some task with parameters
    command: python main.py $1 $2
```

Named parameters are also available as follows:

```yaml
params: ONE=1 TWO=`echo 2`
steps:
  - name: some task with parameters
    command: python main.py $ONE $TWO
```

You can use command substitution in field values. That is, a string enclosed in backquotes (`` ` ``) is evaluated as a command and replaced with its standard output.
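A minimal sketch of backquote substitution, assuming each backquoted segment is run through `/bin/sh` and replaced by its standard output with the trailing newline removed. `substitute_commands` is a hypothetical helper, not Dagu's implementation.

```python
import re
import subprocess

# A backquoted segment: a backtick, any non-backtick characters, a backtick.
BACKQUOTED = re.compile(r"`([^`]*)`")


def substitute_commands(value: str) -> str:
    """Hypothetical helper: replace every `command` in value with its stdout."""

    def run(match: re.Match) -> str:
        result = subprocess.run(
            match.group(1), shell=True, capture_output=True, text=True, check=True
        )
        # Drop the trailing newline that commands like `echo` and `date` print.
        return result.stdout.rstrip("\n")

    return BACKQUOTED.sub(run, value)


print(substitute_commands("TWO=`echo 2`"))  # TWO=2
print(substitute_commands("hello, today is `date '+%Y%m%d'`"))
```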

```yaml
env:
  TODAY: "`date '+%Y%m%d'`"
steps:
  - name: hello
    command: "echo hello, today is ${TODAY}"
```

Sometimes you have parts of a workflow that you only want to run under certain conditions. You can use the `preconditions` field to add conditional branches to your workflow.
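Conceptually, a precondition is met when the evaluated `condition` equals `expected`; otherwise the step is skipped, and by default the workflow does not continue past it. The sketch models that together with the `continueOn.skipped` flag. It assumes backquoted conditions are evaluated with `/bin/sh`; `Precondition`, `evaluate`, and `step_outcome` are hypothetical names.

```python
from __future__ import annotations

import subprocess
from dataclasses import dataclass


@dataclass
class Precondition:  # hypothetical model of one preconditions entry
    condition: str  # e.g. "`date '+%d'`" or a plain value
    expected: str   # e.g. "01"


def evaluate(condition: str) -> str:
    """Run a backquoted command with /bin/sh; return plain strings unchanged."""
    if len(condition) >= 2 and condition.startswith("`") and condition.endswith("`"):
        result = subprocess.run(
            condition[1:-1], shell=True, capture_output=True, text=True, check=True
        )
        return result.stdout.strip()
    return condition


def step_outcome(preconditions: list[Precondition],
                 continue_on_skipped: bool = False) -> tuple[str, bool]:
    """Return (status, downstream_may_run) for a step (simplified model)."""
    if all(evaluate(p.condition) == p.expected for p in preconditions):
        return "run", True
    # A skipped step lets later steps proceed only when continueOn.skipped is true.
    return "skipped", continue_on_skipped


monthly = [Precondition(condition="`date '+%d'`", expected="01")]
print(step_outcome(monthly))  # ('run', True) on the 1st, otherwise ('skipped', False)
```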

For example, the task below only runs on the first day of each month.

```yaml
steps:
  - name: A monthly task
    command: monthly.sh
    preconditions:
      - condition: "`date '+%d'`"
        expected: "01"
```

If you want the workflow to continue to the next step regardless of the result of the step's precondition check, use the `continueOn` field:

```yaml
steps:
  - name: A monthly task
    command: monthly.sh
    preconditions:
      - condition: "`date '+%d'`"
        expected: "01"
    continueOn:
      skipped: true
```

The `output` field can be used to set an environment variable to a step's standard output. Leading and trailing whitespace is trimmed automatically. The variable can be used in subsequent steps.
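The `output` behaviour can be sketched as: run the command, trim surrounding whitespace from its standard output, and store the result under the given name so later steps see it as an environment variable. `run_with_output` and the plain-dict variable store are assumptions for illustration.

```python
from __future__ import annotations

import os
import subprocess


def run_with_output(command: str, output_name: str, env: dict[str, str]) -> None:
    """Hypothetical helper: run command via /bin/sh, save its trimmed stdout."""
    result = subprocess.run(
        command, shell=True, capture_output=True, text=True, check=True
    )
    # Leading and trailing whitespace, including the final newline, is dropped.
    env[output_name] = result.stdout.strip()


variables: dict[str, str] = {}
run_with_output("echo foo", "FOO", variables)
print(variables["FOO"])  # foo

# A later step sees the captured value as an ordinary environment variable.
step2 = subprocess.run(
    'echo "${FOO} bar"', shell=True, capture_output=True, text=True,
    env={**os.environ, **variables},
)
print(step2.stdout.strip())  # foo bar
```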

```yaml
steps:
  - name: step 1
    command: "echo foo"
    output: FOO # will contain "foo"
```

The `stdout` field can be used to write standard output to a file.
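Redirecting a step's standard output to a file can be sketched with `subprocess.run` and an open file handle. The helper name `run_to_file` and the choice to overwrite rather than append are assumptions.

```python
import subprocess


def run_to_file(command: str, path: str) -> None:
    """Hypothetical helper: run command via /bin/sh, writing stdout to path.

    Assumption: the file is truncated (overwritten) on each run.
    """
    with open(path, "w") as out:
        subprocess.run(command, shell=True, stdout=out, check=True)


run_to_file("echo hello", "/tmp/hello")
with open("/tmp/hello") as f:
    print(repr(f.read()))  # 'hello\n'
```

Note that the newline written by `echo` is kept in the file, unlike the trimmed value stored by `output`.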

```yaml
steps:
  - name: create a file
    command: "echo hello"
    stdout: "/tmp/hello" # the content will be "hello\n"
```

It is often desirable to take action when a specific event happens, for example, when a workflow fails. To achieve this, use the `handlerOn` field.
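Handler selection can be modelled as a small dispatch that runs after the steps finish: the handler matching the final status, if defined, and then the `exit` handler, which applies whenever the execution finishes. The ordering and the `handlers_to_run` name are assumptions for illustration, not taken from Dagu's source.

```python
from __future__ import annotations

STATUSES = ("success", "failure", "cancel")


def handlers_to_run(status: str, handler_on: dict[str, dict[str, str]]) -> list[str]:
    """Hypothetical helper: handler commands to run for a final workflow status."""
    if status not in STATUSES:
        raise ValueError(f"unknown status: {status}")
    commands = []
    if status in handler_on:
        commands.append(handler_on[status]["command"])
    # The exit handler runs whenever the execution finishes, whatever the status.
    if "exit" in handler_on:
        commands.append(handler_on["exit"]["command"])
    return commands


handler_on = {
    "failure": {"command": "notify_error.sh"},
    "exit": {"command": "cleanup.sh"},
}
print(handlers_to_run("failure", handler_on))  # ['notify_error.sh', 'cleanup.sh']
print(handlers_to_run("success", handler_on))  # ['cleanup.sh']
```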

```yaml
handlerOn:
  failure:
    command: notify_error.sh
  exit:
    command: cleanup.sh
steps:
  - name: A task
    command: main.sh
```

If you want a task to run repeatedly at regular intervals, use the `repeatPolicy` field. To stop a repeating task, use the `stop` command, which stops it gracefully.
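A repeating step behaves roughly like the loop below: run, wait `intervalSec`, and repeat until a stop is requested. In this sketch a `threading.Event` stands in for the `stop` command, and the wait is interruptible so a stop takes effect without sitting out the full interval; both are assumptions about how a graceful stop could work, not Dagu's implementation.

```python
import threading
from typing import Callable


def repeat_until_stopped(task: Callable[[], None], interval_sec: float,
                         stop: threading.Event) -> int:
    """Hypothetical helper: run task every interval_sec until stop is set.

    Returns how many times the task ran.
    """
    runs = 0
    while not stop.is_set():
        task()
        runs += 1
        # Event.wait returns True as soon as stop is set, ending the loop early.
        if stop.wait(interval_sec):
            break
    return runs


stop = threading.Event()
timer = threading.Timer(0.25, stop.set)  # simulates a stop request after 0.25 s
timer.start()
count = repeat_until_stopped(lambda: None, interval_sec=0.1, stop=stop)
timer.join()
print(f"ran {count} times before stopping")
```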

```yaml
steps:
  - name: A task
    command: main.sh
    repeatPolicy:
      repeat: true
      intervalSec: 60
```

Combining these settings gives you granular control over how the workflow runs.
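Most fields in the reference below appear in earlier examples, but `retryPolicy` does not. Reading `limit: 2` as "re-run a failed step at most twice" (three attempts in total), its effect can be sketched as follows; `run_with_retries` is hypothetical, and running the attempts back to back with no delay is a simplification.

```python
from __future__ import annotations

import subprocess


def run_with_retries(command: str, limit: int) -> tuple[int, int]:
    """Hypothetical helper: run via /bin/sh, retrying up to `limit` times on failure.

    Returns (exit_code, attempts).
    """
    attempts = 0
    while True:
        attempts += 1
        code = subprocess.run(command, shell=True).returncode
        # Stop on success, or once the initial run plus `limit` retries are used up.
        if code == 0 or attempts > limit:
            return code, attempts


print(run_with_retries("exit 1", limit=2))  # (1, 3): one run plus two retries
print(run_with_retries("true", limit=2))    # (0, 1)
```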

```yaml
name: all configuration # Name (optional, default is filename)
description: run a DAG # Description
tags: daily job # Free tags (separated by comma)
env: # Environment variables
  - LOG_DIR: ${HOME}/logs
  - PATH: /usr/local/bin:${PATH}
logDir: ${LOG_DIR} # Log directory to write standard output
histRetentionDays: 3 # Execution history retention days (not for log files)
delaySec: 1 # Interval seconds between steps
maxActiveRuns: 1 # Max number of steps running in parallel
params: param1 param2 # Default parameters that can be referred to by $1, $2, ...
preconditions: # Preconditions for whether the workflow is allowed to run
  - condition: "`echo $2`" # Command or variables to evaluate
    expected: "param2" # Expected value for the condition
mailOn:
  failure: true # Send a mail when the workflow failed
  success: true # Send a mail when the workflow succeeded
MaxCleanUpTimeSec: 300 # The maximum amount of time to wait after sending a TERM signal to running steps before killing them
handlerOn: # Handlers on Success, Failure, Cancel, and Exit
  success:
    command: "echo succeed" # Command to execute when the execution succeeded
  failure:
    command: "echo failed" # Command to execute when the execution failed
  cancel:
    command: "echo canceled" # Command to execute when the execution was canceled
  exit:
    command: "echo finished" # Command to execute when the execution finished
steps:
  - name: some task # Step name
    description: some task # Step description
    dir: ${HOME}/logs # Working directory
    command: bash # Command and parameters
    stdout: /tmp/outfile # File to write standard output to
    output: RESULT_VARIABLE # Variable to store standard output in
    script: |
      echo "any script"
    mailOn:
      failure: true # Send a mail when the step failed
      success: true # Send a mail when the step finished
    continueOn:
      failure: true # Continue to the next step whether or not this step failed
      skipped: true # Continue to the next step whether or not the preconditions are met
    retryPolicy: # Retry policy for the step
      limit: 2 # Retry up to 2 times when the step failed
    repeatPolicy: # Repeat policy for the step
      repeat: true # Boolean whether to repeat this step
      intervalSec: 60 # Interval time to repeat the step in seconds
    preconditions: # Preconditions for whether the step is allowed to run
      - condition: "`echo $1`" # Command or variables to evaluate
        expected: "param1" # Expected value for the condition
```

The global configuration file `~/.dagu/config.yaml` is useful for gathering common settings, such as `logDir` or `env`.

You can customize the admin web UI with environment variables.

- `DAGU__DATA` - path to the directory for internal use by Dagu (default: `~/.dagu/data`)
- `DAGU__LOGS` - path to the directory for logging (default: `~/.dagu/logs`)
- `DAGU__ADMIN_PORT` - port number for the web UI (default: `8080`)
- `DAGU__ADMIN_NAVBAR_COLOR` - navigation header color for the web UI (optional)
- `DAGU__ADMIN_NAVBAR_TITLE` - navigation header title for the web UI (optional)
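How these variables fall back to their defaults can be sketched as below. The defaults are the ones listed above; `admin_settings` is hypothetical, and converting the port to an integer and returning `None` for unset navbar options are assumptions.

```python
from __future__ import annotations

import os
from typing import Mapping


def admin_settings(environ: Mapping[str, str]) -> dict[str, object]:
    """Hypothetical helper: resolve DAGU__* variables, falling back to defaults."""
    home = os.path.expanduser("~")
    return {
        "data": environ.get("DAGU__DATA", os.path.join(home, ".dagu", "data")),
        "logs": environ.get("DAGU__LOGS", os.path.join(home, ".dagu", "logs")),
        "port": int(environ.get("DAGU__ADMIN_PORT", "8080")),
        # Optional settings stay None when unset.
        "navbar_color": environ.get("DAGU__ADMIN_NAVBAR_COLOR"),
        "navbar_title": environ.get("DAGU__ADMIN_NAVBAR_TITLE"),
    }


print(admin_settings({})["port"])                          # 8080
print(admin_settings({"DAGU__ADMIN_PORT": "9000"})["port"])  # 9000
```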

To configure the admin web UI with a file, create `~/.dagu/admin.yaml`:

```yaml
host: <hostname for web UI address> # default value is 127.0.0.1
port: <port number for web UI address> # default value is 8000
dags: <the location of DAG configuration files> # default value is current working directory
command: <Absolute path to the dagu binary> # [optional] required if the dagu command is not in $PATH
isBasicAuth: <true|false> # [optional] basic auth config
basicAuthUsername: <username for basic auth of web UI> # [optional] basic auth config
basicAuthPassword: <password for basic auth of web UI> # [optional] basic auth config
```

Creating a global configuration file, `~/.dagu/config.yaml`, is a convenient way to organize shared settings:

```yaml
logDir: <path-to-write-log> # log directory to write standard output
histRetentionDays: 3 # history retention days
smtp: # [optional] mail server configuration to send notifications
  host: <smtp server host>
  port: <smtp server port>
errorMail: # [optional] mail configuration for error-level
  from: <from address>
  to: <to address>
  prefix: <prefix of mail subject>
infoMail: # [optional] mail configuration for info-level
  from: <from address>
  to: <to address>
  prefix: <prefix of mail subject>
```

For the REST API interface, please refer to the REST API Docs.

Feel free to contribute in any way you want. Share ideas, questions, submit issues, and create pull requests. Thanks!

Dagu stores execution history data in the directory given by the `DAGU__DATA` environment variable. The default location is `$HOME/.dagu/data`.

Dagu stores log files in the directory given by the `DAGU__LOGS` environment variable. The default location is `$HOME/.dagu/logs`. You can override this setting with the `logDir` field in a YAML file.

The default retention period for execution history is seven days. You can override this setting with the `histRetentionDays` field in a YAML file.
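Retention can be pictured as pruning run records older than `histRetentionDays`. The sketch assumes each record carries a finish timestamp and uses seven days as the default, per the paragraph above; `prune_history` is hypothetical and works on in-memory values rather than Dagu's data directory.

```python
from __future__ import annotations

from datetime import datetime, timedelta


def prune_history(finished_at: list[datetime], now: datetime,
                  retention_days: int = 7) -> list[datetime]:
    """Hypothetical helper: keep runs that finished within retention_days."""
    cutoff = now - timedelta(days=retention_days)
    return [t for t in finished_at if t >= cutoff]


now = datetime(2022, 5, 10)
runs = [datetime(2022, 5, 1), datetime(2022, 5, 4), datetime(2022, 5, 9)]
print(prune_history(runs, now))                    # keeps May 4 and May 9
print(prune_history(runs, now, retention_days=3))  # keeps May 9 only
```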

You can change the status of any task to failed. When you then retry the workflow, it will execute the failed task and all subsequent tasks.
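The tasks re-executed by such a retry can be computed from the `depends` graph: the failed tasks plus everything downstream of them, in dependency order. A minimal sketch using Kahn's topological sort; `steps_to_retry`, the dict-of-dependencies input, and the step names are hypothetical, not Dagu's internal representation.

```python
from __future__ import annotations

from collections import deque


def steps_to_retry(depends: dict[str, list[str]], failed: set[str]) -> list[str]:
    """Hypothetical helper: failed steps and all downstream steps, in run order.

    depends maps each step name to the names of the steps it depends on.
    """
    # Invert the graph: for each step, which steps depend on it?
    dependents: dict[str, list[str]] = {name: [] for name in depends}
    for name, upstream in depends.items():
        for dep in upstream:
            dependents[dep].append(name)

    # Collect everything reachable downstream from the failed steps.
    selected = set(failed)
    queue = deque(failed)
    while queue:
        for child in dependents[queue.popleft()]:
            if child not in selected:
                selected.add(child)
                queue.append(child)

    # Kahn's algorithm over the selected subgraph yields a valid run order.
    indegree = {n: sum(d in selected for d in depends[n]) for n in selected}
    ready = deque(sorted(n for n, deg in indegree.items() if deg == 0))
    order = []
    while ready:
        name = ready.popleft()
        order.append(name)
        for child in sorted(dependents[name]):
            if child in selected:
                indegree[child] -= 1
                if indegree[child] == 0:
                    ready.append(child)
    return order


depends = {
    "extract": [],
    "transform": ["extract"],
    "load": ["transform"],
    "report": ["load"],
}
print(steps_to_retry(depends, {"transform"}))  # ['transform', 'load', 'report']
```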

Dagu does not have a built-in scheduler yet; it is meant to be used with cron or other schedulers. If you could implement a scheduler daemon and create a PR, it would be greatly appreciated.

Dagu uses Unix sockets to communicate with running processes.

This project is licensed under the GNU GPLv3 - see the LICENSE.md file for details.

Made with contrib.rocks.