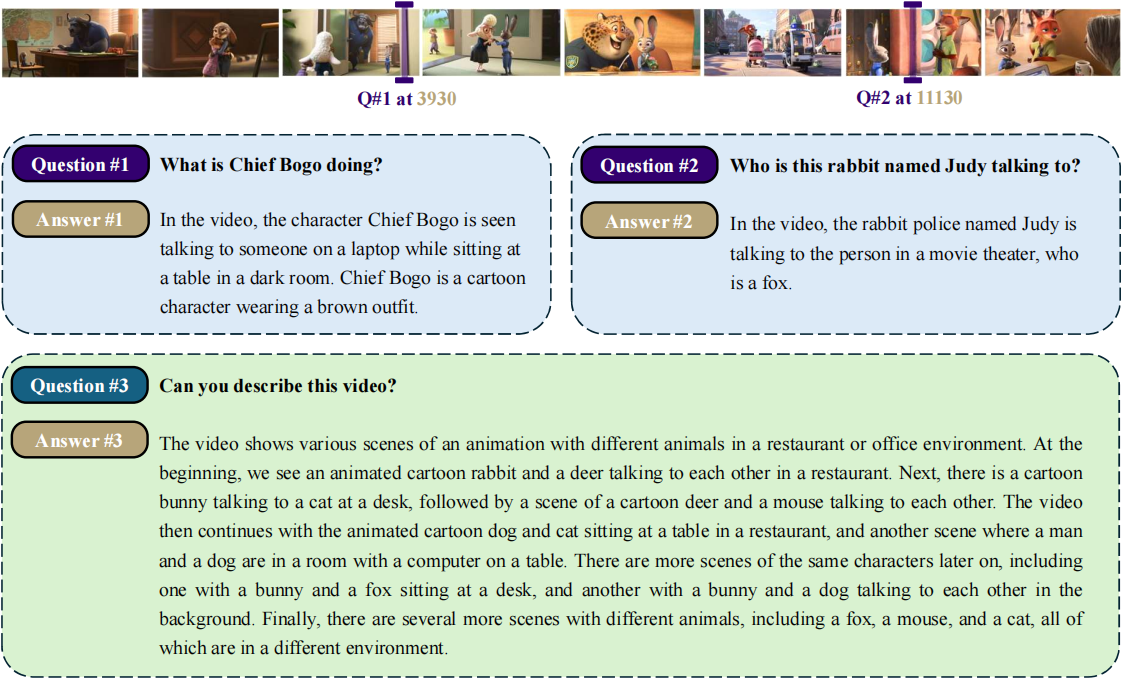

MovieChat: From Dense Token to Sparse Memory for Long Video Understanding

Enxin Song*, Wenhao Chai*, Guanhong Wang*, Yucheng Zhang, Haoyang Zhou, Feiyang Wu, Xun Guo, Tian Ye, Yan Lu, Jenq-Neng Hwang, Gaoang Wang✉️

arXiv 2023.

MovieChat can handle videos with more than 10K frames on a 24GB graphics card. Its average increase in GPU memory cost per frame is roughly 10,000× lower than that of other methods (21.3KB/f versus ~200MB/f).
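As a rough sanity check of the figures above, the ratio and the total memory growth over a 10K-frame video can be worked out directly. This is only a sketch: it assumes decimal units (1 KB = 1,000 bytes, 1 MB = 1,000,000 bytes) and treats "~200MB/f" as exactly 200 MB. The constant and function names are illustrative and do not come from the repository.

```python
# Per-frame GPU memory growth quoted above, in bytes.
# Assumption: decimal units, and "~200MB/f" treated as exactly 200 MB.
MOVIECHAT_BYTES_PER_FRAME = 21.3e3   # 21.3 KB per frame
OTHERS_BYTES_PER_FRAME = 200e6       # ~200 MB per frame


def memory_growth_ratio(ours: float, theirs: float) -> float:
    """How many times larger the other methods' per-frame growth is."""
    return theirs / ours


def total_growth_mb(bytes_per_frame: float, num_frames: int) -> float:
    """Total memory growth in MB after processing num_frames frames."""
    return bytes_per_frame * num_frames / 1e6


ratio = memory_growth_ratio(MOVIECHAT_BYTES_PER_FRAME, OTHERS_BYTES_PER_FRAME)
ours_mb = total_growth_mb(MOVIECHAT_BYTES_PER_FRAME, 10_000)
theirs_mb = total_growth_mb(OTHERS_BYTES_PER_FRAME, 10_000)

print(f"ratio: about {ratio:.0f}x")
print(f"growth over 10K frames: {ours_mb:.0f} MB vs {theirs_mb:.0f} MB")
```

Under these assumptions the ratio lands slightly below 10,000, which matches the order-of-magnitude claim, and 10K frames add on the order of a couple of hundred megabytes on top of the model's base footprint.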

- [2023.8.1] 📃 We release the paper.

- [2023.7.31] We release eval code and instructions for short video QA on MSVD-QA, MSRVTT-QA and ActivityNet-QA.

- [2023.7.29] We release Gradio demo of MovieChat.

- [2023.7.22] We release source code of MovieChat.

First, create a conda environment:

conda env create -f environment.yml

conda activate moviechat

Before using the repository, make sure you have obtained the following checkpoints:

- Get the original LLaMA weights in the Hugging Face format by following the instructions here.

- Download Vicuna delta weights 👉 [7B] (Note: we use v0 weights instead of v1.1 weights).

- Use the following command to add delta weights to the original LLaMA weights to obtain the Vicuna weights:

python apply_delta.py \
    --base ckpt/LLaMA/7B_hf \
    --target ckpt/Vicuna/7B \
    --delta ckpt/Vicuna/vicuna-7b-delta-v0

- Download the MiniGPT-4 model (trained linear layer) from this link.

- Download pretrained weights to run MovieChat with Vicuna-7B as language decoder locally from this link.
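Conceptually, the delta-weight step in the list above adds each delta tensor to the matching base tensor, element by element. The sketch below illustrates that idea on plain Python lists. It is not the code in apply_delta.py, it ignores details such as vocabulary resizing, and the function name `apply_delta_sketch` and the toy parameter names are hypothetical.

```python
from typing import Dict, List

# Toy stand-in for a state dict: parameter name -> flat list of values.
Weights = Dict[str, List[float]]


def apply_delta_sketch(base: Weights, delta: Weights) -> Weights:
    """Return target weights where target[name] = base[name] + delta[name]."""
    target: Weights = {}
    for name, delta_values in delta.items():
        if name not in base:
            raise KeyError(f"parameter {name!r} missing from base weights")
        base_values = base[name]
        if len(base_values) != len(delta_values):
            raise ValueError(f"size mismatch for parameter {name!r}")
        # Element-wise sum of the base value and its delta.
        target[name] = [b + d for b, d in zip(base_values, delta_values)]
    return target


# Hypothetical toy weights, chosen only to make the arithmetic easy to follow.
base = {"layer.weight": [1.0, 2.0], "layer.bias": [0.5]}
delta = {"layer.weight": [0.25, -0.5], "layer.bias": [0.5]}
target = apply_delta_sketch(base, delta)
print(target)
```

This is why both the original LLaMA weights and the delta weights are needed: neither is usable as Vicuna on its own.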

Next, set the llama_model, llama_proj_model and ckpt fields in eval_configs/MovieChat.yaml.
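Before launching inference, it can help to confirm that the three fields are actually filled in. The following is a minimal sketch that scans the YAML text with a regular expression instead of parsing it, so it makes no assumption about where the keys are nested. The helper name `find_missing_keys` and the sample config text are hypothetical, and a value such as an empty quoted string would still count as set.

```python
import re
from typing import Iterable, List

REQUIRED_KEYS = ("llama_model", "llama_proj_model", "ckpt")


def find_missing_keys(yaml_text: str,
                      keys: Iterable[str] = REQUIRED_KEYS) -> List[str]:
    """Return the keys that have no non-empty value on their own line."""
    missing = []
    for key in keys:
        # "key: value" at any indentation; the value must start on the same
        # line and must not be a comment.
        pattern = re.compile(
            rf"^[ \t]*{re.escape(key)}[ \t]*:[ \t]*(?!#)\S",
            re.MULTILINE,
        )
        if not pattern.search(yaml_text):
            missing.append(key)
    return missing


# Hypothetical config text; the real file layout may differ.
sample = """
llama_model: "ckpt/Vicuna/7B"
llama_proj_model:
ckpt: "path/to/moviechat_checkpoint.pth"
"""
print(find_missing_keys(sample))
```

To check the real file, the same function could be called on the contents of eval_configs/MovieChat.yaml read with `open(...).read()`.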

Then run the script:

python inference.py \
    --cfg-path eval_configs/MovieChat.yaml \
    --gpu-id 0 \
    --num-beams 1 \
    --temperature 1.0 \
    --text-query "What is he doing?" \
    --video-path src/examples/Cooking_cake.mp4 \
    --fragment-video-path src/video_fragment/output.mp4 \
    --cur-min 1 \
    --cur-sec 1 \
    --middle-video 1

To use global mode (understanding and question answering over the whole video), set --middle-video to 0.
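The choice between the two modes comes down to the value passed to `--middle-video`. As a sketch, the inference command could be assembled from Python as shown here. The function `build_inference_command` is a hypothetical helper: it only uses flags that appear in the command above, it passes `--cur-min` and `--cur-sec` in both modes (whether global mode reads them is not stated here), and it builds the command without running it.

```python
import shlex
from typing import List


def build_inference_command(video_path: str,
                            text_query: str,
                            global_mode: bool,
                            cur_min: int = 1,
                            cur_sec: int = 1) -> List[str]:
    """Build the argument list for inference.py (hypothetical helper)."""
    return [
        "python", "inference.py",
        "--cfg-path", "eval_configs/MovieChat.yaml",
        "--gpu-id", "0",
        "--num-beams", "1",
        "--temperature", "1.0",
        "--text-query", text_query,
        "--video-path", video_path,
        "--fragment-video-path", "src/video_fragment/output.mp4",
        "--cur-min", str(cur_min),
        "--cur-sec", str(cur_sec),
        # 0 selects global mode; 1 is the value used in the command above.
        "--middle-video", "0" if global_mode else "1",
    ]


cmd = build_inference_command(
    "src/examples/Cooking_cake.mp4", "What is he doing?", global_mode=True
)
# shlex.join quotes arguments with spaces so the line is shell-safe.
print(shlex.join(cmd))
```

The resulting list can be handed to `subprocess.run(cmd, check=True)` from the repository root once the checkpoints and config are in place.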

We are grateful to the following awesome projects, from which MovieChat arose:

- Video-LLaMA: An Instruction-tuned Audio-Visual Language Model for Video Understanding

- Token Merging: Your ViT but Faster

- XMem: Long-Term Video Object Segmentation with an Atkinson-Shiffrin Memory Model

- MiniGPT-4: Enhancing Vision-language Understanding with Advanced Large Language Models

- FastChat: An Open Platform for Training, Serving, and Evaluating Large Language Model based Chatbots

- BLIP-2: Bootstrapping Language-Image Pre-training with Frozen Image Encoders and Large Language Models

- EVA-CLIP: Improved Training Techniques for CLIP at Scale

- LLaMA: Open and Efficient Foundation Language Models

- VideoChat: Chat-Centric Video Understanding

- LLaVA: Large Language and Vision Assistant

Our MovieChat is just a research preview intended for non-commercial use only. You must NOT use our MovieChat for any illegal, harmful, violent, racist, or sexual purposes. You are strictly prohibited from engaging in any activity that will potentially violate these guidelines.

If you find MovieChat useful for your research and applications, please cite using this BibTeX:

@article{song2023moviechat,
  title={MovieChat: From Dense Token to Sparse Memory for Long Video Understanding},
  author={Song, Enxin and Chai, Wenhao and Wang, Guanhong and Zhang, Yucheng and Zhou, Haoyang and Wu, Feiyang and Guo, Xun and Ye, Tian and Lu, Yan and Hwang, Jenq-Neng and others},
  journal={arXiv preprint arXiv:2307.16449},
  year={2023}
}