Custom ComfyUI Nodes for interacting with Ollama using the ollama python client.

Integrate the power of LLMs into ComfyUI workflows easily or just experiment with LLM inference.

To use these nodes, you need a running Ollama server that is reachable from the host running ComfyUI.
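Before wiring up a workflow, it can help to confirm that the server really is reachable from the ComfyUI host. The following is a minimal sketch, not part of this project: the helper names are hypothetical, it assumes Ollama's default port 11434 and uses the `/api/tags` endpoint (which lists local models) as a cheap health check.

```python
import http.client
import urllib.request


def ollama_base_url(host: str = "localhost", port: int = 11434) -> str:
    """Build the base URL of an Ollama server (11434 is the default port)."""
    return f"http://{host}:{port}"


def is_ollama_reachable(base_url: str, timeout: float = 3.0) -> bool:
    """Return True if GET <base_url>/api/tags answers with HTTP 200."""
    try:
        with urllib.request.urlopen(f"{base_url}/api/tags", timeout=timeout) as resp:
            return resp.status == 200
    except (OSError, ValueError, http.client.HTTPException):
        # Connection errors, timeouts and HTTP errors are OSError subclasses;
        # ValueError covers a malformed URL.
        return False
```

Calling `is_ollama_reachable(ollama_base_url("my-ollama-host"))` from the ComfyUI machine (the host name here is a placeholder) should return `True` once the server is up and the port is open.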

Install ollama server on the desired host

Install on Linux

curl -fsSL https://ollama.com/install.sh | sh

CPU only

docker run -d -p 11434:11434 -v ollama:/root/.ollama --name ollama ollama/ollama

NVIDIA GPU

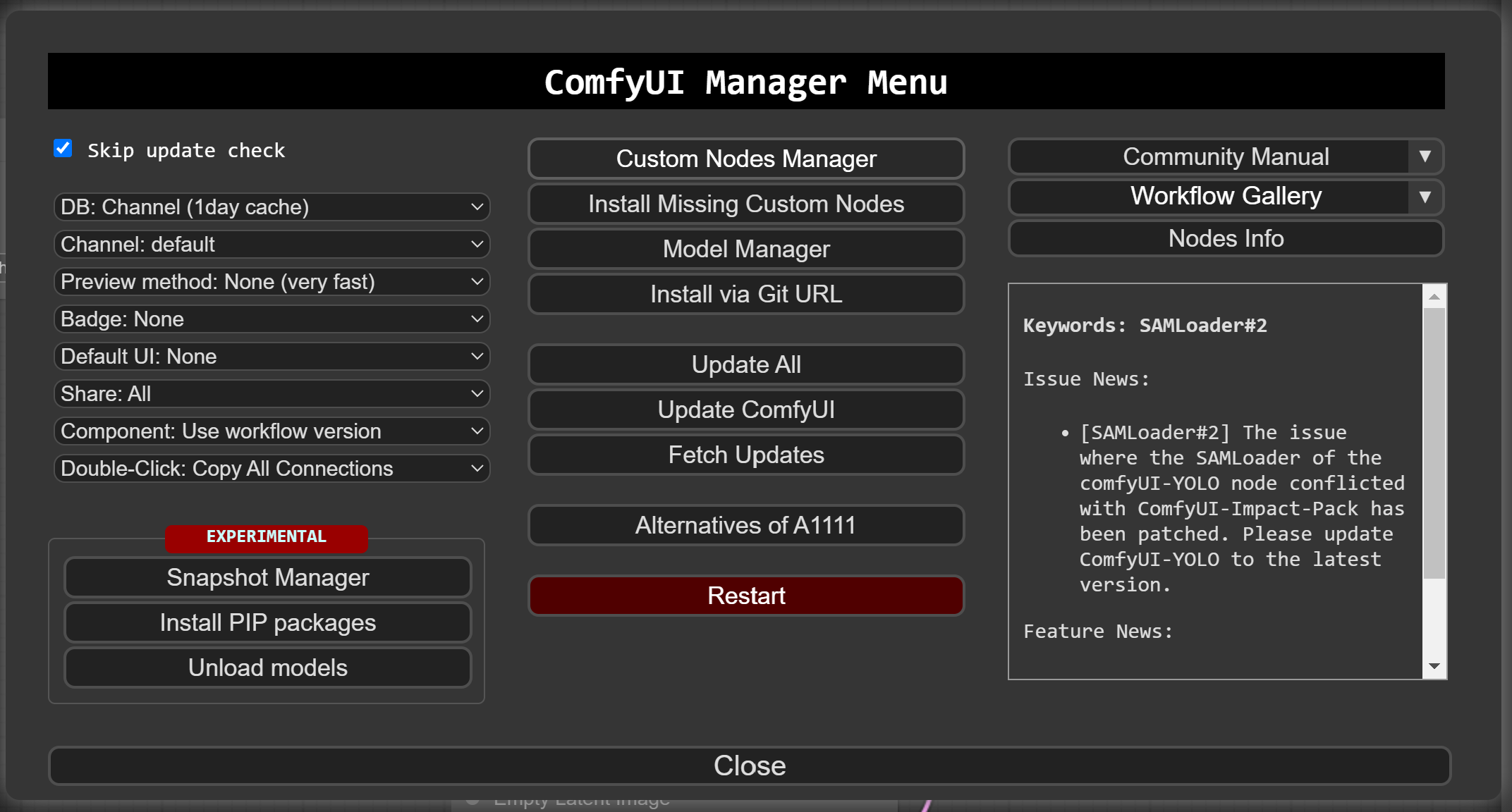

docker run -d -p 11434:11434 --gpus=all -v ollama:/root/.ollama --name ollama ollama/ollama

Use the ComfyUI Manager "Custom Node Manager":

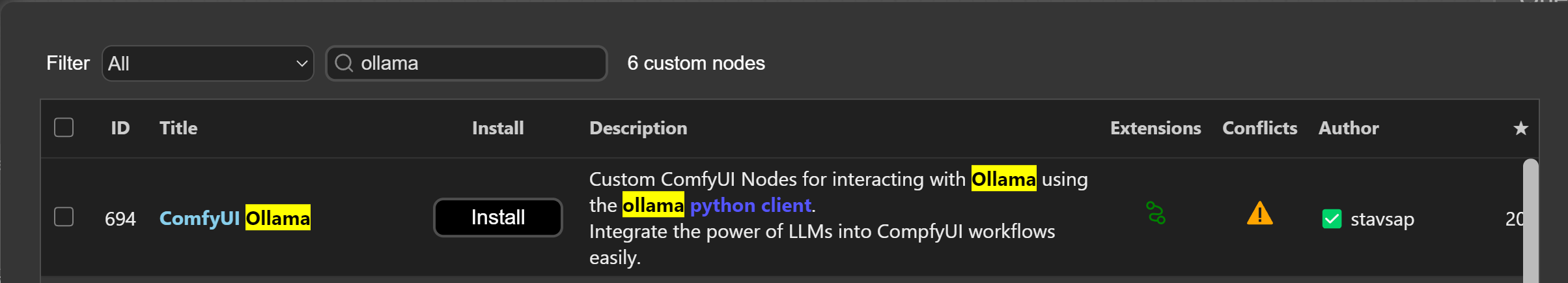

Search for ollama and select the one by stavsap.

Or

- git clone into the custom_nodes folder inside your ComfyUI installation, or download as zip and unzip the contents to custom_nodes/compfyui-ollama.
- pip install -r requirements.txt
- Start/restart ComfyUI

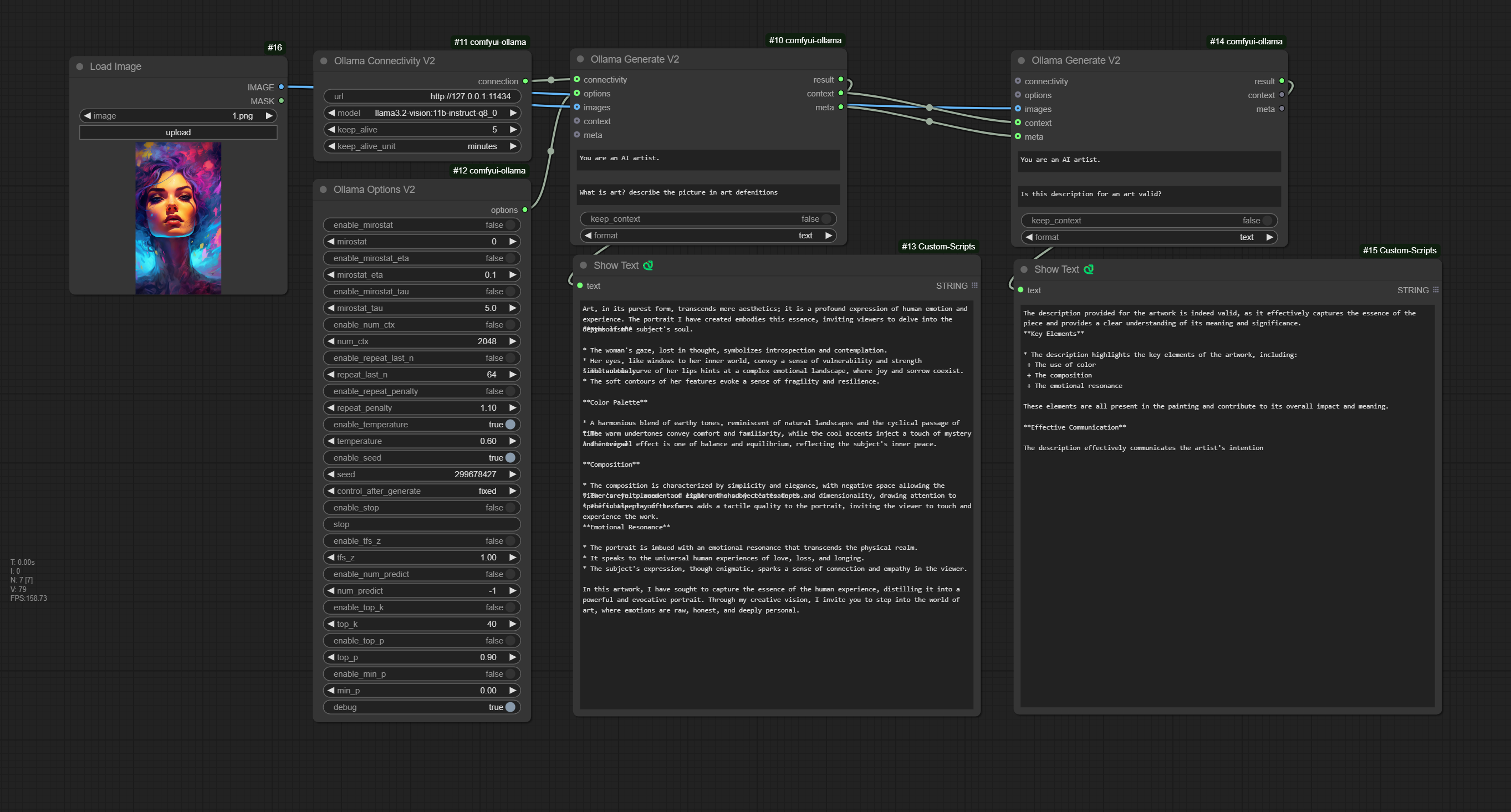

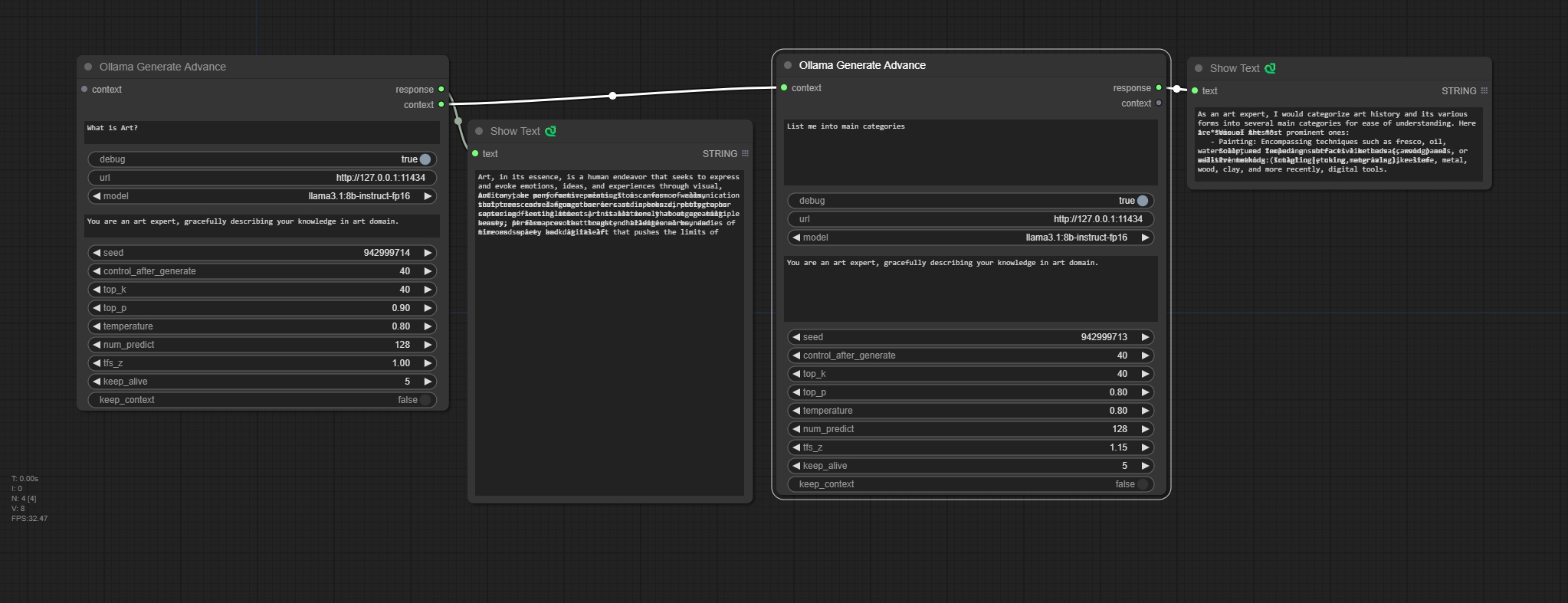

Release of additional V2 Nodes, for more modular and controllable chained flows.

A node that lets you set the system prompt and the prompt.

Saving the context locally in the node can be enabled or disabled.

Inputs:

- OllamaConnectivityV2 (optional)

- OllamaOptionsV2 (optional)

- images (optional)

- context (optional), a context from another OllamaConnectivityV2 node

- meta (optional), passes the metadata of the OllamaConnectivityV2 and OllamaOptionsV2 from another OllamaGenerateV2 node.

Notes:

- For this node to be operational, either OllamaConnectivityV2 or meta must be connected.

- If images are provided and nodes are chained through meta, the images must also be passed to each subsequent OllamaConnectivityV2 node.
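The rule that either a direct connectivity input or meta must be present can be sketched as a small resolution step. This is an illustration only, not the node's actual code: the dictionary layout, the `url` and `model` keys, the function names, and the choice to let direct inputs take precedence over meta are all assumptions.

```python
from typing import Optional, Tuple


def make_meta(connectivity: dict, options: Optional[dict] = None) -> dict:
    """Bundle settings so a downstream node can reuse them (hypothetical layout)."""
    return {"connectivity": connectivity, "options": options}


def resolve_settings(
    connectivity: Optional[dict] = None,
    options: Optional[dict] = None,
    meta: Optional[dict] = None,
) -> Tuple[dict, Optional[dict]]:
    """Use direct inputs when given, otherwise fall back to the values in meta."""
    meta = meta or {}
    if connectivity is None:
        connectivity = meta.get("connectivity")
    if options is None:
        options = meta.get("options")
    if connectivity is None:
        # Mirrors the note above: connectivity or meta is required.
        raise ValueError("Either a connectivity input or meta must be provided")
    return connectivity, options


# First node gets connectivity directly; the second one only receives meta.
first = resolve_settings(connectivity={"url": "http://localhost:11434", "model": "llava"})
second = resolve_settings(meta=make_meta(*first))
```

In this sketch `second` ends up with the same settings as `first`, which is the point of passing meta along a chain.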

A node responsible only for the connectivity to the Ollama server.

A node for full control of the ollama api options.

Each option has its own enable/disable toggle. An option takes effect only when it is enabled: only enabled options are passed in the API call to the Ollama server.

Ollama API options can be found in this table.

Note: There is an additional option, debug, that enables debug printing in the CLI. It is not part of the Ollama API.
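The enable/disable behaviour can be pictured as a filter over the option values. The sketch below is an assumption about the shape of the data, not the node's implementation: each entry is a hypothetical `(enabled, value)` pair, the function name is made up, and `debug` is handled separately because it is not sent to Ollama.

```python
from typing import Any, Dict, Tuple


def enabled_options(
    toggles: Dict[str, Tuple[bool, Any]], debug: bool = False
) -> Dict[str, Any]:
    """Keep only the options whose enable flag is set."""
    options = {name: value for name, (enabled, value) in toggles.items() if enabled}
    if debug:
        # Debug output stays local; the flag itself is never added to `options`.
        print(f"options sent to Ollama: {options}")
    return options


# temperature and seed are enabled, top_k is configured but disabled.
toggles = {
    "temperature": (True, 0.7),
    "top_k": (False, 40),
    "seed": (True, 42),
}
print(enabled_options(toggles))  # {'temperature': 0.7, 'seed': 42}
```

Disabled options are simply left out of the request, so the server falls back to its own defaults for them.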

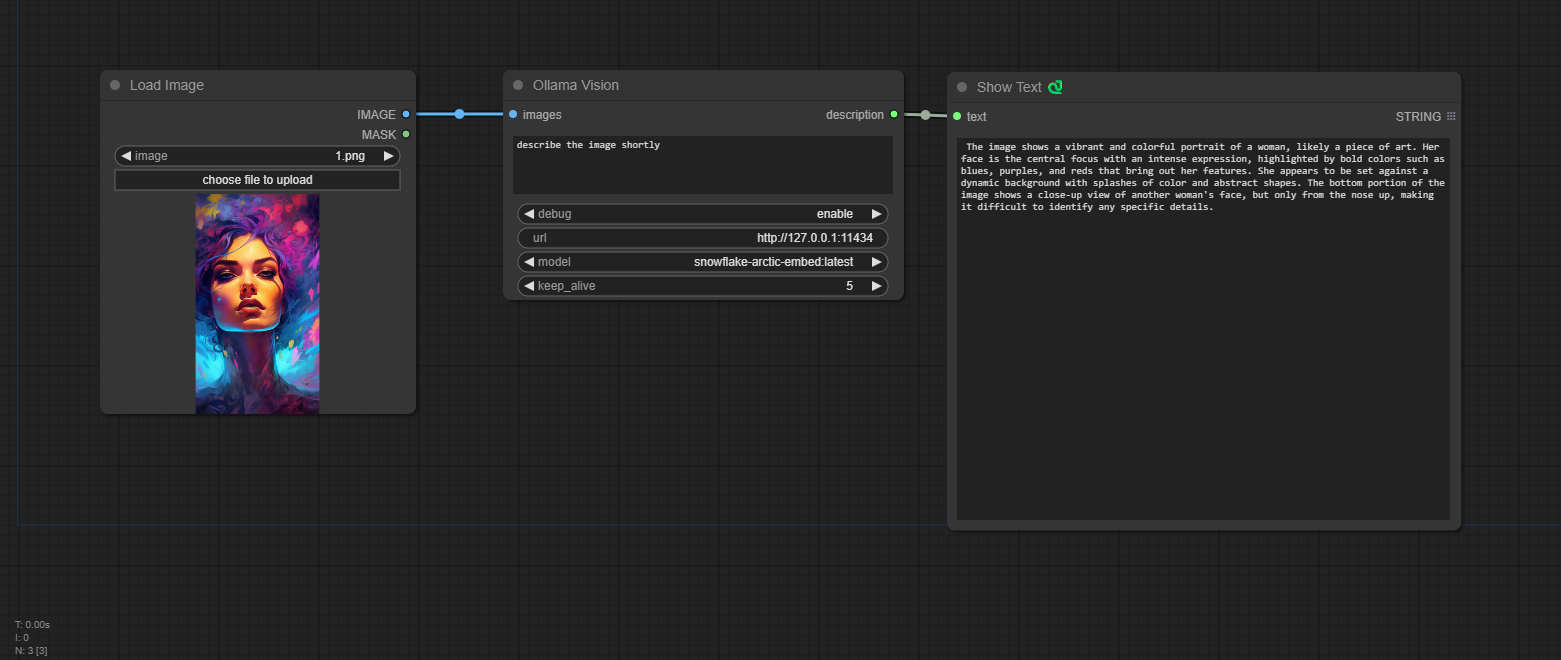

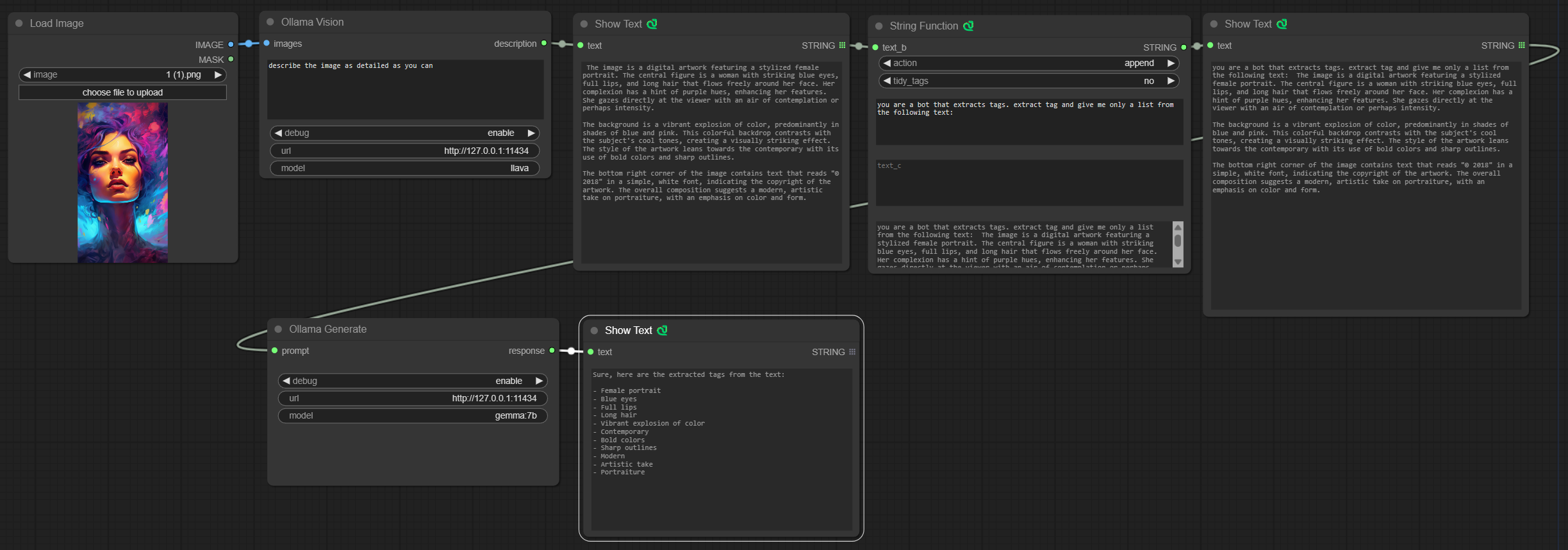

A node that lets you query input images.

The model name should refer to a model with vision abilities, for example: https://ollama.com/library/llava.
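For orientation, this is roughly what an image query looks like at the Ollama API level, where images are sent as base64 strings in the `images` field of a generate request. It is a hedged sketch, not the node's code: the function name is hypothetical and the image bytes below are a placeholder rather than a real picture.

```python
import base64
from typing import List


def build_vision_payload(model: str, prompt: str, images: List[bytes]) -> dict:
    """Build a JSON-serialisable body for POST /api/generate with images attached."""
    return {
        "model": model,  # should be a vision-capable model such as llava
        "prompt": prompt,
        "images": [base64.b64encode(img).decode("ascii") for img in images],
        "stream": False,  # ask for a single response object
    }


# Placeholder bytes stand in for the contents of an image file.
payload = build_vision_payload("llava", "Describe this image.", [b"hello"])
```

A non-vision model will typically ignore or mishandle the `images` field, which is why the model choice matters here.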

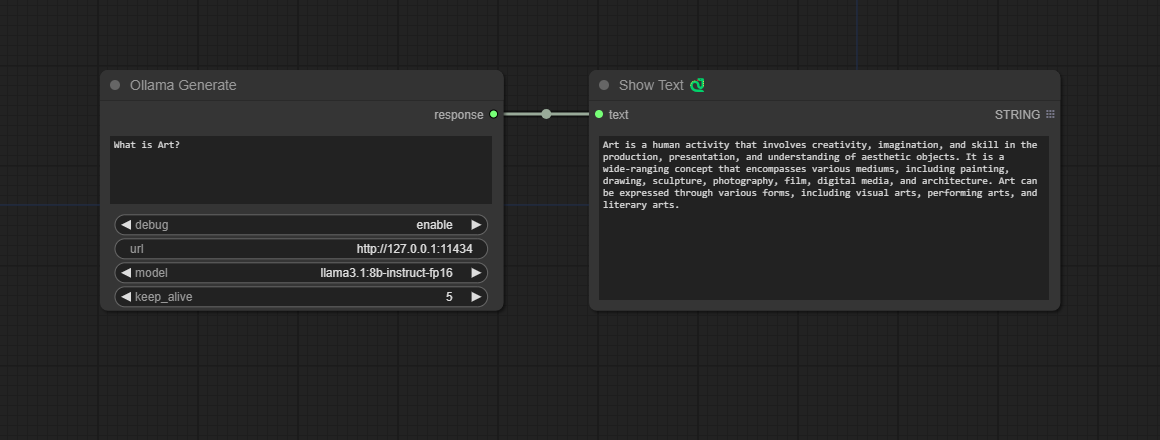

A node that lets you query an LLM with a given prompt.

A node that lets you query an LLM with a given prompt and tunable parameters, with the ability to preserve context for chaining generate calls.

Check the Ollama API docs for information on the parameters.

More params info
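Context preservation for chained generate calls can be sketched as follows. The generate endpoint returns a `context` value alongside `response`, and passing it back in the next request continues the conversation. Everything else here is an assumption for illustration: the function names, the `send` callable (which would wrap the real HTTP call or client), the stub that stands in for a server, and the model name `llama3`.

```python
from typing import Callable, List


def chain_generate(
    send: Callable[[dict], dict],
    model: str,
    prompts: List[str],
    keep_context: bool = True,
) -> List[str]:
    """Send prompts in order, feeding each reply's context into the next request."""
    context = None
    answers = []
    for prompt in prompts:
        payload = {"model": model, "prompt": prompt, "stream": False}
        if keep_context and context is not None:
            payload["context"] = context
        reply = send(payload)
        answers.append(reply["response"])
        context = reply.get("context")
    return answers


def fake_send(payload: dict) -> dict:
    """Stand-in for a real server: echoes the prompt and grows the context."""
    previous = payload.get("context", [])
    return {"response": f"echo: {payload['prompt']}", "context": previous + [len(previous)]}


answers = chain_generate(fake_send, "llama3", ["first question", "follow-up"])
```

With `keep_context=False` each prompt is sent on its own, which corresponds to disabling context saving in the node.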

Consider the following workflow: run a vision query on an image, then perform additional text processing with the desired LLM. In the OllamaGenerate node, set the prompt as an input.

The custom Text Nodes in the examples can be found here: https://github.com/pythongosssss/ComfyUI-Custom-Scripts